Table of Contents

- The Siri Problem Apple Couldn’t Ignore Anymore

- What Apple and Google Actually Announced on January 12

- The Money: How Much Apple Is Paying (And Why That Number Is Surprising)

- Why Not OpenAI? Why Not Anthropic?

- The Six Features Coming to Siri in 2026

- Where Things Actually Stand Right Now: iOS 26.4 and the Rollout

- The Privacy Architecture: What Apple Confirmed and What It Hasn’t

- What This Deal Actually Says About Apple’s Position in AI

- Final Verdict

The Siri Problem Apple Couldn’t Ignore Anymore

At WWDC 2024, Apple showed a version of Siri that looked genuinely different from anything it had shipped before. It could read your emails, cross-reference your calendar, understand what was on your screen, and chain tasks across multiple apps without you having to explain anything. The demos were specific and convincing.

Then iOS 18 shipped without those features. Then iOS 18.1. Then 18.2. Then 18.3. Apple ran national TV ads showing the features regardless. People bought iPhone 16s partly because of what those ads promised. The features still weren’t there. Apple eventually pulled the ads, and class-action lawsuits followed from customers who argued Apple had advertised capabilities that didn’t exist at the time of purchase.

By late 2025, it was clear inside Apple that its own AI models weren’t going to close the gap on a timeline anyone could accept. That is the context you need to understand why Apple, a company built on the principle of making everything itself, signed a multi-year deal to put Google’s technology at the center of Siri.

What Apple and Google Actually Announced on January 12

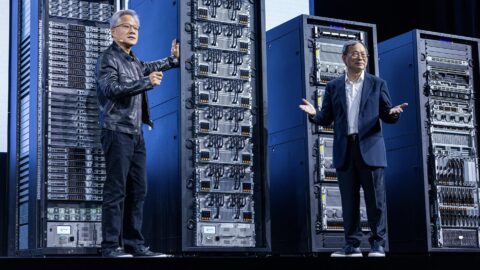

On January 12, 2026, Apple and Google shared a joint statement with CNBC confirming a multi-year partnership. The relevant passage: “Apple and Google have entered into a multi-year collaboration under which the next generation of Apple Foundation Models will be based on Google’s Gemini models and cloud technology. These models will help power future Apple Intelligence features, including a more personalized Siri coming this year.”

Apple’s own statement added: “After careful evaluation, we determined that Google’s technology provides the most capable foundation for Apple Foundation Models and we’re excited about the innovative new experiences it will unlock for our users.”

Tim Cook addressed it directly on Apple’s Q1 2026 earnings call: “We basically determined that Google’s AI technology would provide the most capable foundation for AFM, and we believe that we can unlock a lot of experiences and innovate in a key way due to the collaboration. We’ll continue to run on the device and run in Private Cloud Compute and maintain our industry-leading privacy standards in doing so.”

Notice what Cook didn’t say. He didn’t say Apple’s models were good. He said Google’s were “the most capable foundation.” That’s a specific and deliberate word choice from someone who spent years saying Apple does everything better in-house.

The Information also confirmed that internal Siri prototypes show no Google or Gemini branding anywhere. From the user’s perspective it still looks and responds as Siri. Apple retained the right to fine-tune Gemini’s outputs so responses stay consistent with Apple’s own guidelines and tone.

The Money: How Much Apple Is Paying (And Why That Number Is Surprising)

Bloomberg first reported the deal is worth approximately $1 billion annually to Google. The Financial Times described it as a “multi-billion dollar” cloud computing contract in total. Analyst Gene Munster at Deepwater Asset Management put the full estimated value at around $5 billion for Google over the deal’s life.

Bloomberg’s earlier reporting noted the arrangement includes access to a custom 1.2-trillion-parameter Gemini model built specifically for Apple. Apple’s own in-house models are considerably smaller. That parameter gap is the clearest public indicator of the capability difference that pushed Apple toward this deal.

The structural irony, as MacRumors pointed out at the time, is that this arrangement mirrors the existing deal running in the opposite direction: Google pays Apple several billion dollars annually to be the iPhone’s default search engine. Each company is now a significant revenue source for the other, which makes their competitive relationship considerably more complicated.

Why Not OpenAI? Why Not Anthropic?

Apple didn’t go straight to Google. According to 9to5Mac citing Bloomberg’s reporting, talks with Anthropic broke down around August 2025 over cost. Anthropic was asking for several billion dollars annually over multiple years, a price Apple wasn’t willing to pay.

OpenAI is a more complicated story. Reports from 9to5Mac citing the Financial Times suggested OpenAI may have declined the opportunity to power Siri, at least in part because the company was already committed to its own hardware project in collaboration with former Apple design chief Jony Ive. The precise nature of those discussions hasn’t been confirmed on the record by either company.

ChatGPT remains integrated into Siri as an opt-in fallback for certain query types under the 2024 agreement between Apple and OpenAI. Apple has not announced any changes to that arrangement. As the Gemini-powered Siri matures and becomes capable enough to handle more of those queries natively, the ChatGPT integration will likely see less use over time without any formal announcement being required.

The Six Features Coming to Siri in 2026

Apple announced three capability areas at WWDC 2024. The Information added three more in reporting published the day after the January partnership announcement. Together these represent what the Gemini-powered Siri is being built to do:

| Feature | What It Actually Does | Source |
|---|---|---|
| Personal context awareness | Siri reads your emails, messages, and calendar to answer questions about your own life. Apple’s WWDC demo showed a user asking about a family member’s flight and lunch plans, with Siri pulling the answer directly from Mail and Messages. | Apple WWDC 2024, joint statement Jan 2026 |
| On-screen awareness | Siri sees whatever is currently displayed on your screen and acts on it without you describing what’s there | Apple WWDC 2024 |
| Deeper per-app controls | Siri completes tasks inside third-party apps, not just Apple’s own, expanding what it can act on considerably | Apple WWDC 2024 |
| Conversational Q&A and task handling | More capable responses to knowledge questions, plus the ability to assist with travel booking and creating documents in Notes | The Information, January 13, 2026 |
| Conversation memory | Siri retains context from past conversations rather than starting fresh every time | The Information, January 13, 2026 |
| Proactive suggestions | Siri surfaces relevant information from Calendar and other apps without being asked first | The Information, January 13, 2026 |

The first three are targeting the spring 2026 iOS updates. The Information’s reporting indicated conversation memory and proactive suggestions are planned for announcement at WWDC in June, putting them on track for iOS 27 in September alongside new iPhone hardware.

Where Things Actually Stand Right Now: iOS 26.4 and the Rollout

As of March 13, 2026, the current stable iOS version is 26.3.1, released March 4. That update focused on bug fixes and added Studio Display support for USB-C iPhones. It contained no Gemini-powered Siri features.

iOS 26.4 is in beta testing, currently at beta 4. Bloomberg’s Mark Gurman reported in late January that Apple was targeting a spring 2026 debut for the first set of Siri features, with iOS 26.4 as the expected vehicle. However, Gurman also noted in February that internal testing had hit snags, with some features potentially shifting to iOS 26.5 in May or later in the year. Apple stock dropped roughly 5 percent the day that report was published.

Apple’s public position, confirmed to CNBC after the Bloomberg delay report, is that the revamped Siri is still coming in 2026. The company has not committed to a specific iOS version number publicly. Its January joint statement said only “this year.” Based on the reporting so far, the timeline looks like this:

- Personal context, on-screen awareness, and per-app controls: targeting iOS 26.4 (spring), possible slip to iOS 26.5

- Conversation memory and proactive suggestions: expected at WWDC in June, likely shipping with iOS 27 in September

- Apple’s firm public commitment: all features arrive at some point in 2026

The pattern here matters. Apple has moved this timeline multiple times since WWDC 2024. The features are real and confirmed by multiple credible reporters. The specific dates have proven harder to hold than Apple initially suggested.

The Privacy Architecture: What Apple Confirmed and What It Hasn’t

Both Apple and Google confirmed in their joint statement that Gemini will run on Apple’s Private Cloud Compute infrastructure. This means the model processes data on Apple’s servers, not Google’s. Tim Cook reinforced this specifically on the earnings call. 9to5Mac described the arrangement as non-exclusive and free of lock-in: Apple can blend its own models with Google’s and is free to shift the balance as its internal capabilities develop.

What Apple has not published is a detailed technical document explaining the exact architecture of how the separation between Apple’s infrastructure and Google’s model is maintained. Apple’s statement that it “maintains industry-leading privacy standards” is a commitment rather than a specification. No independent security audit of the arrangement has been published.

Three things are worth keeping in mind on the privacy question:

- Apple has a strong financial and reputational incentive to make the privacy architecture work. A breach of that promise would be catastrophic for the brand in a way that a delayed feature release is not.

- The processing happens on Apple’s servers rather than Google’s, which is a meaningful structural difference from simply sending your data to Google directly.

- For users who chose iPhone specifically to minimize their data exposure to Google’s ecosystem, the full technical picture needs to be published before that concern can be evaluated properly. It hasn’t been yet.

What This Deal Actually Says About Apple’s Position in AI

The Financial Times quoted a former Apple executive describing the Google deal as “a necessary byproduct of Apple’s decision not to go big on its AI investments like its competitors.” The numbers give that quote context. Apple spent approximately $12.7 billion on capital expenditure in fiscal 2025. Google is projected to spend around $90 billion on AI infrastructure in 2026 alone. These are different scales of commitment to the underlying infrastructure.

Apple’s counterargument, visible in how it structured the deal, is that controlling the interface, the privacy layer, and the user relationship matters more than owning the foundation model. 9to5Mac’s reporting described the arrangement as leaving Apple free to shift toward its own models over time without being locked in. That flexibility is presumably the point: use Google’s infrastructure as a bridge while continuing to develop internal capabilities.

Bloomberg reported that the deal faced real internal resistance. Siri executive Mike Rockwell reportedly told colleagues in an internal meeting that earlier Bloomberg reporting about Apple considering outside AI models was “BS.” He was wrong about that, but the pushback signals how significant the shift was for a company that built its identity on vertical integration. The practical pressure of shipping something real after two years of missed commitments ultimately outweighed the philosophical position.

Apple also made a notable hire before the Google deal became public: Amar Subramanya, a lead engineer on Google’s Gemini project, joined Apple in 2025. Whether that hire was part of negotiating the partnership or a parallel move to build internal Gemini expertise is not confirmed. Either way, Apple now has at least one senior engineer who helped build the model it is now depending on.

Final Verdict

The Apple-Google Gemini partnership is confirmed, substantial, and represents the most significant structural change to Siri since its launch in 2011. The features being built on top of it are real, well-reported, and meaningfully different from what current Siri can do. The specific timing of when each feature ships has slipped before and could slip again.

If you have an iPhone 15 Pro, iPhone 16, or any device with Apple Intelligence support, something genuinely different is coming to Siri in 2026. The original WWDC 2024 demo of Siri cross-referencing a family member’s flight details across Mail and Messages is the clearest public illustration of the capability gap being closed. That demo is now backed by a confirmed infrastructure deal and multiple credible reports about features in testing.

If you bought an iPhone 16 in 2024 based on the ads Apple has since pulled, you have been waiting for roughly a year and a half. The features are closer than they have ever been. Whether iOS 26.4 this spring is the moment or whether parts of it slip to iOS 27 in September, the realistic expectation is that 2026 is the year this actually ships in some meaningful form. WWDC in June will be the clearest indicator of where things stand.

Which feature on that list are you actually waiting on? Personal context, on-screen awareness, or conversation memory? Drop it in the comments. The answer probably varies a lot depending on how you actually use Siri day to day.

Apple built its reputation on making everything itself. The Gemini deal is the clearest sign yet of what that philosophy costs when the rest of the industry moves faster.

Whether 2026 is when Siri catches up is the most important product question Apple is facing right now.

Sources for this article: Bloomberg (Mark Gurman), The Information, Financial Times, 9to5Mac, MacRumors, and Apple’s official statements. Every specific claim is attributed to a named publication or primary source.
