Table of Contents

- DevOps Grew Up. Here Is What That Actually Looks Like.

- Serverless in 2026: The “It Depends” Answer Finally Has Clarity

- GitOps: Why Git Became the Most Important Ops Tool Nobody Called an Ops Tool

- FinOps: The Trend That Exists Because Cloud Bills Got Embarrassing

- Platform Engineering: DevOps at Scale Without Burning Out Your Best People

- How These Four Things Actually Connect to Each Other

- Side-by-Side: What Each One Solves and What It Does Not

- Where to Start If You Are New to Any of This

- Where to Put Your Energy in 2026

DevOps Grew Up. Here Is What That Actually Looks Like.

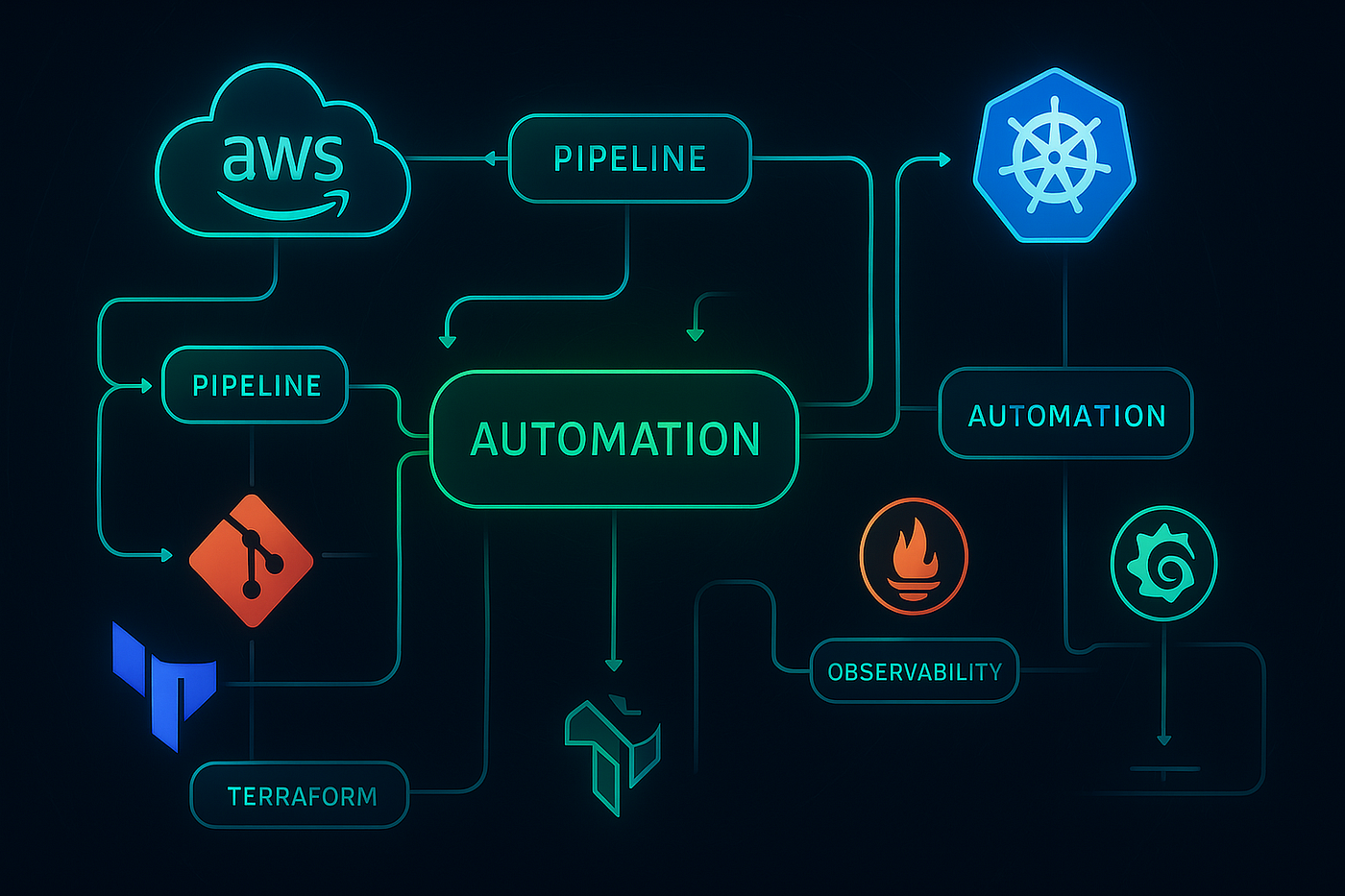

There is a version of DevOps that most people learned about five years ago. CI/CD pipelines, infrastructure as code, Docker containers, maybe Kubernetes if you were ambitious. That was the first era. It was about automating the manual steps that were slowing teams down. Deploy on Friday without dreading Monday. Stop treating servers like pets. Get code to production faster.

That era mostly worked. And then it created a new set of problems that nobody fully anticipated, because that tends to be how engineering goes.

Teams that went all-in on cloud infrastructure found their bills growing in ways that were hard to explain to finance. Companies that scaled to hundreds of microservices found that every team had a slightly different way of deploying things, and onboarding someone new meant learning twenty slightly incompatible systems. Kubernetes clusters drifted from their intended state in ways that were annoying to debug and occasionally catastrophic. The tooling that was supposed to reduce operational burden quietly created new operational burden of a different kind.

The four trends worth understanding in 2026 are not new concepts dreamed up by analyst firms looking for something to put in a quadrant. They are responses to real problems that showed up when the first era of DevOps scaled past its original assumptions. Serverless, GitOps, FinOps, and platform engineering each address a specific failure mode that became visible once enough organizations had been running cloud-native infrastructure for a few years.

Understanding them as a connected set rather than four separate things to check off a list is how you actually get value from any of them.

Serverless in 2026: The “It Depends” Answer Finally Has Clarity

Serverless had an awkward adolescence. The pitch was simple: stop managing servers entirely, pay only for the compute you actually use, and let the cloud provider handle scaling. The reality for a lot of early adopters was more complicated. Cold starts made latency unpredictable. Debugging a Lambda function felt like shouting into a void compared to SSH-ing into a server. The execution model forced architectural decisions that did not always translate cleanly from existing code. A lot of teams tried it, hit friction, and went back to containers with a note in the retrospective about revisiting later.

By 2026 most of those rough edges are genuinely smoother. Cold starts are largely addressed with provisioned concurrency on AWS and comparable features from Azure and Google. The ecosystem around serverless observability has matured to the point where tools like Lumigo, Powertools for Lambda, and OpenTelemetry integrations give you reasonable visibility into what is happening without building custom instrumentation from scratch. The execution time limits that originally made serverless feel awkward for anything beyond short API calls have been extended across providers; AWS Lambda, for example, allows up to 15 minutes per invocation.

More importantly, the community has developed clearer intuitions about when serverless makes sense and when it does not. Event-driven processing, APIs with unpredictable traffic patterns, scheduled jobs, data transformation pipelines, webhook handlers: these are the workloads where serverless delivers on the original cost and ops reduction promise. Long-running stateful processes, latency-sensitive applications where cold start variance is unacceptable, and anything that needs persistent local storage are workloads where containers or VMs are still the right answer.

The “it depends” answer to “should we use serverless” has not gone away. What has changed is that the factors it depends on are better understood and the tooling gaps that made it frustrating have mostly been addressed. Teams that made the wrong call on serverless in 2020 because the ecosystem was immature have good reason to reconsider with current tooling in 2026.

One thing I would flag that does not get enough attention: serverless changes your cost model in ways that can surprise you in both directions. It is genuinely cheaper for bursty, low-to-moderate traffic workloads. It can be significantly more expensive than a well-sized container for high-volume steady-state processing. Run the numbers on your specific traffic pattern before assuming serverless saves money. The free tier numbers in the documentation are for getting started, not for production estimates.
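To make "run the numbers" concrete, here is a minimal sketch of that comparison. The prices in `ServerlessPricing` and the container hourly rate in the usage lines are placeholder assumptions, not quoted provider rates, and the function names are made up for illustration. Substitute the current figures for your provider and region before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerlessPricing:
    # Placeholder assumptions; look up your provider's current rates.
    price_per_million_requests: float = 0.20
    price_per_gb_second: float = 0.0000166667


def cost_per_request(avg_duration_ms, memory_gb, pricing):
    """Request fee plus compute fee for a single invocation."""
    request_fee = pricing.price_per_million_requests / 1_000_000
    compute_fee = (avg_duration_ms / 1000) * memory_gb * pricing.price_per_gb_second
    return request_fee + compute_fee


def serverless_monthly_cost(requests_per_month, avg_duration_ms, memory_gb,
                            pricing=ServerlessPricing()):
    """Monthly serverless bill for a given traffic volume (free tier ignored)."""
    return requests_per_month * cost_per_request(avg_duration_ms, memory_gb, pricing)


def container_monthly_cost(instance_count, hourly_price, hours_per_month=730):
    """Monthly bill for always-on containers, regardless of traffic."""
    return instance_count * hourly_price * hours_per_month


def break_even_requests(avg_duration_ms, memory_gb, container_cost,
                        pricing=ServerlessPricing()):
    """Monthly request volume above which the containers become cheaper."""
    return container_cost / cost_per_request(avg_duration_ms, memory_gb, pricing)


if __name__ == "__main__":
    # Hypothetical workload: 100 ms average duration, 512 MB memory,
    # compared against two containers at an assumed $0.05 per hour each.
    containers = container_monthly_cost(instance_count=2, hourly_price=0.05)
    threshold = break_even_requests(100, 0.5, containers)
    print(f"containers: ${containers:.2f}/month")
    print(f"break-even: {threshold:,.0f} requests/month")
```

The useful output is the break-even volume, not either bill on its own. If your steady-state traffic sits well above it, the container is probably the cheaper option; if traffic is bursty and usually far below it, serverless probably is.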

GitOps: Why Git Became the Most Important Ops Tool Nobody Called an Ops Tool

GitOps is one of those ideas that sounds almost too simple when you first hear it. Everything about your system state, your application configs, your infrastructure definitions, your policies, lives in Git. A tool watches that repository and continuously reconciles the actual state of your systems to match what is committed. That is more or less the whole concept.

The reason it turns out to be powerful is not the tooling. It is the constraint. When Git is your single source of truth for system state, several things become true simultaneously that were previously hard to achieve at the same time. Every change has an author, a timestamp, a description, and a record of who reviewed it. Rolling back a bad deployment becomes a git revert rather than a frantic SSH session and a lot of manual cleanup. Drift, the phenomenon where your running infrastructure gradually diverges from your documented desired state through manual changes that nobody recorded, gets caught automatically by the reconciliation loop rather than discovered during an incident.
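The reconciliation idea is easier to see in code than in prose. What follows is a deliberately simplified sketch, not how Argo CD or Flux are implemented: desired and actual state are plain dictionaries, and `diff_state` and `reconcile` are hypothetical names. Real tools compare Kubernetes manifests and handle ordering, health checks, and much more.

```python
def diff_state(desired: dict, actual: dict) -> dict:
    """Compare what Git says should exist with what is actually running."""
    return {
        # In Git but not running yet.
        "create": {k: v for k, v in desired.items() if k not in actual},
        # Running, but with a spec that differs from Git (drift).
        "update": {k: v for k, v in desired.items()
                   if k in actual and actual[k] != v},
        # Running but absent from Git (for example, created by hand).
        "delete": [k for k in actual if k not in desired],
    }


def reconcile(desired: dict, actual: dict):
    """Return the state after one reconciliation pass, plus the plan applied."""
    plan = diff_state(desired, actual)
    converged = dict(actual)
    for name, spec in {**plan["create"], **plan["update"]}.items():
        converged[name] = spec
    for name in plan["delete"]:
        del converged[name]
    return converged, plan


if __name__ == "__main__":
    # Desired state as committed to Git (hypothetical services).
    desired = {"web": {"replicas": 3}, "worker": {"replicas": 1}}
    # Actual state: someone scaled web by hand and left a debug pod behind.
    actual = {"web": {"replicas": 5}, "debug-pod": {"replicas": 1}}

    state, plan = reconcile(desired, actual)
    print(plan)
    print(state == desired)
```

The manual scale-up and the leftover debug pod both show up in the plan without anyone having to remember they happened. That is the drift detection described above, and a rollback is just the same loop run against an older commit.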

The CNCF’s surveys show GitOps has hit genuine maturity in organizations that are serious about Kubernetes. It went from an interesting experiment to a marker of operational maturity in about three years. That speed of adoption is partly because Argo CD and Flux, the two main GitOps tools, are stable and well-documented. But it is mostly because the problems GitOps solves (drift, auditability, a consistent deployment process across environments) are problems that organizations running production Kubernetes clusters feel acutely.

One thing worth saying directly: GitOps is not magic and it does have a learning curve that gets underestimated. The reconciliation model means you have to think differently about how changes propagate. There are situations, particularly in disaster recovery scenarios, where the pull-based model of GitOps requires more thought than imperative deployments. Teams that adopt it without investing time in understanding the mental model sometimes find themselves fighting the tool.

That said, the organizations I have seen struggle most with GitOps adoption are the ones that tried to retrofit it onto existing chaotic deployment processes rather than designing the Git-first workflow from the beginning. If your current deployment process has a lot of undocumented manual steps, the right move before adopting GitOps is to document those steps, not to immediately point Argo CD at a repo and hope for the best.

FinOps: The Trend That Exists Because Cloud Bills Got Embarrassing

Here is a version of a conversation that has happened at a lot of companies in the last three years. The engineering team does a major architecture overhaul, moves workloads to cloud-native services, deploys AI model serving infrastructure, and generally does the right things technically. The finance team looks at the AWS bill six months later and schedules an emergency meeting. Nobody on the engineering side had been watching costs closely enough, because cost visibility was someone else’s job, or because the billing dashboards were too confusing, or because the free tier experiment became a production workload without anyone noticing the spend increase.

FinOps is the organized response to that problem. The FinOps Foundation, which publishes the most widely used framework for cloud financial management, describes the practice as bringing together finance, engineering, and business teams to operate on a shared model of cloud costs. In practice what that looks like varies a lot by company size and maturity, but the core shift is treating cost as a first-class engineering concern rather than someone else’s department.

What is specifically different about FinOps in 2026 compared to earlier approaches to cloud cost control is the integration with engineering workflows. The previous approach was a monthly finance report showing which teams spent what, delivered after the fact when nothing could easily be changed. Current FinOps tooling, from platforms like CloudHealth, Apptio, or the native cost management features in AWS and Azure, can show cost impact at the pull request level, send real-time alerts when spending patterns change unexpectedly, and recommend rightsizing changes automatically based on actual utilization data.
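The alerting piece is less mysterious than it sounds. Here is a rough sketch of the simplest possible version: flag any day whose spend is well above the trailing average. The `window` and `threshold` values are arbitrary assumptions, the function name is hypothetical, and commercial tools use far more sophisticated models than this.

```python
from statistics import mean


def spend_alerts(daily_spend, window=7, threshold=1.5):
    """Flag days whose spend exceeds `threshold` times the trailing average.

    daily_spend: list of daily totals, oldest first.
    Returns a list of (day_index, spend, baseline) tuples.
    """
    alerts = []
    for i in range(window, len(daily_spend)):
        # Baseline is the average of the previous `window` days.
        baseline = mean(daily_spend[i - window:i])
        if baseline > 0 and daily_spend[i] > threshold * baseline:
            alerts.append((i, daily_spend[i], baseline))
    return alerts


if __name__ == "__main__":
    # Hypothetical daily totals: a steady week, then a spike on day 8.
    history = [100, 100, 100, 100, 100, 100, 100, 105, 240, 110]
    for day, spend, baseline in spend_alerts(history):
        print(f"day {day}: spent {spend}, trailing average {baseline:.2f}")
```

Even something this crude catches the "free tier experiment became a production workload" scenario within a day rather than at the end of the month.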

AI workloads specifically have added urgency to this. GPU instances are expensive in a way that CPU instances are not, and the cost profile of running inference at scale can balloon quickly if nobody is monitoring it. Teams that deployed LLM-based features in 2024 and 2025 without putting FinOps tooling in place first have sometimes found themselves with bills that were genuinely surprising.

The cultural component of FinOps gets understated in most technical writeups because it is harder to implement than adding a dashboard. Engineers have to actually care about and act on cost data, not just have access to it. That requires leadership to communicate that cost is a legitimate engineering metric alongside reliability and performance, not just a finance problem that engineering gets blamed for. The organizations that have made FinOps work are ones where that message came from engineering leadership, not from finance sending angry emails after the fact.

Platform Engineering: DevOps at Scale Without Burning Out Your Best People

Platform engineering is what happens when an organization grows large enough that the original DevOps model of “everyone owns everything end to end” starts producing inconsistency at scale. The original vision of DevOps, where developers own their pipelines, their infrastructure, their monitoring, their on-call rotations, works well at small scale. At a company with dozens of product teams, it produces a situation where every team has its own slightly different CI/CD setup, its own slightly different approach to secrets management, its own slightly different logging configuration, and the senior engineers who understand all of this spend a large fraction of their time answering the same questions repeatedly instead of building things.

Platform engineering addresses this by treating internal infrastructure as a product with internal customers. A dedicated platform team builds the golden paths: the pre-configured, opinionated, well-documented ways to deploy a service, set up monitoring, manage secrets, run database migrations, and handle the other tasks that every product team needs to do but does not want to reinvent from scratch. Product teams get self-service tools and clear documentation. The platform team owns the shared infrastructure and iterates on it like a product, gathering feedback from internal users.
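One way to picture a golden path is as a function: the product team supplies a service name and a team, and gets back a complete, opinionated configuration. The sketch below is purely illustrative. The default values, the `platform-vault` backend name, and the list of overridable settings are all invented for the example, not taken from any real platform.

```python
from copy import deepcopy
from typing import Optional

# Hypothetical platform defaults; a real platform team would define its own.
GOLDEN_PATH_DEFAULTS = {
    "replicas": 2,
    "resources": {"cpu": "250m", "memory": "256Mi"},
    "logging": {"format": "json"},
    "secrets_backend": "platform-vault",
}

# In this sketch, only these settings are open to product teams.
OVERRIDABLE = {"replicas", "resources"}


def render_service_config(name: str, team: str,
                          overrides: Optional[dict] = None) -> dict:
    """Build a service config from platform defaults plus allowed overrides."""
    overrides = overrides or {}
    not_allowed = set(overrides) - OVERRIDABLE
    if not_allowed:
        raise ValueError(
            f"not overridable on the golden path: {sorted(not_allowed)}"
        )
    config = deepcopy(GOLDEN_PATH_DEFAULTS)
    config.update(overrides)
    config["name"] = name
    # Every service is labeled with its owning team.
    config["labels"] = {"team": team}
    return config


if __name__ == "__main__":
    print(render_service_config("checkout", "payments", {"replicas": 4}))
```

The design choice worth noticing is the short override list. A golden path that lets every team change everything is just a template nobody maintains; one that allows nothing to change gets bypassed. Where that line sits is a product decision the platform team makes with its internal users.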

Backstage, the open-source developer portal originally built by Spotify and donated to the CNCF, has become the most common foundation for internal developer platforms. It provides a unified interface for service catalogs, documentation, deployment status, and plugin integrations with the rest of your toolchain. It is not a lightweight thing to set up and maintain, which is a real consideration, but for organizations above a certain size the productivity return on investment is measurable.

The honest limitation of platform engineering that often gets glossed over: it only works if the platform team is adequately resourced and genuinely treats internal developers as customers. An underfunded platform team that is also responsible for production incidents and is not given time to iterate on developer experience produces a platform that nobody wants to use, and then you have the worst of both worlds: the overhead of maintaining the platform without the productivity benefit. The platform team burnout problem is real and shows up in organizations that added platform engineering as a label to an existing overloaded ops team without adding headcount or changing priorities.

How These Four Things Actually Connect to Each Other

These four trends are often presented as separate items on a list of things to adopt, and that framing misses something important about how they relate. They are not four independent things to check off. They are four facets of the same shift in how engineering organizations think about operations at scale.

GitOps is the foundation that everything else builds on cleanly. When your infrastructure and application configuration live in Git and get applied by a reconciliation loop, it becomes natural to add FinOps cost annotations to your infrastructure definitions so cost impact is visible at review time. It becomes natural to build your platform team’s golden paths as Git-managed templates rather than verbal guidelines. Serverless functions declared in code and deployed through GitOps pipelines get the same auditability and rollback capability as containerized workloads.

FinOps works better when deployments are GitOps-driven because you have a clear record of which change caused a spending spike. Platform engineering is the thing that makes FinOps tooling accessible to product teams who do not want to learn cloud billing interfaces. Serverless adoption fits naturally into a platform engineering model because the platform team can provide opinionated templates for common serverless patterns, preventing the proliferation of different Lambda architectures across teams.

You do not need to adopt all four simultaneously to see value. But understanding the connections between them changes how you sequence adoption. Starting with GitOps and building outward is usually the approach that creates the cleanest foundation for the others.

Side-by-Side: What Each One Solves and What It Does Not

| Trend | The Problem It Actually Solves | What It Does Not Fix | Realistic Timeline to Value | Where Most Teams Go Wrong |
|---|---|---|---|---|
| Serverless | Ops overhead and cost unpredictability on bursty, event-driven workloads | High-volume steady-state compute, latency-sensitive services, anything needing persistent local state | Days to first working deployment, weeks to production confidence | Assuming it is always cheaper than containers without running actual cost comparisons |
| GitOps | Config drift, deployment inconsistency, lack of auditability across environments | Poorly designed architecture, badly written code, teams that do not review changes properly | Weeks to initial setup, months to full organizational adoption | Retrofitting it onto undocumented chaotic existing processes instead of designing Git-first from the start |
| FinOps | Cloud spending surprises, lack of cost visibility at team and service level, waste from over-provisioned resources | Architectural decisions that are fundamentally expensive, negotiating better cloud contracts (that is procurement) | Weeks to initial visibility, months to cultural change that actually affects behavior | Treating it as a finance reporting exercise rather than an engineering practice change |
| Platform Engineering | Inconsistent tooling across teams, repeated reinvention of the same infrastructure patterns, senior engineers doing repetitive support work | Bad product decisions, team communication problems, poor engineering fundamentals at the individual level | Months to a useful initial platform, a year or more to broad internal adoption | Underresourcing the platform team and expecting them to also cover production incidents |

Where to Start If You Are New to Any of This

The right starting point depends heavily on where you are and what specific pain you are feeling, not on a ranking of which trend is theoretically most important.

If deployments at your organization are inconsistent across environments, if you regularly deal with “works in staging, broken in prod” situations, or if nobody can easily answer the question “what changed in the last deployment,” GitOps should be your first focus. The investment required to set up Argo CD or Flux on a Kubernetes cluster is lower than most teams expect, and the benefit of having Git be the authoritative record of system state shows up the first time you need to debug an incident or roll back a bad release.

For getting started practically: set up a local Kubernetes cluster with minikube or kind, install Argo CD using the official documentation, and try deploying a simple application from a Git repository. The experience of watching Argo CD detect a change in your repo and apply it to the cluster, then watching it detect and correct manual drift when you change something directly, is a better explanation of why GitOps matters than any amount of reading about it.

If cloud cost surprises are your most acute problem, enable cost allocation tags on every resource in your cloud account first. This is the foundation that makes FinOps analysis possible and it has zero architectural impact. Then set up billing alerts at thresholds that would catch unexpected spending before the end of the month. Then look at your last three months of bills and find the five services consuming the most spend. Usually two or three of those have obvious rightsizing or cleanup opportunities. Those quick wins tend to pay for the time investment of building the FinOps practice.
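The "find the five services consuming the most spend" step is a small aggregation once you have billing data exported. Here is a sketch that assumes each line item is a dictionary with `service`, `cost`, and `tags` keys. That shape is an assumption made for the example; real billing exports differ by provider and need mapping into something like it first.

```python
from collections import defaultdict


def top_services_by_spend(line_items, n=5):
    """Total cost per service, highest first, limited to the top n."""
    totals = defaultdict(float)
    for item in line_items:
        totals[item["service"]] += item["cost"]
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]


def untagged_cost(line_items, required_tag="team"):
    """Spend that cannot be attributed because the allocation tag is missing."""
    return sum(
        item["cost"]
        for item in line_items
        if required_tag not in item.get("tags", {})
    )


if __name__ == "__main__":
    # Hypothetical line items in the assumed shape.
    items = [
        {"service": "compute", "cost": 120.0, "tags": {"team": "payments"}},
        {"service": "storage", "cost": 40.0, "tags": {"team": "search"}},
        {"service": "compute", "cost": 80.0, "tags": {}},
        {"service": "database", "cost": 10.0},
    ]
    print(top_services_by_spend(items, n=2))
    print(untagged_cost(items))
```

The second function is why the tagging step comes first: until the untagged number is close to zero, any per-team breakdown is partly guesswork.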

For serverless, the fastest meaningful learning comes from building something real rather than following a tutorial. Deploy an actual API or data processing job you need rather than a hello world. The specific friction points you hit (cold start behavior, logging setup, local testing workflow) are much easier to evaluate when they are affecting something you care about rather than a demo app.

Platform engineering is the one where I would caution against starting too early. If you build an internal developer platform before your organization has enough teams for inconsistency between them to be a real problem, you will probably build something nobody uses. The right trigger is usually when you have five or more product teams and people are visibly spending time solving the same infrastructure problems in slightly different ways. At that point the ROI calculation for a platform team changes meaningfully.

Where to Put Your Energy in 2026

The most important shift in DevOps thinking over the last few years is not any specific tool or methodology. It is the recognition that operational concerns (cost, security, reliability, developer experience) are not afterthoughts to be handled by a separate ops team after software is built. They are design constraints that shape architecture decisions from the beginning.

GitOps and platform engineering are both expressions of that shift applied to how software gets deployed and managed. FinOps is that shift applied to how cost gets considered. Serverless is that shift applied to how compute gets provisioned. They all point in the same direction: operations becomes something that is built into how the team works rather than bolted on after the fact.

For someone at an early stage in their career, the most useful thing you can do is build one project end-to-end with GitOps as the deployment mechanism and serverless for at least one component. Not because you will necessarily use those specific tools in your first job, but because the mental model of infrastructure as code with Git as the source of truth is the foundation that everything else in modern cloud operations builds on. Getting that model in your head through hands-on experience is more valuable than any certification.

For someone already in a team dealing with one of the specific problems described above: the shortest path to solving it is usually starting with a single service or a single team rather than trying to transform how the whole organization works simultaneously. GitOps for one critical service, FinOps visibility for one expensive team, platform engineering for one golden path that multiple teams need. Demonstrating that it works at small scale is almost always the prerequisite for convincing the rest of the organization to adopt it.

What are you currently dealing with at your organization or in your own projects? Specifically curious whether anyone is running GitOps in production and has thoughts on Argo CD versus Flux, or whether anyone has done a FinOps audit that produced surprising results. Those specifics in the comments are more useful to other readers than general takes.

References (March 2026):

CNCF Annual Survey 2025 (GitOps maturity, Kubernetes adoption): cncf.io/reports

FinOps Foundation State of FinOps 2026: data.finops.org

Argo CD documentation and architecture overview: argo-cd.readthedocs.io

Backstage (CNCF): backstage.io

AWS Lambda cold start documentation and provisioned concurrency: aws.amazon.com/lambda

Platform Engineering community and resources: platformengineering.org

The teams shipping the most reliable software in 2026 are not the ones with the most tools.

They are the ones who figured out which problems they were actually trying to solve.
