Table of Contents

- This Is Not the Ethernet You Learned About in Class

- The Numbers That Explain Why Everyone Is Suddenly Paying Attention

- The Problem Ethernet Was Not Built to Solve

- How a Group of Competitors Agreed to Build Something Together

- What UEC 1.0 Actually Specifies

- Ethernet vs NVLink vs InfiniBand: The Real Comparison

- Broadcom’s Thor Ultra: The First 800G Ethernet NIC Built for AI

- The 100,000-GPU Test: How Ethernet Already Proved Itself at Scale

- What Is Coming in Late 2026: 1.6 Terabit Ethernet

- Why This Matters Beyond the Data Center

This Is Not the Ethernet You Learned About in Class

Ethernet is more than fifty years old. Bob Metcalfe invented it at Xerox PARC in 1973. It has been through more generational upgrades than almost any other networking technology in existence: 10 Mbps to 100 Mbps to 1 Gbps to 10 Gbps to 100 Gbps, each time staying close enough to the original standard that much of the existing infrastructure and software could keep working. That backward compatibility is part of why it never died. Every time something faster came along and threatened to replace it, Ethernet just upgraded and survived.

In 2026 Ethernet is doing something it has not done before: it is going on offense. It is not just surviving competition from proprietary interconnects but actively taking market share from them, including inside AI data centers, where Nvidia’s lock-in is most strategically important. The Ethernet switch market in data centers grew 62 percent year over year in Q3 2025 according to IDC, driven almost entirely by AI workload demand. 800 GbE switches specifically surged 91.6 percent from the previous quarter. That is not incremental growth. That is a technology reorientation happening in real time.

Understanding why requires understanding what changed in Ethernet’s design, who changed it, and why the organizations that have the most to lose from Ethernet winning, specifically Nvidia, ended up joining the effort anyway.

The Numbers That Explain Why Everyone Is Suddenly Paying Attention

The data center networking market was valued at approximately $46 billion in 2025. BCC Research projects it will reach $103 billion by 2030, a compound annual growth rate of nearly 18 percent. That growth is almost entirely driven by AI infrastructure buildout. Training a frontier AI model requires clusters of tens of thousands of GPUs or accelerators that need to communicate with each other constantly and at high bandwidth. The networking fabric connecting those GPUs is not a peripheral concern. It is a critical performance bottleneck that can determine whether a training job completes in three weeks or four, which at the cost of GPU-hours translates directly into tens of millions of dollars.
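
The growth rate quoted above can be checked with a few lines of arithmetic. This is a minimal sketch that assumes the forecast spans five compounding years (2025 to 2030); the function name `cagr` is ours, not something from BCC Research.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.175 means 17.5% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: about $46B in 2025, projected $103B by 2030.
rate = cagr(46.0, 103.0, years=5)
print(f"Implied CAGR: {rate:.1%}")  # Implied CAGR: 17.5%
```

The result lands just under 18 percent, which matches the "nearly 18 percent" figure.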

IDC’s Q3 2025 Ethernet switch numbers make the direction of the market explicit. The 62 percent year-over-year growth in the data center portion of the market tracks closely with the acceleration of AI infrastructure investment. The 91.6 percent sequential surge came in 800 GbE switches, the highest-speed tier that customers were deploying at scale. Revenue for 200/400 GbE switches was up 97.8 percent year over year in the same period. Every speed tier above commodity enterprise Ethernet was growing at rates that infrastructure analysts described as extraordinary even by AI boom standards.

The market signal is clear: hyperscalers, neoclouds, and enterprises building AI infrastructure are choosing Ethernet as the interconnect fabric for scale-out networking, the rack-to-rack and cluster-to-cluster connectivity that ties large AI systems together. The question worth digging into is why, given that Ethernet was not originally designed for this and proprietary alternatives exist that were built specifically for it.

The growth in numbers: Data center Ethernet switch market up 62% year-over-year in Q3 2025 (IDC). 800 GbE switches up 91.6% sequentially. 200/400 GbE revenue up 97.8% year-over-year. Data center networking market: $46 billion in 2025, projected $103 billion by 2030 (BCC Research). Apart from that 2030 forecast, these are not projections about future AI demand. They are shipment and revenue numbers from hardware that already shipped.

The Problem Ethernet Was Not Built to Solve

Traditional Ethernet was designed for general-purpose networking where traffic patterns are unpredictable, packet loss is acceptable, and the network needs to handle thousands of different applications with different requirements simultaneously. TCP/IP, the protocol stack built on top of Ethernet, handles packet loss by detecting it, requesting retransmission, and slowing down the sender to avoid congesting the network further. That works well for web traffic, file transfers, email, and most enterprise applications where the latency of retransmission, typically measured in tens of milliseconds, is invisible to the user.

AI training workloads are fundamentally different. A training run across thousands of GPUs involves collective operations where every GPU in the cluster needs to exchange gradient updates with every other GPU in a coordinated synchronization step. This process is called AllReduce, and in a typical data-parallel setup it happens at every training step, which means many thousands of times during a training run. The entire cluster effectively pauses and waits for this exchange to complete before proceeding to the next batch. If the network introduces latency or packet loss that causes retransmissions, every GPU sits idle waiting for the stragglers. In a cluster of 10,000 GPUs, where each GPU-hour typically costs a few dollars and the cluster as a whole costs tens of thousands of dollars per hour, idle time during training is extraordinarily expensive.
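
To make the straggler effect concrete, here is a minimal sketch of the arithmetic. It assumes a fully synchronous step in which nobody proceeds until the slowest GPU finishes its exchange; the timings and step count are made-up illustration values, not measurements from any real cluster.

```python
def step_time_ms(per_gpu_ms: list[float]) -> float:
    """A synchronous collective finishes only when the slowest participant does."""
    return max(per_gpu_ms)


def idle_gpu_hours(per_gpu_ms: list[float], steps: int) -> float:
    """GPU-hours spent waiting for the slowest GPU, summed over all steps."""
    slowest = step_time_ms(per_gpu_ms)
    wasted_ms_per_step = sum(slowest - t for t in per_gpu_ms)
    return wasted_ms_per_step * steps / 3_600_000  # ms -> hours


# Hypothetical cluster: 9,999 GPUs finish their exchange in 10 ms, while one
# GPU needed a retransmission and took 30 ms.
timings = [10.0] * 9_999 + [30.0]
print(step_time_ms(timings))                 # 30.0: one slow GPU sets the pace
print(idle_gpu_hours(timings, steps=1_000))  # about 55.55 GPU-hours of waiting
```

One delayed GPU out of ten thousand triples the step time for everyone, which is why tail latency in the fabric matters more than average latency.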

InfiniBand was the traditional answer to this problem. It provides lossless, low-latency fabric connectivity specifically designed for high-performance computing workloads. RDMA over InfiniBand, which allows GPUs to directly access each other’s memory without involving the CPU, minimizes latency and CPU overhead during collective operations. The performance advantage was real, which is why InfiniBand dominated HPC clusters for decades.

The catch is vendor lock-in. InfiniBand is primarily an Nvidia technology following its acquisition of Mellanox in 2020. Building an AI cluster on InfiniBand means buying Nvidia’s switches, Nvidia’s network adapters, and Nvidia’s management software. That is a comfortable position for Nvidia and an uncomfortable one for everyone else. Ethernet’s openness, the fact that hundreds of companies make interoperable Ethernet switches and NICs competing on price, is the reason the industry started looking seriously at making Ethernet good enough to replace InfiniBand rather than accepting permanent dependence on a single vendor.

How a Group of Competitors Agreed to Build Something Together

In July 2023, a group of companies that compete with each other across nearly every dimension of the networking and semiconductor markets sat down and agreed to build something collaboratively. AMD, Arista, Broadcom, Cisco, HPE, Intel, Meta, and Microsoft founded the Ultra Ethernet Consortium under the Linux Foundation. The explicit goal was to make Ethernet capable of matching or exceeding InfiniBand performance for AI and high-performance computing workloads.

The fact that these companies agreed to collaborate is itself worth understanding. AMD and Intel are direct CPU competitors. Broadcom and Intel both make network interface cards that compete with each other. Arista, Cisco, and Broadcom all sell Ethernet switching equipment into the same data center customers. Meta and Microsoft are hyperscalers that spend billions on the infrastructure these companies sell and have strong incentive to commoditize it. What they share is the interest in not having the AI networking market controlled by a single vendor.

Notably, Nvidia also eventually joined the UEC as a member. The company that sells InfiniBand joined the consortium working to make Ethernet good enough to compete with InfiniBand. That is either a hedge, a strategic intelligence operation, or a genuine acknowledgment that the industry was going to standardize on open Ethernet regardless of Nvidia’s preferences. Probably some combination of all three.

The consortium grew faster than anyone involved initially expected. By the end of 2024 it had over 120 member companies and more than 1,500 active participants, making it the fastest-growing project in the Linux Foundation’s history. The speed of growth reflects how broadly the industry wanted an alternative to proprietary AI networking.

What UEC 1.0 Actually Specifies

The UEC released its 1.0 specification in June 2025, updated to 1.0.1 in September 2025. The specification is 562 pages and covers the entire networking stack from physical layer to application interface. What it does at a conceptual level is worth understanding even if you will never read the full document.

The central design problem UEC had to solve is that traditional Ethernet assumes packets arrive in order. TCP/IP is built on this assumption. When packets arrive out of order, which happens whenever packets take different routes through a network, the receiver has to buffer and reorder them before passing them up the stack. That buffering adds latency and memory overhead. For AI training collectives where thousands of GPUs are exchanging data simultaneously, this overhead compounds into meaningful performance degradation.

Ultra Ethernet Transport, the new protocol layer at the core of UEC 1.0, is specifically designed for out-of-order packet delivery. It removes the assumption that packets need to arrive in sequence. Packets can take any available path through the network, arrive in whatever order the network delivers them, and the protocol handles the reordering efficiently at the hardware level. This allows the network to fully utilize all available paths simultaneously, a technique called packet-level multipathing, rather than dedicating a single path to each flow and leaving other paths idle.
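
The idea can be sketched in a few lines. This is a toy model, not the UET wire format: it assumes each packet carries the byte offset of its payload so the receiver can place it directly, and the function name, path delays, and round-robin spraying policy are all invented for illustration.

```python
import random


def spray_and_reassemble(message: bytes, mtu: int, num_paths: int, seed: int = 0):
    """Send `message` as packets over several paths and rebuild it at the receiver.

    Returns (rebuilt_message, arrival_order), where arrival_order lists the
    packet sequence numbers in the order the receiver saw them.
    """
    rng = random.Random(seed)
    # Each packet carries its byte offset, so it can be placed independently.
    packets = [(seq, off, message[off:off + mtu])
               for seq, off in enumerate(range(0, len(message), mtu))]

    # Spray packets round-robin across paths. Each path adds its own made-up
    # delay, so send order and arrival order can differ.
    path_delay = [rng.uniform(1.0, 10.0) for _ in range(num_paths)]
    arrivals = sorted(packets, key=lambda p: p[0] + path_delay[p[0] % num_paths])

    # Receiver: write each payload straight to its offset. No reorder queue.
    buffer = bytearray(len(message))
    for _, off, payload in arrivals:
        buffer[off:off + len(payload)] = payload
    return bytes(buffer), [seq for seq, _, _ in arrivals]


data = bytes(range(256)) * 16  # 4 KiB test message, split into 8 packets
rebuilt, order = spray_and_reassemble(data, mtu=512, num_paths=4)
print(order)            # arrival order of the 8 packets
print(rebuilt == data)  # True: placement by offset makes arrival order irrelevant
```

Because every packet is self-describing, the receiver never has to hold early arrivals while waiting for a late one, which is what lets the sender use every path at once.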

The congestion control model is also fundamentally different from traditional Ethernet. Rather than relying on a lossless network, which requires all switches to hold large buffers to prevent any packet from being dropped, UEC implements a receiver-driven model where receivers actively limit sender transmissions based on available capacity. J Metz, chair of the UEC steering committee, described this to Network World as “critical for AI workloads” because it allows construction of larger networks with better efficiency without requiring every switch in the fabric to maintain extensive buffer state.
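
A receiver-driven scheme is easier to picture with a toy simulation. The sketch below is a generic credit-granting model under our own assumptions (fixed receiver capacity per tick, round-robin fairness, one credit per packet); the class and function names are hypothetical and this is not the algorithm defined in the UEC specification.

```python
from collections import deque


class Receiver:
    """Hands out transmit credits so senders never exceed what it can absorb."""

    def __init__(self, capacity_per_tick: int):
        self.capacity_per_tick = capacity_per_tick

    def grant(self, pending: dict[str, int]) -> dict[str, int]:
        """Split this tick's capacity round-robin among senders with data queued."""
        grants = {sender: 0 for sender in pending}
        remaining = self.capacity_per_tick
        active = deque(s for s, n in pending.items() if n > 0)
        while remaining > 0 and active:
            sender = active.popleft()
            grants[sender] += 1
            remaining -= 1
            if grants[sender] < pending[sender]:
                active.append(sender)  # still has packets waiting
        return grants


def simulate(pending: dict[str, int], capacity_per_tick: int) -> int:
    """Return the number of ticks needed to drain all senders."""
    receiver = Receiver(capacity_per_tick)
    pending = dict(pending)
    ticks = 0
    while any(pending.values()):
        for sender, credits in receiver.grant(pending).items():
            pending[sender] -= credits  # senders transmit only what was granted
        ticks += 1
    return ticks


# Three senders converge on one receiver that can absorb 4 packets per tick.
print(simulate({"a": 6, "b": 2, "c": 4}, capacity_per_tick=4))  # 3
```

The point of the design is that the incast is resolved at the edge: the receiver's link is never offered more than it can take, so the switches in between do not need deep buffers to avoid drops.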

One design constraint UEC imposed on itself from the beginning deserves credit: the specification was written to work with existing Ethernet switches. You do not need to rip out your current switching infrastructure to start deploying Ultra Ethernet-capable NICs. The new transport protocol runs over standard Ethernet and IP, which means the installed base of data center switching can be retained while upgrading only the endpoints. That backward compatibility distinguishes Ultra Ethernet from proprietary alternatives that require specialized switch hardware from a single vendor.

Ethernet vs NVLink vs InfiniBand: The Real Comparison

These three interconnect technologies serve related but distinct purposes in AI data centers, and conflating them produces a lot of confused coverage. The distinction that matters is between scale-up networking and scale-out networking.

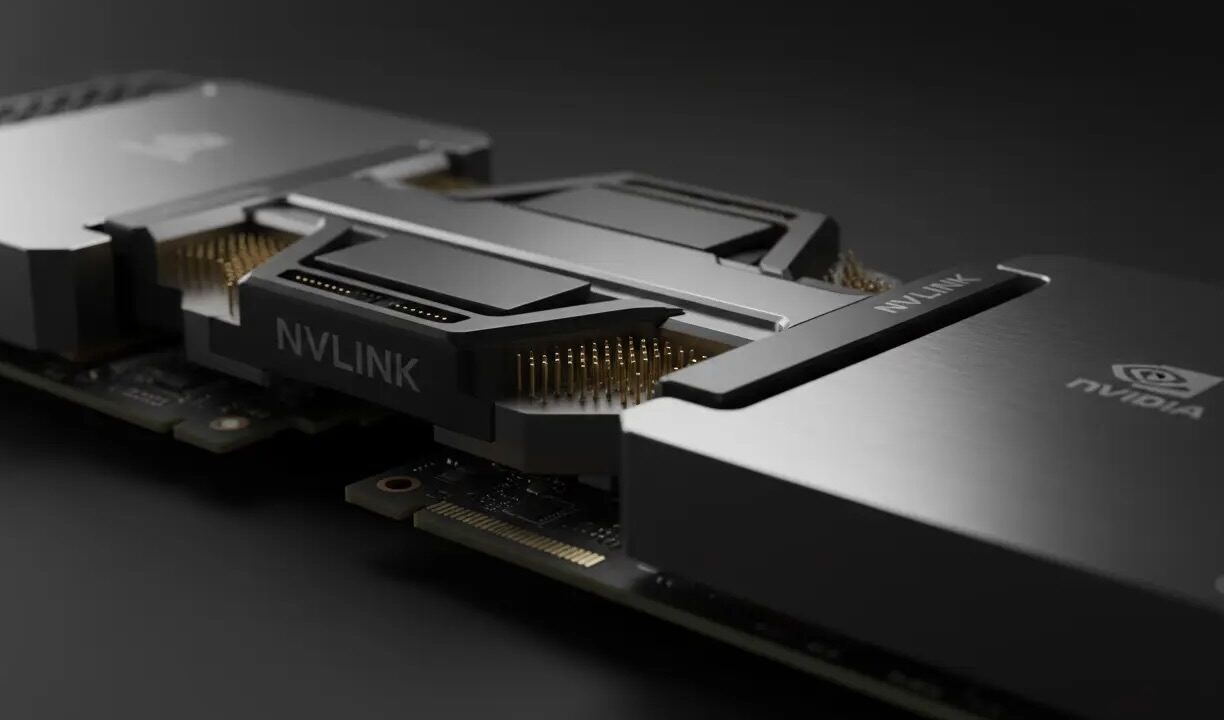

Scale-up networking connects GPUs within a single rack or system. This is where the most intensive collective operations happen, where GPUs need to access each other’s memory directly with the lowest possible latency. NVLink is Nvidia’s scale-up technology. It connects GPUs within a single NVLink domain, typically 72 to 256 GPUs, with extremely high bandwidth and very low latency. NVLink 6, which ships with the Vera Rubin platform, runs at 18 TB/s of bandwidth within a connected system. That performance is genuinely exceptional and represents years of Nvidia’s proprietary engineering investment. Ultra Ethernet does not directly compete with NVLink for within-rack scale-up connectivity at this tier.

Scale-out networking connects racks to each other, creating clusters of thousands or tens of thousands of GPUs that need to work together as a single training system. This is where Ethernet competes. The question for a hyperscaler building a 100,000-GPU cluster is what connects the 1,400 or so racks of 72 GPUs each into a single coherent fabric. InfiniBand and Ethernet are both answers to that question, and it is this scale-out tier where Ultra Ethernet is most directly relevant.

| Dimension | NVLink (Scale-Up) | InfiniBand (Scale-Out) | Ultra Ethernet (Scale-Out) |
|---|---|---|---|
| Primary use case | Within-rack GPU-to-GPU memory access | Rack-to-rack AI cluster fabric | Rack-to-rack AI cluster fabric |
| Vendor model | Nvidia proprietary, locked ecosystem | Primarily Nvidia (post-Mellanox), some alternatives | Open standard, 120+ vendors, multi-vendor NICs and switches |
| Bandwidth (current top tier) | 18 TB/s (NVLink 6, Vera Rubin) | Up to 400 Gbps per port (NDR) | 800 Gbps per port (Thor Ultra) |
| Works with existing switches? | No, requires NVLink Switch chips | No, requires InfiniBand switches | Yes, compatible with existing Ethernet fabric |
| Power consumption (NIC) | N/A (on-chip) | 125 to 150 W (BlueField 3 DPU) | ~50 W (Broadcom Thor Ultra) |
| Proven scale | Up to 576 GPUs per NVLink domain | Hundreds of thousands of nodes (HPC) | 100,000 GPU nodes (xAI Colossus on Spectrum-X) |
| Packet ordering model | In-order | In-order (lossless fabric required) | Out-of-order by design, no lossless fabric required |

Broadcom’s Thor Ultra: The First 800G Ethernet NIC Built for AI

In October 2025, Broadcom announced Thor Ultra, the industry’s first 800G network interface card fully compliant with the UEC 1.0 specification. The announcement mattered not just for the product itself but for what it represented: the specification had been published in June and a major semiconductor company had a fully compliant product sampling within four months. That turnaround time is fast for silicon development and suggests Broadcom had been working on this in parallel with the specification process.

Thor Ultra targets scale-out networking specifically. Broadcom was explicit about this in its technical documentation: “When you need to get out of that rack and you need to connect multiple racks together, you need to scale out. This is where this NIC gets used.” The NIC ships in two configurations, one with eight 100G lanes and one with four 200G lanes, both delivering 800G aggregate bandwidth through 16 lanes of PCIe Gen 6.

The power consumption difference compared to Nvidia’s equivalent product deserves specific attention. Thor Ultra consumes approximately 50 watts. Nvidia’s BlueField 3 DPU, the nearest competitive product, consumes 125 to 150 watts. That is a 2.5x to 3x power difference per NIC. In a data center deploying thousands of network interface cards, that power gap compounds into a meaningful operational cost difference. At current electricity costs for large data centers and at the scale of 100,000-GPU clusters, the cumulative power saving from choosing a more efficient NIC runs into millions of dollars per year. The reason for the difference is architectural: DPUs include ARM processor cores, large memory subsystems, and extensive acceleration for storage and security offload functions beyond just networking. Thor Ultra focuses exclusively on AI scale-out networking and does not carry that overhead.
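
The "millions of dollars per year" claim is easy to sanity-check. The sketch below uses the wattages quoted above; the NIC count (one per GPU in a 100,000-GPU cluster) and the electricity price of $0.08 per kWh are our assumptions, and cooling overhead is ignored, so treat the output as an order-of-magnitude estimate.

```python
HOURS_PER_YEAR = 24 * 365


def annual_power_cost_usd(nics: int, watts_per_nic: float, usd_per_kwh: float) -> float:
    """Yearly electricity cost of running `nics` adapters around the clock."""
    kwh_per_year = nics * watts_per_nic * HOURS_PER_YEAR / 1000
    return kwh_per_year * usd_per_kwh


NICS = 100_000      # assumption: one NIC per GPU
USD_PER_KWH = 0.08  # assumption: illustrative electricity price

thor = annual_power_cost_usd(NICS, 50, USD_PER_KWH)
dpu_low = annual_power_cost_usd(NICS, 125, USD_PER_KWH)
dpu_high = annual_power_cost_usd(NICS, 150, USD_PER_KWH)

print(f"Saving at 125 W: ${dpu_low - thor:,.0f} per year")
print(f"Saving at 150 W: ${dpu_high - thor:,.0f} per year")
```

Under these assumptions the gap works out to roughly $5 million to $7 million per year, before counting the extra cooling that the additional 7.5 to 10 megawatts would require.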

Arista Networks partnered with Broadcom on the Thor Ultra launch, with Arista’s 800G Etherlink switch family completing the end-to-end fabric. Accton, a major ODM manufacturer that builds switches for hyperscalers, also issued support statements. An end-to-end 800G UEC-compliant Ethernet fabric from NICs through switches is commercially available now, not on a future roadmap.

The 100,000-GPU Test: How Ethernet Already Proved Itself at Scale

Before Ultra Ethernet had a 1.0 specification, before Broadcom had an 800G UEC-compliant NIC, Ethernet had already been used to connect the largest AI cluster ever built. The xAI Colossus system in Memphis, Tennessee, used Nvidia Spectrum-X Ethernet to connect 100,000 GPUs into a single training fabric. Gilad Shainer, vice president of networking at Nvidia, described Spectrum-X as “the only Ethernet infrastructure, not InfiniBand, that has managed to reach that scale by running single-job workloads across the entire system.”

That last qualification, single-job workloads across the entire system, is the key performance requirement for AI training at scale. Running a single distributed training job that efficiently utilizes every GPU in a 100,000-GPU cluster without the collective operations becoming the bottleneck is a different challenge from running thousands of smaller independent jobs on the same hardware. The Colossus deployment confirmed that Ethernet-based fabric could meet that requirement at a scale that had never been attempted before.

Spectrum-X is Nvidia’s own Ethernet product rather than a UEC-compliant open standard implementation. But its success at Colossus did two important things for the broader Ethernet ecosystem. It demonstrated at unprecedented scale that Ethernet could work for the hardest AI workloads. And it gave the UEC consortium a concrete performance target to aim for, with the credibility of a real deployment rather than theoretical benchmarks.

AMD’s Pensando Pollara 400, a UEC-compliant NIC rather than a proprietary Nvidia product, made its first commercial deployment at Oracle Cloud Infrastructure. AMD describes it as capable of interconnecting scale-out environments containing up to one million GPUs, claiming 10 percent higher RDMA performance compared to existing Ethernet solutions. The Oracle deployment represents the first production use of a fully UEC-compliant product, moving the specification from paper to running infrastructure.

What Is Coming in Late 2026: 1.6 Terabit Ethernet

The UEC specification and the current 800GbE products are not the ceiling of where Ethernet is going in AI networking. The IEEE 802.3dj standard, which is currently on track for completion in late 2026, defines Ethernet at 200G, 400G, 800G, and 1.6 Terabit speeds using 200G per lane SerDes technology. Early 200G-per-lane products are expected to reach the market during 2026 even before the standard is formally completed.

1.6 Terabit per port Ethernet is a bandwidth level that would have seemed absurd in any networking context five years ago. At 1.6 Tbps per port with 64-port switches, a single switch provides over 100 Tbps of switching capacity. A network fabric built from these switches connecting thousands of GPUs would deliver a raw data movement capability that changes the economics of both AI training and inference in ways that current architectures do not fully account for.
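
The switch arithmetic can be spelled out as follows. The lane and port figures come from the text; the two-tier endpoint formula assumes a standard non-blocking leaf-spine design in which every leaf switch splits its ports evenly between hosts and spines, which is our assumption rather than anything the standard mandates.

```python
LANE_GBPS = 200      # 200G per lane SerDes
LANES_PER_PORT = 8   # eight lanes make one 1.6T port
PORTS = 64           # switch radix used in the text

port_gbps = LANE_GBPS * LANES_PER_PORT
switch_tbps = PORTS * port_gbps / 1000


def two_tier_endpoints(radix: int) -> int:
    """Max endpoints in a non-blocking two-tier leaf-spine fabric.

    Each leaf uses half its ports for hosts and half for spines, and each
    spine port connects one leaf, so there can be up to `radix` leaves.
    """
    return radix * (radix // 2)


print(port_gbps)                  # 1600
print(switch_tbps)                # 102.4
print(two_tier_endpoints(PORTS))  # 2048
```

So two tiers of 64-port switches could attach about two thousand endpoints at full 1.6 Tbps each, and larger clusters would need a third tier or higher-radix switches.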

Peter Jones, chair of the Ethernet Alliance, confirmed to Network World that even as 800G and 200G-per-lane work is near completion, the community is “already preparing for the next step: initiating a 400G-per-lane project to address the demands of the hyperscalers for AI networking.” The roadmap does not stop at 1.6 Terabit. It treats that as a milestone on the way to something higher.

The UEC itself has three specific technical priorities for 2026 that will appear in future specification revisions. Programmable Congestion Management will allow anyone to implement custom congestion control algorithms using a standard language that works on any compliant NIC, providing flexibility for hyperscalers that want to optimize for their specific workload patterns. Congestion Signaling standardizes a mechanism for packets to carry high-fidelity information about network congestion, enabling more accurate and faster responses to changing conditions. In-Network Collectives will move the reduction operations common to AI training, the AllReduce operations that are typically the main bottleneck in distributed training, from the host GPUs into the network fabric itself, potentially eliminating the biggest single performance constraint in large-scale training.
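
To see why moving the reduction into the fabric is attractive, compare how much data each GPU has to send. This sketch uses the textbook cost of a ring AllReduce and an idealized in-network model in which each GPU sends its buffer to the switch once and receives the result once; it is an illustration of the general idea, not a description of how UEC In-Network Collectives will actually work.

```python
def ring_allreduce_bytes_sent(num_gpus: int, payload_bytes: int) -> float:
    """Bytes each GPU sends in a ring AllReduce (reduce-scatter + all-gather)."""
    return 2 * (num_gpus - 1) / num_gpus * payload_bytes


def ring_allreduce_steps(num_gpus: int) -> int:
    """Sequential communication steps in a ring AllReduce."""
    return 2 * (num_gpus - 1)


def in_network_bytes_sent(payload_bytes: int) -> float:
    """Idealized in-network reduction: each GPU sends its buffer exactly once."""
    return float(payload_bytes)


GIB = 2**30
n, size = 1024, 1 * GIB  # hypothetical: 1,024 GPUs, 1 GiB of gradients each

print(ring_allreduce_bytes_sent(n, size) / GIB)  # 1.998046875 GiB sent per GPU
print(ring_allreduce_steps(n))                   # 2046 dependent steps
print(in_network_bytes_sent(size) / GIB)         # 1.0 GiB sent per GPU
```

In this simplified model the fabric roughly halves the bytes each GPU injects and, more importantly, replaces thousands of dependent ring steps with a single trip up and down the switch tree.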

Why This Matters Beyond the Data Center

The Ultra Ethernet story is a data center infrastructure story at the technical level. At the industry level it is something more significant: a coordinated effort by most of the networking and semiconductor industry to prevent any single vendor from controlling the connectivity fabric of AI compute at the scale AI compute is being built.

Nvidia’s NVLink dominance within individual racks is secure for the foreseeable future. The performance advantage at the scale-up layer is real and not easily replicated by an open standard running on commodity hardware. But the scale-out layer, the fabric that connects the thousands of racks in a hyperscale AI cluster, is where Ethernet is winning. The IDC numbers confirm it is already happening. The UEC specification gives it a standards-based foundation. The Broadcom Thor Ultra and AMD Pensando Pollara give it production hardware. The xAI Colossus proves it works at 100,000 nodes.

For anyone building or thinking about AI infrastructure at any scale, the practical implication is that the era of InfiniBand being the only credible choice for serious AI networking is over. Ultra Ethernet offers multi-vendor optionality, backward compatibility with existing switching infrastructure, lower power consumption per NIC, and a public specification that hundreds of companies are actively implementing. The performance gap that InfiniBand once held has largely closed for scale-out workloads, and the cost and vendor flexibility advantages of Ethernet are real.

There is also a longer-term implication worth noting. The data center networking market growing from $46 billion to a projected $103 billion by 2030 is one of the larger infrastructure investment cycles in the technology industry’s history. An open standard that prevents any single vendor from capturing that market creates different economic dynamics than a proprietary solution that does. The UEC’s 120-plus member companies all have an interest in the market growing without any single entity extracting an outsized share of it through lock-in. That alignment of incentives is part of why the consortium reached 1,500 active participants faster than any comparable Linux Foundation project.

What kind of networking infrastructure are you working with right now? I am specifically curious whether anyone is running InfiniBand deployments that they are evaluating moving to Ethernet, or whether you are building new AI infrastructure and making the choice between them for the first time. The practical decision factors look different depending on whether you are migrating or starting fresh, and that is where the real tradeoffs live.

References (March 2026):

IDC: Data center Ethernet market Q3 2025 (62% YoY growth, 800 GbE 91.6% surge): Network World, January 7, 2026, networkworld.com

UEC 1.0 specification launch (June 11, 2025): ultraethernet.org

Broadcom Thor Ultra 800G UEC-compliant NIC announcement (October 14, 2025): broadcom.com

Network World analysis of Thor Ultra (Hasan Siraj, Broadcom): networkworld.com

HPCwire: Ultra Ethernet and xAI Colossus 100,000-node deployment: hpcwire.com

UEC founding members and Linux Foundation record growth: Data Center Dynamics, HPE Community

IEEE 802.3dj standard (1.6 Tbps, 200G/lane): Network World, January 7, 2026

BCC Research: Data center networking market $46B (2025) to $103B (2030): Network World, February 4, 2026

AMD Pensando Pollara 400 Oracle Cloud deployment: Data Center Dynamics

Ethernet has been pronounced dead by faster, more specialized alternatives roughly once per decade since 1985.

In 2026 it is connecting 100,000-GPU AI clusters and its data center switch market just grew 62 percent year over year.
