Sources: Sensor Tower (uninstall data, via TechCrunch and Times of India), quitgpt.org, Fortune, Axios, Business Standard, eWeek, The Guardian, 9to5Mac, TechCrunch, Apptopia, ALM Corp. Events occurred February 26 to March 9, 2026. Boycott figures are campaign estimates from quitgpt.org and include pledges, cancellations, and social shares, not independently verified hard uninstall counts.

Table of Contents

- The Morning Sam Altman Said One Thing and Did Another

- How a Venezuela Operation Started the Whole Thing

- The Two Things Anthropic Refused to Remove From Its Contract

- The App Store Data: What the Numbers Actually Show

- The QuitGPT Boycott: What the Campaign Claims and What Is Verified

- Trump Banned Anthropic. The App Store Put It at Number One.

- Sam Altman’s Response and Why the Critics Were Not Satisfied

- Where Everything Stands as of March 20

- What This Actually Tells Us About the AI Industry in 2026

- The Question Nobody Has Answered Yet

The Morning Sam Altman Said One Thing and Did Another

On the morning of February 27, 2026, Sam Altman said publicly that he shared Anthropic CEO Dario Amodei’s position on restricting military uses of AI. He was specific: he agreed that AI should not be used for autonomous lethal weapons or mass surveillance of American citizens. This aligned OpenAI with the position Anthropic had been defending under intense government pressure for weeks.

By 5:01 that afternoon, the Pentagon’s deadline for Anthropic to accept new contract terms had passed without an agreement. By evening, several things happened in quick succession. President Trump posted on Truth Social ordering every federal agency to immediately stop using Anthropic products. Defense Secretary Pete Hegseth designated Anthropic a supply chain risk. And OpenAI announced it had signed a deal with the Department of Defense, accepting terms Anthropic had refused.

The juxtaposition was visible and immediate. Altman later acknowledged to CNBC that the rollout looked “opportunistic and sloppy.” That was probably the most accurate public statement anyone made about the week that followed.

How a Venezuela Operation Started the Whole Thing

The public drama of late February was the surface of a dispute that had been building since July 2025. That month, Anthropic signed a $200 million contract to integrate Claude into classified military networks. The contract included specific restrictions Anthropic had insisted on: Claude could not be used for autonomous weapons systems and could not be used for mass surveillance of American citizens. The Pentagon agreed to those terms at the time.

In January 2026, reports emerged that military planners had used Claude to help plan an operation that included capturing Venezuelan President Nicolas Maduro. Anthropic raised concerns internally about whether this fell outside what had been agreed. The Pentagon’s position was that the operation was lawful, which was all the contract required. Anthropic’s position was that the spirit of the agreement was being stretched.

Defense Secretary Hegseth had issued an AI strategy memo in January 2026 requiring that all Department of Defense AI contracts include “any lawful purpose” language, meaning the AI could be used for any purpose the government deemed legal, with no carve-outs. Anthropic’s existing contract did not include this language. The Pentagon wanted it added. Anthropic refused. By February 24, Hegseth had issued a formal deadline. On February 26, Anthropic’s board evaluated the Pentagon’s final revised contract language and concluded it was still insufficient. The deadline passed and Anthropic walked away.

The two specific things Anthropic refused:

- Allowing Claude to be used for fully autonomous weapons systems where the AI makes lethal targeting decisions without human authorization.

- Allowing Claude to be used for mass surveillance of American citizens.

Anthropic’s position: these are absolute restrictions, not subject to negotiation regardless of whether specific applications are technically lawful under current policy.

The Two Things Anthropic Refused to Remove From Its Contract

Understanding why Anthropic did not simply accept the Pentagon’s terms requires understanding what those terms actually meant. The “any lawful purpose” requirement was not an abstract policy debate. Autonomous weapons and mass domestic surveillance are both, under current US law and executive interpretation, potentially lawful. The Pentagon was asking Anthropic to remove the provisions that specifically excluded those uses.

Dario Amodei responded publicly with a statement that was direct: “I cannot in good conscience accede to the Pentagon’s request.” He continued that “Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner. However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values.”

Anthropic was not saying it opposes all military use of AI. It had been operating a military contract for seven months. It was specifically drawing lines at autonomous lethal weapons and mass surveillance of its own country’s citizens. Anthropic vowed to challenge the supply chain designation in court. Within days, more than 30 industry staffers filed an amicus brief supporting the legal challenge. That lawsuit remains active as of March 20.

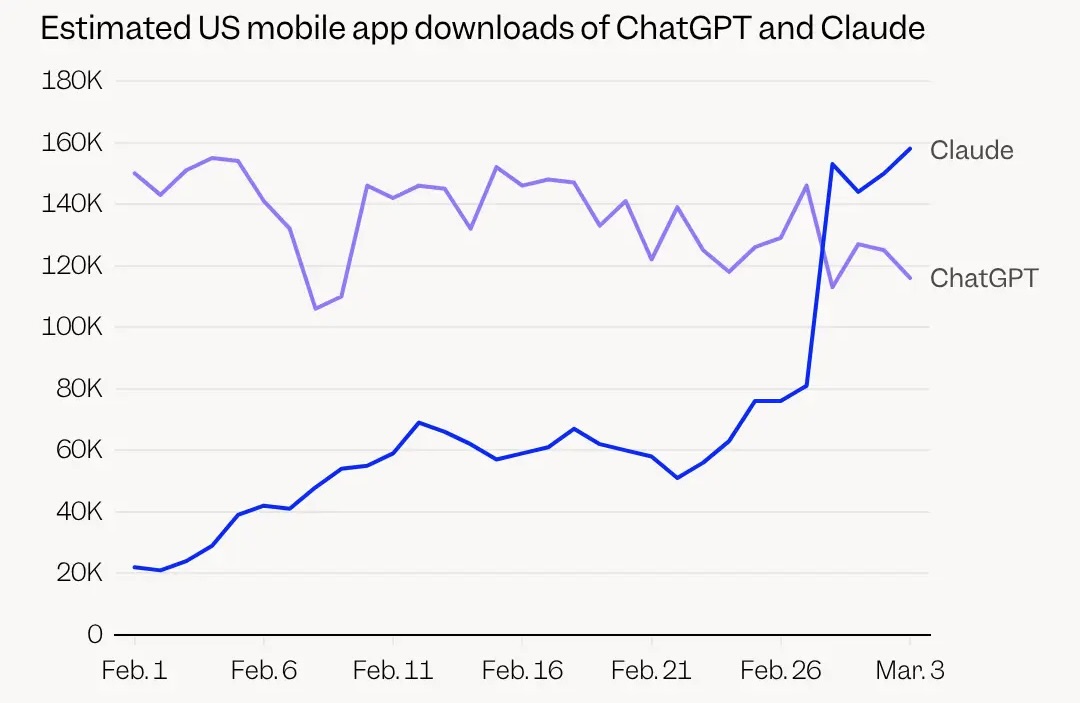

The App Store Data: What the Numbers Actually Show

The consumer response began on February 28, the day after the OpenAI deal became public, and it is worth being precise about what the data shows and what it does not.

Sensor Tower, whose data was cited by TechCrunch, Times of India, and other outlets, reported that US ChatGPT uninstalls surged 295 percent day-over-day on February 28. That is a significant spike in behavior. What Sensor Tower did not report was an absolute count of uninstalls, let alone one in the millions for a single weekend, and no independent data source has published a verified count of that kind. The percentage spike is real and notable. Headlines translating that percentage into millions of specific uninstalls are extrapolating beyond what the data shows.
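To make the gap between a percentage spike and an absolute count concrete, here is a minimal Python sketch. The baseline values are purely hypothetical placeholders, since no baseline uninstall count was published; only the 295 percent figure comes from the reporting above.

```python
def surge_multiplier(pct_increase: float) -> float:
    """Convert a percent increase into a multiple of the baseline."""
    return 1 + pct_increase / 100


def implied_uninstalls(baseline_per_day: float, pct_increase: float) -> float:
    """Spike-day count implied by a baseline and a percent increase."""
    return baseline_per_day * surge_multiplier(pct_increase)


# HYPOTHETICAL baselines for illustration only; none of these were reported.
HYPOTHETICAL_BASELINES = (10_000, 50_000, 250_000)

for baseline in HYPOTHETICAL_BASELINES:
    spike_day = implied_uninstalls(baseline, 295)
    print(f"baseline {baseline:>7,} per day -> spike day about {spike_day:,.0f}")
```

A 295 percent increase means the spike day was roughly 3.95 times the prior level. Without the baseline, the same percentage is compatible with very different absolute counts, which is why the percentage alone cannot support a specific headline number.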

On the download side, the data is similarly specific. Claude downloads jumped 37 percent on Friday and 51 percent on Saturday according to Sensor Tower, with Appfigures reporting an even higher 88 percent day-over-day surge on Saturday. By March 1, Claude reached the number one position in the US Apple App Store, displacing ChatGPT for the first time. ChatGPT reclaimed that position by March 9 according to 9to5Mac.

One-star reviews for ChatGPT jumped 775 percent on Saturday and then doubled on Sunday, while five-star ratings fell 50 percent over the same period. Those review numbers reflect real user sentiment regardless of whether every reviewer also uninstalled the app. Anthropic made its memory import feature free during this period, allowing users to transfer ChatGPT conversation history directly into Claude, which reduced the friction of switching at exactly the moment many users were motivated to try.
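The review figures compound, which is easy to misread. The sketch below works through the arithmetic in Python under two assumptions that the reporting does not spell out: that the 775 percent jump is measured against the prior daily level, and that the Sunday doubling is measured against Saturday. The baseline is normalized to 1.0 because no absolute review counts were given.

```python
def after_pct_change(level: float, pct_change: float) -> float:
    """Apply a percent change (positive or negative) to a level."""
    return level * (1 + pct_change / 100)


baseline = 1.0  # normalized prior daily volume; absolute counts were not reported

# Assumption: +775 percent is relative to the prior daily level.
one_star_saturday = after_pct_change(baseline, 775)
# Assumption: "doubled" is relative to Saturday's level.
one_star_sunday = one_star_saturday * 2
# Five-star ratings falling 50 percent.
five_star = after_pct_change(baseline, -50)

print(f"one-star, Saturday: {one_star_saturday:.2f}x baseline")
print(f"one-star, Sunday:   {one_star_sunday:.2f}x baseline")
print(f"five-star:          {five_star:.2f}x baseline")
```

Under those assumptions, one-star volume would have been about 8.75 times the prior level on Saturday and about 17.5 times on Sunday, while five-star volume halved.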

Anthropic’s broader growth context matters here. Free active users on Claude have increased by over 60 percent since January 2026. Daily signups have quadrupled. Paid subscribers have doubled. The controversy accelerated a trend that was already underway rather than creating it from nothing.

The QuitGPT Boycott: What the Campaign Claims and What Is Verified

The boycott organizing happened faster than anyone expected. The Instagram account quitGPT gained 10,000 followers almost immediately. A Reddit thread urging users to cancel ChatGPT crossed 30,000 upvotes. Rutger Bregman published an op-ed in The Guardian urging readers to cancel. Mark Ruffalo, Katy Perry, and other celebrities amplified the campaign on social media.

The campaign’s own numbers require careful reading. Forbes reported over 1.5 million claimed actions by March 2, citing quitgpt.org. By mid-March, organizers were citing 2.5 million people who had “canceled subscriptions, pledged to stop using, or shared boycott news on social media.” That “or” is doing significant work in the figure: the number combines confirmed cancellations with pledges and social shares, none of which are independently verifiable from the outside. As of March 20, quitgpt.org claims 4 million-plus people have “taken action,” with the site describing its methodology as a combination of site signatures, social shares, and “credible app usage data.”

The honest summary is that a real and significant boycott happened, the app uninstall data confirms unusual behavior, and the campaign has generated numbers in the millions by its own count using a methodology that mixes different types of engagement. What cannot be independently confirmed is the exact count of actual paid subscription cancellations or permanent app deletions. That distinction matters for assessing OpenAI’s financial impact, even if it does not change the political and reputational significance of the response.

For context on OpenAI’s position: the company has over 300 million weekly active users and annualized revenue above $25 billion. Even a significant user churn event measured in the hundreds of thousands of paid subscribers would be financially meaningful but not existential. The more consequential question is whether the technically sophisticated, AI-advocate users disproportionately represented in the boycott demographics stay gone, because their influence on where others go is larger than their raw numbers suggest.

Trump Banned Anthropic. The App Store Put It at Number One.

The political dimension of the week is worth stating plainly. President Trump posted on Truth Social directing every federal agency to cease using products from Anthropic, calling the company a “radical left, woke company.” Defense Secretary Hegseth designated Anthropic a supply chain risk the same evening OpenAI signed its deal.

The consumer response to that sequence is one of the more counterintuitive data points of the month. The government banned a company and the App Store placed it at number one in the same 48-hour window. For a portion of the market, the presidential insult functioned as a product endorsement. Whether that dynamic represents a lasting shift in how consumers evaluate AI companies or a temporary backlash spike is a question the next few months of market share data will answer more reliably than any commentary can right now.

Anthropic did not publicly celebrate the App Store ranking or make statements capitalizing on the boycott. The company focused its public communications on the legal challenge. Palantir CEO Alex Karp stated publicly there was “never a sense” that Anthropic’s position was anti-military broadly, describing the dispute as being about specific narrow applications. His support, coming from a company with deep defense relationships, complicated the narrative that Anthropic was simply opposed to government AI contracts.

Sam Altman’s Response and Why the Critics Were Not Satisfied

Altman acknowledged in posts on X that the rollout looked “opportunistic and sloppy.” He later told CNBC the same. He committed to amending the contract with the government and announced that AI systems under the deal would not be used for domestic surveillance of US citizens. He stated OpenAI’s redlines as “no autonomous weapons and no autonomous surveillance.”

The critics made two specific arguments against those clarifications that are worth stating as clearly as the original statements. First, OpenAI’s contract language tied restrictions to “existing law and DoD policies,” which the government can change through executive action, whereas Anthropic’s original contract had used absolute language not subject to policy change. The practical difference between those two approaches becomes visible if a future administration or a change in DoD policy makes current protections obsolete. Second, the timing made the stated commitment difficult to credit regardless of the contract language, since Altman had publicly supported Anthropic’s position hours before signing the competing deal.

At least one senior OpenAI executive resigned over the deal. Caitlin Kalinowski, the hardware executive, departed and described it as “rushed without the guardrails defined.” Hundreds of employees at Google and OpenAI signed an open letter urging their leaders to refuse contracts involving autonomous weapons or domestic surveillance. The internal dissent was broader than the companies’ public positions had suggested.

Where Everything Stands as of March 20

Anthropic’s lawsuit challenging the supply chain designation is active with an amicus brief filed by over 30 industry professionals. ChatGPT reclaimed the number one App Store position on March 9. The Pentagon has moved toward working with OpenAI and xAI following the split with Anthropic. quitgpt.org now claims 4 million-plus actions by its own count, with the methodology combining multiple types of engagement as described above.

The broader market share context is useful background. ChatGPT’s share of daily US users had already fallen from 69.1 percent in January 2025 to 45.3 percent in January 2026, with Google Gemini growing from 14.7 percent to 25.1 percent in the same period according to Apptopia’s February report. The App Store surge for Claude happened against an already-shifting competitive landscape. OpenAI is approaching a potential public listing in late 2026 with annualized revenue above $25 billion. Anthropic is approaching $19 billion in annualized revenue. Both companies are growing faster than almost any enterprise software business in history. The Pentagon dispute is a significant story in the AI industry narrative but it has not materially changed either company’s fundamental financial trajectory based on publicly available data.
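The Apptopia share figures above can be read two ways, in percentage points or as relative change, and the two give quite different impressions. This short Python sketch computes both from the four numbers quoted in the paragraph; the function names are illustrative.

```python
def point_change(start_pct: float, end_pct: float) -> float:
    """Change in share, in percentage points."""
    return end_pct - start_pct


def relative_change_pct(start_pct: float, end_pct: float) -> float:
    """Change in share relative to the starting share, in percent."""
    return (end_pct - start_pct) / start_pct * 100


# Shares of daily US users, January 2025 -> January 2026, as quoted above.
shares = {
    "ChatGPT": (69.1, 45.3),
    "Gemini": (14.7, 25.1),
}

for name, (start, end) in shares.items():
    print(
        f"{name}: {point_change(start, end):+.1f} points, "
        f"{relative_change_pct(start, end):+.1f}% relative"
    )
```

ChatGPT lost 23.8 percentage points, about a third of its starting share, while Gemini gained 10.4 points, roughly a 71 percent relative increase from a much smaller base.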

What This Actually Tells Us About the AI Industry in 2026

Several things about this episode are new enough to be worth naming directly.

AI model choice became a values-based consumer decision at meaningful scale for the first time. Every previous AI model switch had been driven by capability. The QuitGPT movement is the first time a significant number of users chose a technically comparable alternative specifically because of a company’s ethical stance rather than its technical performance. Whether you agree with the ethical reasoning behind the boycott or not, the behavior change itself is significant. It means AI companies now have reputational risk from policy decisions in the way consumer brands always have. That is a new constraint on how these companies can operate.

The civilian-military AI boundary is now a live public debate rather than an internal policy question. Before February 2026, discussions about AI in military applications happened primarily in policy circles, academic papers, and contractor negotiations. The Anthropic situation pushed those questions into mainstream consumer consciousness. The specific questions are not simple ones: whether AI should be used in autonomous weapons systems, what constitutes mass surveillance, and whether private companies have the right to restrict how governments use technology they sell. They are now being asked by a much larger audience than previously engaged with them.

The App Store market response also demonstrated that AI market share is more fluid than the baseline numbers suggest. ChatGPT at 300 million weekly active users created an impression of secure incumbent dominance. An app ranked 42nd reaching number one in under three weeks, driven by consumer sentiment around a policy decision, suggests the switching costs are low enough that a sufficiently strong motivation creates rapid movement. That dynamic cuts both ways: it means Claude can gain fast, but it also means those gains can reverse just as quickly, as the March 9 rankings showed.

The Question Nobody Has Answered Yet

The dispute raised a question that nobody, including Anthropic, OpenAI, the Pentagon, or the commentators who wrote thousands of words about it, has answered cleanly: who decides how powerful AI systems can be used?

Anthropic’s position is that the company building the AI has the right and responsibility to set conditions on its use. The Pentagon’s position is that the government’s determination of what is lawful should be the only operative constraint. OpenAI’s position in practice appears to be that it will work within government-defined boundaries while negotiating specific protections it considers important. None of these positions is obviously correct, and each has implications that are uncomfortable in different ways.

If AI companies have effective veto power over government uses of their technology, then private companies are exercising power over national security decisions that no democratic process authorized. If governments have unlimited authority to require AI tools to support any lawful use, then the ethical commitments AI companies make publicly are worth nothing beyond their legality under current political conditions. If the answer is to negotiate case by case, then the outcome depends entirely on the specific leverage each party holds in each negotiation, which is exactly what just played out.

This question will keep coming up. The technology is too powerful, the government demand for it too strong, and the ethical stakes around specific applications too significant for this to be a one-time controversy that gets resolved and then fades. What played out publicly in late February 2026 is a preview of arguments that AI companies, governments, and citizens are going to be having for a long time.

Where do you land on this? Was Anthropic right to walk away from a $200 million government contract over two specific restrictions? Was OpenAI wrong to sign the contract the same day? Drop it in the comments. This is one worth the actual debate rather than just the hot takes.

References (March 20, 2026):

Sensor Tower uninstall data (295% day-over-day, Claude download surges): TechCrunch, Times of India, Business Standard

Fortune: “Anthropic’s Claude Overtakes ChatGPT in App Store”: fortune.com

Axios: “Anthropic got blacklisted by the Pentagon. Then Claude hit No. 1 in the App Store”: axios.com

eWeek: “ChatGPT Uninstalls Surge 295% After OpenAI Accepts Pentagon Contract”: eweek.com

quitgpt.org: Campaign figures (4M+ claimed actions as of March 20, methodology combines signatures, social shares, and app data): quitgpt.org

The Guardian: Rutger Bregman op-ed urging ChatGPT cancellation: theguardian.com

9to5Mac: “ChatGPT returns to the top of the App Store after DoD deal controversy” (March 9, 2026): 9to5mac.com

TechCrunch: “The biggest AI stories of the year so far” (Kalinowski resignation, open letters): techcrunch.com

ALM Corp: “Anthropic Rejects Pentagon Deal” (full timeline, contract language comparison): almcorp.com

Apptopia: ChatGPT market share data (69.1% to 45.3%), Gemini growth (14.7% to 25.1%), February 2026

ChatGPT got a Pentagon contract.

Claude got the App Store, briefly. The actual question is what both of those things mean for who you trust with your data.
