Table of Contents

- Something Quietly Changed About How Networks Get Managed

- AIOps: What It Actually Means When AI Runs Your Network Operations

- Self-Healing Networks: The Specific Thing That Changed in 2025

- AI Traffic Management: From Scheduled Maintenance to Continuous Optimization

- Network-as-a-Service: Consuming Networks Like Cloud Compute

- The Skills Gap Nobody Warned Network Engineers About

- The Catch: Why AI Cannot Just Be Switched On

- What Real Deployments Look Like: Three Concrete Examples

- The Autonomy Spectrum: From Level 0 to Level 5

- Where This Leaves Network Engineers and Organizations in 2026

Something Quietly Changed About How Networks Get Managed

For most of the history of enterprise networking, the operational model was reactive. Something breaks, someone gets paged, a network engineer logs into the device, diagnoses the problem, and applies a fix. The network is documented in spreadsheets and Visio diagrams that are updated when someone remembers to update them, which is less often than the network actually changes. Configuration drift accumulates silently until something breaks in a way that takes three engineers six hours to untangle because nobody can agree on what the current state of the network actually is.

That model is being replaced. Not upgraded, not supplemented with a dashboard, but structurally replaced by something that operates differently at a fundamental level. AI-driven network operations, often called AIOps for networking, has moved from a category of vendor marketing claims into something that real organizations are running in production and citing specific operational outcomes from. IDC surveyed more than 500 IT decision-makers in early 2026 and found a significant increase in the percentage of network management tasks being handled by AI automation, with the trend expected to accelerate through 2028.

The transition is not happening uniformly. Some organizations have genuinely autonomous network operations running in specific domains. Most are somewhere in the middle: using AI for monitoring and anomaly detection while humans still make configuration decisions. A meaningful number are still running spreadsheet-driven operations with manual CLI configuration. The gap between these groups is widening, and where you fall on that spectrum increasingly determines whether you can hire the people you need, keep pace with the complexity of AI workloads, and compete with organizations that have invested in automation.

Understanding what AI-driven networking actually means in practice, beyond the vendor pitch, is what this article is about.

AIOps: What It Actually Means When AI Runs Your Network Operations

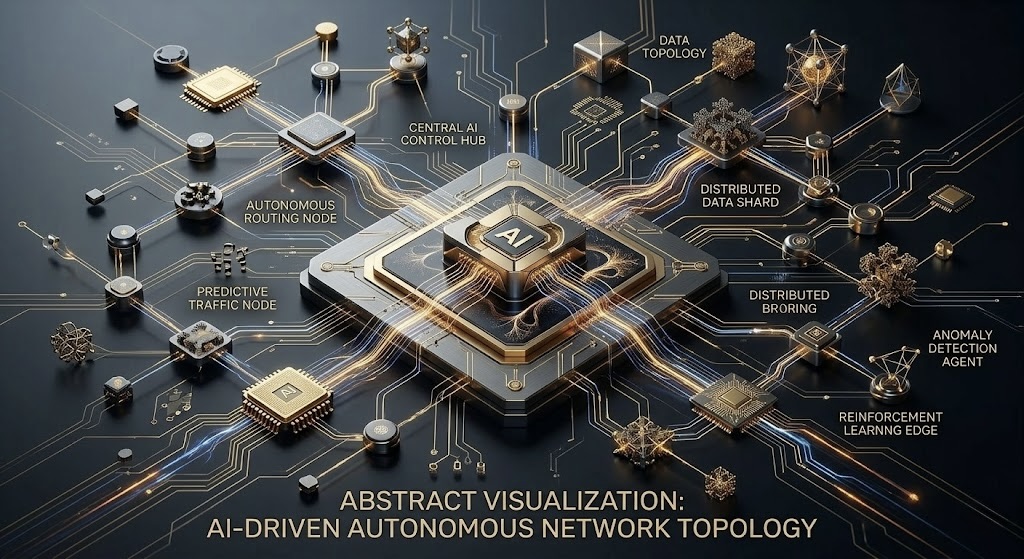

AIOps stands for Artificial Intelligence for IT Operations. In the networking context specifically, it refers to using machine learning and AI to automate the observation, analysis, and response functions that network operations centers traditionally handle with human staff. The definition sounds straightforward. The implementation is anything but, because the gap between “AI that helps humans make better decisions” and “AI that makes decisions and implements them autonomously” is enormous in both technical complexity and operational risk.

At the observation layer, AIOps collects telemetry from across the network at a volume and granularity that human operators cannot process manually. Modern networks generate enormous amounts of data: device metrics, flow records, syslog entries, SNMP traps, BGP route announcements, interface errors, application performance signals, and more. A human team monitoring all of this has to make constant decisions about what to look at and what to ignore, which means important signals routinely get missed until they become incidents. AI systems observe everything continuously, apply statistical models to identify anomalies, and surface the signals that matter while suppressing the noise that does not.
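To make "apply statistical models to identify anomalies" concrete, here is a minimal sketch of one of the simplest such models: a rolling z-score over a single metric. The function name, window size, and threshold are illustrative assumptions, not taken from any AIOps product, and production systems generally use far richer models (seasonality, multivariate baselines).

```python
from collections import deque
from statistics import mean, stdev


def rolling_zscore_anomalies(samples, window=20, threshold=3.0):
    """Return the indices of samples that deviate strongly from the recent baseline.

    A sample is flagged when it sits more than `threshold` standard deviations
    away from the mean of the previous `window` non-anomalous samples.
    """
    history = deque(maxlen=window)
    anomalies = []
    for index, value in enumerate(samples):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # A perfectly flat baseline (sigma == 0) is never flagged in this sketch.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(index)
                continue  # keep the outlier out of the baseline
        history.append(value)
    return anomalies
```

Fed a series of interface utilization readings that hover around 10 percent with one reading of 95 percent, the function flags only the spike. The hard part in practice is not this arithmetic but choosing which of thousands of metrics to baseline and how to handle legitimate step changes.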

At the analysis layer, AIOps correlates events across different data sources to identify root causes rather than symptoms. A traditional network operations workflow for a complex incident involves pulling logs from multiple systems, correlating timestamps manually, and working backwards from the observable failure to find the underlying cause. This process takes time and depends on the individual engineer’s familiarity with the specific environment. An AI system that has been trained on the historical behavior of the network can perform the same correlation in seconds, flagging not just what broke but what caused it and what else might break as a consequence.
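The manual timestamp correlation described above can be sketched in a few lines. This is a deliberately naive illustration, assuming events have already been normalized into one record shape. The `Event` fields, the source labels, and the 30-second gap are hypothetical choices. Temporal proximity is only a hint at causality, which is where trained models earn their keep.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    timestamp: float  # seconds since epoch
    source: str       # illustrative labels such as "snmp", "syslog", "bgp"
    device: str
    message: str


def correlate(events, gap_seconds=30.0):
    """Group events into incidents.

    Events are sorted by time. A new incident starts whenever the gap since
    the previous event exceeds `gap_seconds`.
    """
    incidents = []
    for event in sorted(events, key=lambda e: e.timestamp):
        if incidents and event.timestamp - incidents[-1][-1].timestamp <= gap_seconds:
            incidents[-1].append(event)
        else:
            incidents.append([event])
    return incidents


def earliest_event(incident):
    """Return the first event in an incident: a starting point for root-cause review."""
    return incident[0]
```

The earliest event in a group is a candidate root cause, not a verdict. A real system would also weigh topology (which device sits upstream of which) and historical patterns.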

The response layer is where the spectrum from human-assisted to autonomous begins to matter. An AIOps system that analyzes a problem and sends a Slack notification to the on-call engineer with a recommended fix is qualitatively different from one that implements the fix directly. Both exist. Both have their place. The right level of autonomy depends on the risk tolerance of the specific action, the confidence level of the AI’s diagnosis, and whether the action can be rolled back if it makes things worse.
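The three factors named above (action risk, diagnostic confidence, and reversibility) can be expressed as a simple policy gate. The sketch below is one possible shape for that gate. The thresholds and the `Response` categories are assumptions chosen for illustration, not values from any vendor or standard.

```python
from enum import Enum


class Response(Enum):
    AUTO_REMEDIATE = "auto_remediate"
    RECOMMEND = "recommend_to_on_call"
    ALERT_ONLY = "alert_only"


def choose_response(
    risk,
    confidence,
    reversible,
    max_auto_risk=0.3,
    min_auto_confidence=0.9,
    min_recommend_confidence=0.6,
):
    """Pick how much autonomy to allow for one proposed action.

    `risk` and `confidence` are scores in [0, 1]. The thresholds are
    hypothetical defaults that an organization would tune to its own
    risk tolerance.
    """
    if reversible and risk <= max_auto_risk and confidence >= min_auto_confidence:
        return Response.AUTO_REMEDIATE
    if confidence >= min_recommend_confidence:
        return Response.RECOMMEND
    return Response.ALERT_ONLY
```

Under these defaults, a low-risk, high-confidence, reversible action is applied automatically. The same action without a rollback path is downgraded to a recommendation for the on-call engineer.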

Self-Healing Networks: The Specific Thing That Changed in 2025

The phrase “self-healing network” has been in networking vendor marketing since at least 2015. For most of that time it was aspirational. In 2025, enough real deployments accumulated enough operational data that the concept moved from aspiration to a thing organizations were actually pointing to in case studies with specific metrics attached. NCTA’s 2026 broadband connectivity report describes this shift explicitly, saying AI in 2026 is transitioning from reactive support tools to autonomous systems that can self-optimize, predict demand spikes, adjust capacity, and preempt performance bottlenecks.

Self-healing operates in a few specific ways depending on the network layer and the failure type. For link failures in networks with redundant paths, the response has been automated since OSPF and BGP were invented. Routing protocols reroute traffic around failures without human intervention. That is not new. What AI adds is the ability to detect that a link is degrading before it fails, analyze whether the degradation pattern suggests imminent failure versus temporary interference, and either preemptively shift traffic or alert human operators with enough lead time to take action before users notice anything.
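As a rough illustration of detecting degradation before failure, the sketch below fits a straight line to recent error-rate samples and projects when the trend would cross a failure threshold. The linear model, the function names, and the units are simplifying assumptions. Real degradation is rarely linear, and distinguishing it from temporary interference takes more than a slope.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit. Returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    if var_x == 0:
        raise ValueError("need at least two samples taken at different times")
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / var_x
    return slope, mean_y - slope * mean_x


def minutes_until_threshold(minutes, error_rates, threshold):
    """Project how long until the error rate reaches `threshold`.

    Returns 0.0 if the latest sample already meets the threshold, None if
    the trend is flat or improving, otherwise the projected minutes measured
    from the most recent sample.
    """
    if error_rates[-1] >= threshold:
        return 0.0
    slope, intercept = fit_line(minutes, error_rates)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - minutes[-1])
```

A projected lead time like this is what lets a system choose between shifting traffic preemptively and simply alerting an operator with enough warning to act.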

For configuration-related failures, which are actually the most common cause of network outages, the self-healing dynamic is more complex. An AI system that can compare the current device configuration against the intended configuration, detect drift, and either flag the drift for human review or automatically remediate it is addressing the root cause of a significant fraction of network incidents. The challenge is that not all configuration drift is problematic. Sometimes a network engineer made a deliberate change that was not reflected in the documentation, and an AI system that aggressively rolls back changes to match a stale intended state would be disruptive. The sophistication in current implementations is in training the system to distinguish intentional changes from accidental ones, which requires enough historical context about who made changes, when, and through what process.
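The comparison step itself is the easy part, and a minimal sketch using Python's standard `difflib` shows why. The function name and the sample configuration lines are illustrative. Nothing here attempts the hard problem described above, which is deciding whether a detected difference was intentional.

```python
import difflib


def config_drift(intended, running):
    """Return the lines that differ between intended and running config.

    Lines prefixed with "-" exist only in the intended config. Lines
    prefixed with "+" exist only in the running config. An empty list
    means no drift was detected.
    """
    diff = list(
        difflib.unified_diff(
            intended.splitlines(),
            running.splitlines(),
            fromfile="intended",
            tofile="running",
            lineterm="",
            n=0,  # no context lines, only the changes themselves
        )
    )
    # Skip the two file-header lines, then drop the "@@" hunk markers.
    return [line for line in diff[2:] if not line.startswith("@@")]
```

A drift report like this becomes useful once it is joined with change history (who changed what, when, and through which process), so that a deliberate but undocumented change is flagged for review rather than rolled back automatically.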

Application-layer performance self-healing is the most ambitious implementation and the one that requires the most mature data foundation. AI systems that observe application performance signals, correlate them with network metrics, identify that specific application flows are degrading because of congestion on a particular path, and automatically adjust Quality of Service policies or traffic routing to restore performance are running in production at some large enterprises and service providers. The operational prerequisite is having enough visibility into both network and application behavior that the correlation is reliable rather than coincidental.

AI Traffic Management: From Scheduled Maintenance to Continuous Optimization

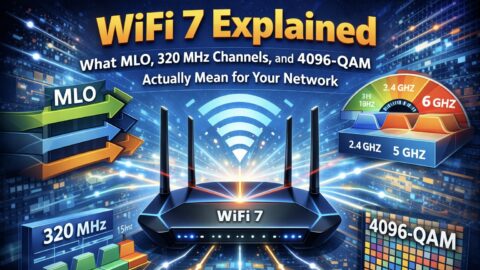

Traditional network traffic management operated on a planning cycle. Network engineers analyzed capacity reports periodically, identified where utilization was approaching thresholds, planned capacity upgrades, scheduled maintenance windows, and implemented changes in batches. The network’s configuration reflected decisions made weeks or months ago based on data that was already slightly stale when the analysis was done. In a network where traffic patterns change slowly and predictably, this worked adequately.

AI workloads broke this model. Training jobs generate traffic bursts that can saturate core links for hours, then disappear completely when the job ends. Inference traffic grows steadily for weeks as a new AI service scales, then can spike dramatically when a model becomes popular. The temporal patterns of AI traffic are fundamentally different from the relatively smooth diurnal patterns of traditional enterprise traffic that capacity planning tools were calibrated for.

AI-driven traffic management addresses this by treating optimization as a continuous process rather than a periodic one. Systems that analyze real-time flow data across the network, predict traffic demands based on scheduled jobs and observed trends, and proactively adjust routing policies and traffic engineering parameters before congestion develops are the current state of the art at hyperscalers and advanced enterprise networks. NCTA describes specific implementations at cable operator networks where AI platforms analyze latency, spectrum usage, and device density in real time to maximize throughput and reliability, with the explicit capability to anticipate demand spikes before they happen.

The practical difference between reactive and predictive traffic management is most visible during the transition from normal operations to high-demand periods. A reactive system detects congestion after packets are being dropped, triggers rerouting, and restores performance after a delay. A predictive system identifies that a large training job is scheduled to start in 45 minutes, pre-positions traffic engineering changes to create capacity for it, and the training job’s traffic flows through a pre-cleared path without encountering congestion at all. The training job runs faster, the other traffic sharing the network is less affected, and nobody had to wake up a network engineer to make it happen.
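The predictive half of that comparison can be sketched as a look-ahead over the job schedule. The example below assumes the scheduler exposes each job's start time, target link, and expected bandwidth, which is a significant assumption in itself. The `ScheduledJob` fields, the link names, and the 80 percent utilization ceiling are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScheduledJob:
    name: str
    start_minute: int     # minutes from now
    link: str             # link the job's traffic is expected to use
    expected_gbps: float


def links_needing_headroom(
    jobs, baseline_gbps, capacity_gbps, horizon_minutes=60, max_utilization=0.8
):
    """Find links that upcoming jobs would push past `max_utilization`.

    `baseline_gbps` and `capacity_gbps` map link name to Gbps, and every
    link must appear in `capacity_gbps`. Returns a dict of
    {link: projected utilization} for links that need traffic engineering
    changes before the jobs start.
    """
    projected = dict(baseline_gbps)
    for job in jobs:
        if 0 <= job.start_minute <= horizon_minutes:
            projected[job.link] = projected.get(job.link, 0.0) + job.expected_gbps
    return {
        link: load / capacity_gbps[link]
        for link, load in projected.items()
        if load / capacity_gbps[link] > max_utilization
    }
```

With a training job due in 45 minutes that adds 60 Gbps to a 100 Gbps link already carrying 30 Gbps, the link is flagged at 90 percent projected utilization, early enough to move other traffic before the job starts.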

Network-as-a-Service: Consuming Networks Like Cloud Compute

Network-as-a-Service, usually abbreviated as NaaS, is the model where enterprises consume networking capacity as an on-demand service rather than owning and managing physical infrastructure. It is the network equivalent of what public cloud did to compute: instead of buying servers and managing them, you pay for instances when you need them and release them when you do not. Instead of buying switches, routers, and WAN links and managing their lifecycle, you pay for connectivity capacity that is provisioned through software APIs and billed based on usage.

Three API standards organizations are currently competing to define NaaS interoperability, and the one that wins will significantly shape how enterprises consume network services for the next decade. GSMA and the CAMARA initiative are focusing on programmable mobile and radio access networks. MEF’s LSO Sonata APIs are establishing themselves as the standard for east-west connectivity between service providers. TM Forum is addressing north-south integration across operational and business support systems and vendor infrastructure. Console Connect’s CTO Paul Gampe, in a January 2026 analysis, described the current state as “growing alignment that reduces fragmentation,” which is progress from the fully fragmented landscape of 2024 but not yet the unified standard that would allow true cross-provider programmability.

The AI connection to NaaS is what makes the model particularly relevant in 2026. AI training workloads frequently need to move massive datasets between locations, synchronize data across clouds, and support federated learning scenarios where model training happens across geographically distributed infrastructure. These use cases demand connectivity that is deterministic: no jitter, no congestion, no unpredictable latency. Packet-switched networks, where traffic shares paths with other flows and competes for capacity, cannot always provide this determinism. Transmission-on-demand, where a specific capacity path is reserved between two points for the duration of a job, addresses it directly. NaaS platforms that can provision this kind of deterministic connectivity through APIs, automatically and on-demand, are the WAN equivalent of what GPU reservations are in the compute world.

The adoption curve for NaaS is still in the early stages for most enterprises. The most aggressive adopters are organizations with significant multi-cloud footprints and highly variable connectivity requirements, which is an accurate description of most AI-first companies. For traditional enterprises with relatively stable connectivity needs and existing WAN contracts, the transition economics are not yet compelling enough to accelerate adoption. That is expected to change as the NaaS platforms mature and as more enterprise workloads develop the bursty, unpredictable connectivity patterns that make on-demand consumption more efficient than reserved capacity.

The Skills Gap Nobody Warned Network Engineers About

The transition to AI-driven networking is creating a specific skills gap that is worth naming directly. The gap is not between people who know networking and people who know AI. It is between network engineers who understand how their networks behave at a statistical level and can work with automated systems that act on that behavior, versus engineers whose skills are primarily in CLI configuration and reactive troubleshooting.

Robert Half research cited in NetworkWorld’s 2026 trends analysis found that 87 percent of IT leaders are willing to pay higher salaries to candidates who bring specialized skills to roles that previously paid standard rates. The specific skills commanding premiums in networking are automation and programmability, specifically Python scripting and network automation frameworks, cloud networking across at least one major provider, security-integrated network design, and enough data literacy to work with the telemetry and analytics platforms that AIOps systems produce. These are not exotic skills, but they are different from the CCNA-level knowledge that used to be the baseline for network operations roles.

The concern that network engineering roles will disappear because AI can handle routine operations is partially correct and mostly wrong. Routine configuration tasks, dashboard watching, and reactive incident response for common failure modes are increasingly being automated. Those specific task categories are genuine candidates for reduction. What grows as networks become more complex and more automated is the need for engineers who can design the automation, validate that it is behaving correctly, handle the exceptions that fall outside the automation’s training distribution, and architect the overall system with security and reliability requirements in mind. Current AI cannot reliably provide any of those skills.

The engineers who are building skills in network automation now, even at relatively basic levels like Ansible playbooks for configuration management and Python scripts for telemetry collection, are positioning themselves better than those waiting to see how the technology develops. The wait-and-see approach has a cost because the skill gap is already visible in compensation data. NetworkWorld’s trend analysis noted that networking roles continue to trend upward in compensation specifically because organizations need people who can design and safeguard modern infrastructure, and those people are increasingly difficult to find.

The Catch: Why AI Cannot Just Be Switched On

Every major analysis of AI in networking in 2026 includes a version of the same caveat: AI for network management requires a data foundation that many organizations do not have and cannot build quickly. The catch is not the AI itself. The catch is the prerequisite infrastructure for the AI to have reliable, complete data to work with.

AI-driven network operations are only as good as the telemetry they receive. A network that generates incomplete SNMP data because some devices are on old firmware, sends syslog output to a collector that occasionally drops events under load, and has flow data that covers 70 percent of traffic because some switches have it disabled for performance reasons cannot support reliable AI-driven anomaly detection. The model will have blind spots that correspond exactly to the gaps in its data, and those blind spots are not visible to the people relying on it because the AI does not know what it does not know.
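One way to make those blind spots visible is to audit telemetry coverage before any model is trained. The sketch below assumes an inventory that records which telemetry sources each device actually delivers. The source names and the inventory shape are illustrative. Note that it counts devices, not traffic volume, so it approximates rather than reproduces a figure like "70 percent of traffic".

```python
def telemetry_coverage(devices, required=("snmp", "syslog", "flow")):
    """Summarize telemetry coverage across an inventory.

    `devices` maps device name to the set of telemetry sources it delivers.
    Returns (coverage, gaps): `coverage` maps each required source to the
    fraction of devices providing it, and `gaps` maps each incomplete
    device to the sorted list of sources it is missing.
    """
    total = len(devices)
    if total == 0:
        raise ValueError("inventory is empty")
    coverage = {
        source: sum(1 for sources in devices.values() if source in sources) / total
        for source in required
    }
    gaps = {
        name: sorted(set(required) - set(sources))
        for name, sources in devices.items()
        if not set(required) <= set(sources)
    }
    return coverage, gaps
```

The `gaps` output is the more useful half: it names the specific devices where an anomaly detector would be blind, which turns an abstract coverage percentage into a remediation list.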

This prerequisite gap is the primary reason why organizations that try to implement AIOps without first investing in network observability get poor results and often conclude that AIOps does not work. NetworkWorld’s 2026 trends analysis captures this precisely: AI cannot just be switched on, and the investment in data infrastructure frequently needs to precede the investment in AI tooling by a year or more. Enterprises that built comprehensive observability, with full telemetry coverage, a normalized data model, and reliable collection infrastructure, before attempting to apply AI to network operations are the ones posting the case study numbers.

Configuration management is the other prerequisite that consistently surprises organizations. AI systems that manage network configuration need an accurate representation of the intended state of the network to compare against the actual state. If the intended state is documented inconsistently, spread across multiple systems with conflicting information, or simply not documented for portions of the network that were built informally, the AI has no reliable baseline to detect drift against. Infrastructure as code practices, where network configuration is defined in version-controlled files and applied through automated pipelines, provide the foundation that AI-driven configuration management requires. Organizations that have not adopted these practices find that the first step toward AI-driven networking is actually building the GitOps-style configuration management that should have been there already.

What Real Deployments Look Like: Three Concrete Examples

Looking at specific production deployments is more useful than discussing the technology abstractly, because the gap between what the vendor demos show and what production operations actually look like is significant in this category.

Cable operators running DOCSIS 4.0 networks are among the most advanced deployments of AI-driven traffic management in consumer networking. The NCTA’s 2026 broadband report describes implementations where AI platforms continuously analyze spectrum usage across the HFC plant, identify interference patterns before they cause service degradation, and automatically adjust channel configurations to maintain performance. The operational advantage is measurable in reduced truck rolls, faster mean-time-to-repair for intermittent issues that are difficult to diagnose retrospectively, and improved spectrum utilization compared to static channel plans. Agentic AI systems handling service assurance at scale are described as a 2026 operational reality rather than a roadmap item for these operators.

Enterprise campus networks running Cisco Catalyst Center or Juniper Mist AI represent the most common production AIOps deployment for organizations that are not hyperscalers. Both platforms collect telemetry from wireless and wired infrastructure, apply machine learning to establish baselines for normal behavior, and generate alerts when client experience metrics deviate from those baselines. The Juniper Mist implementation is particularly notable because it uses large language model interfaces for network troubleshooting: network engineers can describe a problem in natural language and receive an analysis of relevant telemetry, suggested root causes, and recommended actions. The system does not autonomously make configuration changes in most deployments. It compresses the diagnostic workflow significantly, reducing the time from incident report to root cause identification from hours to minutes in documented customer cases.

AI data center fabric management at hyperscale is where the most aggressive autonomy exists, and also where it is hardest to get external visibility into what is actually running. Amazon, Google, and Microsoft all operate network management systems that make autonomous decisions about traffic routing, capacity allocation, and failure response at a scale where human reaction time would be inadequate. The 100,000-node Kubernetes clusters discussed in CyberDevHub’s KubeCon article earlier this week depend on network fabrics that manage themselves at a speed and granularity that manual operations cannot provide. What is publicly known about these systems comes primarily from academic papers published by researchers at those companies, which describe the general architecture without exposing operational details.

The Autonomy Spectrum: From Level 0 to Level 5

The networking industry has adopted a level-based framework for describing network automation maturity that is analogous to the autonomous vehicle levels used by SAE International. This framework is useful for evaluating where a specific organization or product sits on the autonomy spectrum and what the path to greater autonomy looks like.

| Level | Name | What the Human Does | What the System Does | Where Most Enterprises Are |
|---|---|---|---|---|
| 0 | Manual | Everything: monitoring, analysis, configuration, remediation | Nothing autonomous | Legacy networks, smaller organizations |
| 1 | Assisted | All decisions and actions, but with AI-generated alerts and recommendations | Monitors, detects anomalies, surfaces alerts with context | Most mid-size enterprises with basic AIOps tooling |
| 2 | Partial Automation | Approves automated actions, handles exceptions and complex changes | Executes defined remediation playbooks for known failure patterns | Advanced enterprises, managed service providers |
| 3 | Conditional Automation | Monitors system behavior, intervenes when autonomy limits are reached | Handles most routine operations including complex multi-step remediations | Leading enterprises, progressive service providers |
| 4 | High Automation | Sets policy and objectives; handles genuinely novel situations | Operates autonomously across most conditions, learns and adapts | Hyperscalers, most advanced telcos |
| 5 | Full Autonomy | Defines business intent; the network handles all technical implementation | Fully self-managing, self-optimizing, self-healing | Theoretical target, no production deployments at this level |

Most enterprise organizations sit between Level 1 and Level 2 as of early 2026. The organizations that have publicly described advancing to Level 3 have done so in specific network domains, typically wireless campus or WAN edge, rather than across their entire network. The jump from Level 2 to Level 3 is where the data foundation requirements become most demanding, because the system needs enough confidence in its own diagnosis to take multi-step remediation actions without human approval. The jump from Level 3 to Level 4 is where organizational trust in the system’s judgment becomes the primary constraint rather than technical capability.

Where the industry is heading: IDC projects that by 2028, more than 40% of enterprise network management tasks will be handled autonomously without human intervention, up from the current baseline where most organizations are in the 10-20% range. That projection assumes continued investment in the observability and configuration management foundations that make AI-driven operations reliable. Organizations that build those foundations now are positioned to cross the 40% threshold. Those that do not will find the gap between their operational maturity and their competitors’ widening from both sides: technology complexity keeps rising while the skilled labor needed to manage it manually becomes scarcer.

Where This Leaves Network Engineers and Organizations in 2026

The shift to AI-driven networking is not a future event. It is an ongoing transition that is already affecting job descriptions, product purchasing decisions, and competitive differentiation between organizations. The question is not whether your network will be AI-managed eventually. The question is whether you are building toward that outcome intentionally or discovering you need to catch up after falling behind.

For network engineers specifically, the most actionable near-term investment is learning one network automation framework at a practical level. Ansible for network configuration management is the most broadly applicable starting point and has the largest community of practice to learn from. Python with Netmiko or Nornir for network scripting is the natural next step. Terraform for network infrastructure as code is relevant if your organization uses it for other infrastructure. None of these require abandoning existing networking knowledge. They are additions to it that make that knowledge much more applicable to how networks are actually being managed in 2026.

For organizations evaluating AIOps tools, the evaluation criterion that matters most is telemetry coverage rather than feature lists. Ask any vendor presenting an AIOps platform to specify exactly what data it needs to function reliably and how complete that data needs to be before the AI delivers the outcomes shown in the demo. Then honestly assess what percentage of your current infrastructure can provide data at that quality. The gap between those two numbers is the real implementation timeline, and it is usually longer than the vendor’s sales cycle suggests.

For students and early-career engineers, the market signal from the compensation data is clear and consistent. Organizations are paying premiums for people who understand both networking fundamentals and automation. The path through CCNA-level fundamentals into network automation skills, Python for network scripting, and some exposure to cloud networking is a more differentiated skillset in 2026 than CCNA alone would have been in 2019. The tools for learning this path, Cisco’s DevNet training, the NetworkProgrammability.io community, and the open-source automation frameworks themselves, are widely available.

The networks of 2026 are more capable, more complex, more AI-managed, and more valuable to the organizations that depend on them than anything that existed five years ago. The engineers who understand both how they work and how to work with the systems that manage them are the ones who will be in demand for the next decade.

What level on the autonomy spectrum would you say your organization’s network sits at right now? And what is the biggest obstacle to moving higher? The answers in the comments tend to be more honest than the vendor case studies, which is why I find them more useful.

References (March 2026):

NetworkWorld: “8 Hot Networking Trends for 2026” (IDC 500+ survey, Robert Half compensation data, AIOps maturity): networkworld.com

NCTA: “Broadband in 2026: Emerging Trends in Networks and Connectivity” (autonomous AI networks, DOCSIS 4.0, agentic AI): ncta.com

Auvik: “Future of Networking Technology: Trends for 2026” (AIOps, identity-first security, TLS 1.3 dominance): auvik.com

Console Connect CTO blog: “Three Key Tech Trends Shaping Connectivity in 2026” (NaaS, transmission-on-demand, MCP): consoleconnect.com

Monogoto: “Top Connectivity Trends for 2026: What Enterprises Need to Know” (autonomous agents, NaaS, zero trust at network layer): monogoto.io

INE: “Top 5 Networking Trends of 2026” (skills gap, cross-trained teams): ine.com

BCC Research: Data center networking market $46B (2025) to $103B (2030): via NetworkWorld February 2026

Advantage CG: “Top Network Trends Shaping Global Enterprises in 2026” (LEO satellite, NaaS enterprise adoption): advantagecg.com

For thirty years, networks broke and engineers fixed them.

In 2026, the networks are starting to fix themselves. The engineers who understand why are the ones still needed.
