Table of Contents

- The Zoom Call Where Every Person on Screen Was Fake

- What Changed: Real-Time Is the New Threat

- How Easy It Is to Build a Deepfake of Your CEO Right Now

- The Numbers: $200M in Q1 2025, 400 Companies Per Day, 1,740% Growth

- The Ferrari CEO Call That Almost Worked

- Why Finance Teams Are the Primary Target

- The Detection Problem: Humans Spot Deepfakes Only 24.5% of the Time

- Deepfake-as-a-Service: Anyone Can Do This for $20 a Month

- What Actually Works: The Defenses That Stop Real Attacks

- The Uncomfortable Truth About Where This Is Going

The Zoom Call Where Every Person on Screen Was Fake

In March 2025, a finance director at a multinational company in Singapore joined what appeared to be a routine video call with senior leadership. The CFO was there. Several other executives appeared on screen. Everyone looked right. Everyone sounded right. The finance director listened to the urgent request for a $499,000 fund transfer to facilitate a confidential acquisition and authorized it.

Not a single person on that call was real. Every face was a deepfake. Every voice was AI-generated. The entire meeting had been fabricated using publicly available footage and audio of the actual executives. By the time the company discovered the fraud, the money was gone.

This was not a one-off incident. A year earlier, in February 2024, a finance worker at Arup, the global engineering firm, authorized 15 separate wire transfers totaling $25.5 million after a similar fabricated video call. The attackers had used publicly available footage of the CFO and colleagues to construct an entire fake meeting that lasted long enough for the employee to authorize the transfers. Arup lost more money to a fake video call than most companies spend on their entire security budget in a year.

The pattern has since become a category. Business email compromise, the attack where criminals impersonate executives to request wire transfers, used to rely on convincing emails. It now relies on convincing video calls where the executive appears in person, in real time, responding naturally to whatever the victim says. The entire category of “verify by video call” as a security control has been effectively neutralized.

What Changed: Real-Time Is the New Threat

Until about 18 months ago, deepfakes required significant preparation time. An attacker needed to collect footage, process it through a model, render the output, and send it as a pre-recorded video. That pipeline took hours to days. The resulting video was used in one specific way and could not adapt to unexpected questions or follow-up requests. The threat was real but constrained by the production timeline.

That constraint is gone. Real-time deepfakes let attackers impersonate individuals during live interactions, adapting on the fly to whatever the victim says. Investigative reporting from 404 Media, confirmed by multiple cybersecurity firms, revealed that scammers are now using tools like DeepFaceLive, Magicam, and Amigo AI to alter their face, voice, gender, and even accent during live video calls. A fraudster can match their manipulated appearance to a government-issued ID in real time, respond naturally to questions, and maintain the deception across an extended conversation.

Veriff’s 2025 Identity Fraud Report documented a 46 percent year-over-year increase in adversary-in-the-middle attacks, many of them real-time deepfakes. The attacks are no longer relying on rehearsed scripts. Attackers are improvising, adapting, and responding to whatever the victim asks, maintaining the illusion through dynamic generation rather than pre-rendered footage. That shift from pre-recorded to real-time is the specific development that moved deepfake CEO fraud from a notable attack category to a persistent, daily operational threat for large organizations.

How Easy It Is to Build a Deepfake of Your CEO Right Now

The barrier to entry for deepfake attacks has effectively collapsed. Understanding how low it now is explains why the attack frequency numbers have grown at the rate they have.

For voice cloning, modern AI tools require as little as three seconds of clear audio to create a voice clone with an 85 percent match to the original speaker’s characteristics. Higher-quality clones that capture subtle vocal patterns, including accent, speech cadence, and emotional tone, require 10 to 30 seconds of audio. The CEO of any publicly traded company has hours of earnings call recordings, conference presentations, and interview footage publicly available. Executives at private companies who appear on LinkedIn, YouTube, or in press coverage provide sufficient source material too. A public company’s investor relations page is an attacker’s resource library.

For video deepfakes, modern tools need footage of the target from multiple angles and in different lighting conditions, but company websites, conference recordings, news appearances, and social media profiles routinely provide this. The AI analyzes facial geometry and movement patterns from the available footage and generates synthetic video with synchronized lip movements and natural expressions. What required a specialized machine learning engineer two years ago is now available through point-and-click interfaces at subscription prices comparable to Netflix.

Deepfake-as-a-Service platforms, discussed in more detail below, have turned this into a commercial ecosystem. Technical skill is no longer a limiting factor. Motivation and a target are sufficient.

The Numbers: $200M in Q1 2025, 400 Companies Per Day, 1,740% Growth

The scale of deepfake-enabled CEO fraud in 2025 and 2026 is large enough that each figure below is tied to a named source rather than presented as a general alarming statistic.

Financial losses from deepfake-enabled fraud exceeded $200 million in North America in the first quarter of 2025 alone, according to data cited by the World Economic Forum and confirmed across multiple security research sources. Deloitte and Cyble project that AI-enabled fraud losses will reach $40 billion in the United States by 2027, growing at a 32 percent compound annual rate from the $12.3 billion baseline in 2023.
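The compounding behind that projection can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes a constant 32 percent annual rate applied to the $12.3 billion baseline, and the function names are hypothetical. It runs the projection for both three and four years of compounding, because the result is sensitive to which year the baseline belongs to.

```python
def project(baseline: float, annual_rate: float, years: int) -> float:
    """Compound a baseline forward at a constant annual growth rate."""
    return baseline * (1 + annual_rate) ** years


def implied_rate(start: float, end: float, years: int) -> float:
    """Constant annual rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


# Figures in billions of US dollars; 12.3 and 0.32 come from the projection above.
for years in (3, 4):
    value = project(12.3, 0.32, years)
    print(f"{years} years at 32%: ${value:.1f}B")
```

Four years of compounding gives roughly $37.3 billion, close to the $40 billion headline figure. Three years gives about $28.3 billion, which is why the baseline year matters when quoting the growth rate.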

CEO fraud deepfake attacks now target at least 400 companies per day according to Security.org’s analysis of available incident data. That figure represents confirmed targeting events, not successful fraud. Many attacks are detected or fail to result in losses. But the volume represents a sustained, industrialized attack operation running continuously against corporate targets, not a collection of sophisticated one-off incidents by skilled individual attackers.

The growth trajectory is the most alarming data point. Deepfake fraud incidents increased tenfold between 2022 and 2023, according to Sumsub. North America specifically saw a 1,740 percent increase in deepfake fraud between 2022 and 2023. The Asia-Pacific region saw a 1,530 percent increase in the same period. In Q2 2025, Resemble AI tracked 487 discrete deepfake incidents, representing a 317 percent increase compared to earlier in 2025 and a 1,500 percent increase since 2023. The volume is not plateauing. It is still accelerating.
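Percentage increases this large are easy to misread, so it can help to convert them into multipliers. The helper below is a hypothetical illustration, not part of any cited report: an increase of p percent means the new level is 1 + p/100 times the old one, so a "tenfold" rise corresponds to a 900 percent increase.

```python
def multiplier_from_percent_increase(percent_increase: float) -> float:
    """Convert a percent increase into a 'times the original level' multiplier."""
    return 1 + percent_increase / 100


# Regional figures quoted above for 2022 to 2023.
for region, pct in [("North America", 1740), ("Asia-Pacific", 1530)]:
    times = multiplier_from_percent_increase(pct)
    print(f"{region}: {times:.1f}x the 2022 level")
```

On these figures, North America ended 2023 at about 18.4 times its 2022 level and Asia-Pacific at about 16.3 times.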

An awareness gap makes this worse. According to Programs.com 2026 data, 25 percent of executives have little or no familiarity with deepfake technology. According to Eftsure, 32 percent of company leaders have no confidence that their employees could recognize a deepfake fraud attempt. Only 13 percent of companies have any anti-deepfake protocols in place. The attacks are scaling faster than organizational awareness of them.

The Ferrari CEO Call That Almost Worked

Not every documented attack succeeded, and the ones that failed provide the most useful defense intelligence. In one of the most reported near-miss cases, fraudsters attempted to impersonate Ferrari CEO Benedetto Vigna through an AI voice clone that accurately replicated his distinctive southern Italian accent. The attack targeted a senior Ferrari executive through a phone call. The voice sounded right. The accent was convincing. The request was framed around a confidential acquisition requiring urgent financial authorization, the same framing used in the Arup and Singapore attacks.

The call was terminated before any money moved because the Ferrari executive asked the caller a personal question that only Vigna would know the answer to. The attacker could not respond correctly and the fraud collapsed. That detail is both encouraging and sobering. Encouraging because the control worked. Sobering because the only thing between Ferrari and a significant financial loss was one executive’s decision to ask a personal verification question, not a formal security protocol.

Similar attempts have been documented against WPP CEO Mark Read and executives at multiple other major companies across industries. The attackers are systematically working down the list of prominent executives whose voice and video are publicly available, testing which organizations have verification protocols in place and which do not. The organizations without protocols are the ones losing money.

Why Finance Teams Are the Primary Target

Deepfake CEO fraud overwhelmingly targets finance teams rather than other departments, and the reason is not complicated. Finance teams can move money directly. They have the authority to approve wire transfers and payments. They handle urgent transactions regularly, which means an unusual-seeming request for speed is not inherently suspicious in their workflow context. And they are specifically trained to respond to executive requests efficiently, which attackers exploit as a social dynamic.

The attack chain that produced the $25.5 million Arup loss and the $499,000 Singapore loss followed the same pattern: an urgent request from an executive-level figure, framed around a confidential acquisition or time-sensitive deal, communicated through a channel the target believed was secure (video call), requesting a transfer that was large but within the authority of the target to approve. Every element of that pattern is specifically calibrated to bypass the mental checks that would normally slow down a financial authorization.

The “verify by video call” convention, which finance teams widely adopted as a check against email impersonation, has become a vulnerability rather than a protection. Employees who ask to verify by video call before authorizing an unusual request are now being given a deepfake video call as the “verification.” The control was designed for a world where video could be trusted. It is being used in a world where video cannot. Organizations that have not updated their verification protocols to account for real-time deepfakes are running a security control that creates false confidence rather than actual protection.

The Detection Problem: Humans Spot Deepfakes Only 24.5% of the Time

Human detection of deepfakes is worse than most people believe, and the data on this is consistent enough across multiple studies to take seriously as a baseline assumption rather than a pessimistic edge case.

High-quality deepfake videos are correctly identified as fake by human viewers only 24.5 percent of the time according to research cited by both Keepnet and Eftsure. That means human judgment fails roughly three out of four times when evaluating a high-quality fake. For audio deepfakes, a University of Florida study found participants reported 73 percent accuracy in identifying fake voices but were fooled significantly more often in practice. A 2025 iProov study found that only 0.1 percent of participants correctly identified all fake and real media shown to them across a test set.

The self-assessment problem compounds the detection problem. Around 60 percent of people believe they could successfully spot a deepfake video according to survey data. The actual detection rate of 24.5 percent means confidence is dramatically higher than ability. Overconfidence is itself a risk: someone who is sure they can spot a fake is likely to apply less scrutiny during a call.

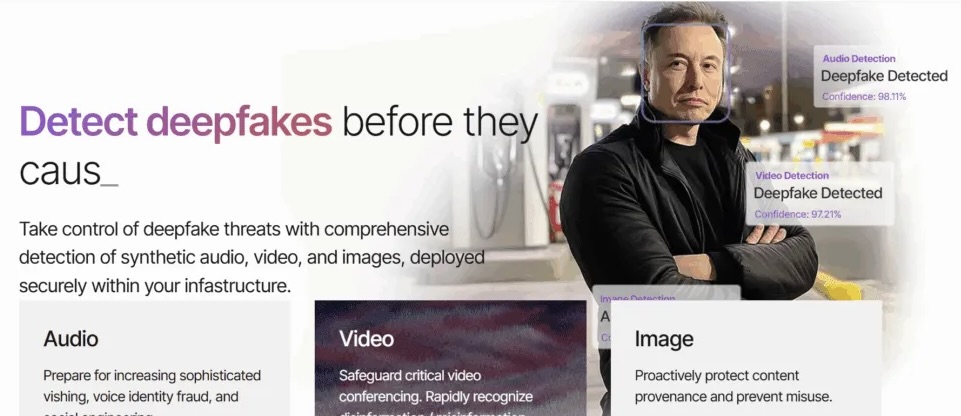

AI detection tools are improving but face their own limitations. The effectiveness of defensive AI detection tools drops by 45 to 50 percent when applied to real-world deepfakes outside controlled lab conditions, according to data from Keepnet. The detection market is growing at 28 to 42 percent annually, but the attack quality is improving in parallel. Gartner predicts that by 2026, 30 percent of enterprises will no longer consider standalone identity verification and authentication solutions reliable in isolation, specifically because of deepfake capabilities.

This does not mean detection is useless. It means detection cannot be the primary or sole defense. Organizations that rely on “someone will notice” as their deepfake defense are building on an assumption the data does not support.

Deepfake-as-a-Service: Anyone Can Do This for $20 a Month

The Deepfake-as-a-Service category emerged at commercial scale in 2025 and represents one of the most significant changes in the threat landscape. Before DaaS, executing a convincing deepfake CEO fraud attack required either significant technical skill or access to skilled developers. That friction kept the attack category relatively contained to organized crime groups and nation-state actors with resources to invest in capability development.

DaaS platforms offer ready-to-use tools for voice cloning, video face-swapping, and real-time persona simulation at subscription prices accessible to essentially any motivated attacker. The Singapore attack where fraudsters impersonated executives in a video call was attributed to a DaaS-equipped operation by Cyble’s Executive Threat Monitoring report. The technical barrier that previously separated sophisticated attackers from unsophisticated ones no longer exists in this attack category.

Open-source tools on GitHub, including DeepFaceLab and Avatarify, are freely available. Paid DaaS platforms offer polished interfaces, customer support, and iterative improvement that makes producing convincing output accessible to users with no technical background. Dark web forums have documented increasing conversation about using these tools specifically for identity verification bypass and corporate fraud since at least 2024. The commercial ecosystem around deepfake attacks is mature, competitive, and growing.

What Actually Works: The Defenses That Stop Real Attacks

The Ferrari near-miss is the most useful defense case study because it shows what stopped the attack: a personal verification question the attacker could not answer. That is a low-tech control that works specifically because it requires knowledge that is not publicly available. The attacker had access to everything about Vigna that was publicly documented. They did not have access to whatever personal reference the executive used to verify identity.

Pre-arranged code words for financial authorization requests are the most consistently recommended procedural control across security researchers and practitioners who study this attack category. Before deepfake fraud became a category, code words were considered an overly cautious measure. They are now standard in organizations that have taken the threat seriously. A code word system works as follows: any executive-originated request for financial authorization above a certain threshold requires the requesting party to provide a pre-arranged code word that was established out-of-band before any request was made. An attacker who clones a CEO’s voice and video perfectly still cannot provide a code word they do not know.
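The code word check described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: the function names, the iteration count, and the normalization rule are all hypothetical. It stores only a salted hash of the code word and uses Python's standard `hashlib.pbkdf2_hmac` and `hmac.compare_digest` for the comparison.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

# Hypothetical work factor for this sketch; tune it to your own policy.
PBKDF2_ITERATIONS = 200_000


def _normalize(code_word: str) -> bytes:
    # Ignore case and surrounding whitespace so a spoken word can be typed loosely.
    return code_word.strip().lower().encode("utf-8")


def enroll_code_word(code_word: str) -> Tuple[bytes, bytes]:
    """Return (salt, digest) to store; the plaintext code word is never stored."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", _normalize(code_word), salt, PBKDF2_ITERATIONS)
    return salt, digest


def verify_code_word(candidate: str, salt: bytes, stored_digest: bytes) -> bool:
    """Check a supplied code word against the enrolled digest."""
    digest = hashlib.pbkdf2_hmac("sha256", _normalize(candidate), salt, PBKDF2_ITERATIONS)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(digest, stored_digest)


def may_proceed(amount: float, threshold: float, candidate: Optional[str],
                salt: bytes, stored_digest: bytes) -> bool:
    """Requests above the threshold proceed only with a correct code word."""
    if amount <= threshold:
        return True
    return candidate is not None and verify_code_word(candidate, salt, stored_digest)
```

The important property is outside the code: the code word has to be enrolled in person or through another trusted channel before any request arrives, and never shared over the channel being verified.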

Callback verification using a known, pre-registered number is the second procedural control. If you receive a request through any channel, including video call, you hang up and call back using a number you have independently verified as belonging to that person, not a number provided in the suspicious communication. This breaks the social engineering chain regardless of how convincing the deepfake is, because the callback goes to the real person’s actual device.
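The key rule in callback verification is that the number comes from your own records, never from the request. A minimal sketch of that rule follows; the directory contents, field names, and `callback_number_for` helper are hypothetical placeholders for whatever HR or identity system actually holds pre-registered numbers.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of numbers registered in advance, out-of-band.
# In practice this would live in an HR or identity system, not in code.
REGISTERED_NUMBERS = {
    "ceo@example.com": "+1-555-0100",
    "cfo@example.com": "+1-555-0101",
}


@dataclass(frozen=True)
class PaymentRequest:
    claimed_sender: str                            # identity the requester claims
    channel: str                                   # e.g. "video_call", "email"
    callback_number_offered: Optional[str] = None  # supplied by the requester


def callback_number_for(request: PaymentRequest) -> Optional[str]:
    """Return the number to call back, or None if no trusted number exists.

    The number offered inside the request is deliberately ignored, because
    it is controlled by whoever sent the request.
    """
    return REGISTERED_NUMBERS.get(request.claimed_sender.strip().lower())
```

If the lookup returns `None`, the safe default is to refuse the request until a trusted number has been established some other way.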

Multi-person authorization requirements for large transfers address the risk that a single employee can be manipulated. If any wire transfer above a threshold requires approval from two separate individuals who must both independently verify the request, a single-target deepfake attack cannot succeed without simultaneously compromising two separate employees. That is a meaningfully harder attack to execute.
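A two-approver rule is simple to express in code. The sketch below is an assumption-laden illustration: the threshold value, class name, and field names are hypothetical. It counts distinct approvers, so the same person approving twice does not satisfy the rule, and it blocks the requester from approving their own transfer.

```python
from dataclasses import dataclass, field

# Hypothetical threshold for this sketch.
DUAL_APPROVAL_THRESHOLD = 10_000.00


@dataclass
class WireTransfer:
    amount: float
    requested_by: str
    # Distinct approvers who have each verified the request independently.
    approvals: set = field(default_factory=set)

    def approve(self, approver: str) -> None:
        if approver == self.requested_by:
            raise ValueError("the requester cannot approve their own transfer")
        self.approvals.add(approver)  # a set ignores repeat approvals

    def is_authorized(self) -> bool:
        required = 2 if self.amount > DUAL_APPROVAL_THRESHOLD else 1
        return len(self.approvals) >= required
```

The code can only count approvals. The control is effective only if each approver verifies the request through their own channel rather than relying on the other's judgment.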

Technical controls that complement these procedural ones include liveness detection systems that specifically test for deepfake indicators during video calls, voice analysis tools that flag AI-generated speech characteristics, and anomaly detection that flags behavioral inconsistencies such as requests made outside normal business hours or through unusual channels. These technical controls are valuable but the data on detection rates above suggests they should be layered with procedural controls rather than treated as standalone solutions.
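The behavioral checks mentioned above, requests outside business hours or through unusual channels, can start as plain rules before any machine learning is involved. The following sketch assumes hypothetical business hours and channel names; it only flags a request for extra verification and does not block anything on its own.

```python
from datetime import datetime, time

# Hypothetical policy values for this sketch.
BUSINESS_START = time(9, 0)
BUSINESS_END = time(17, 30)
USUAL_CHANNELS = {"payment_portal", "erp_workflow"}


def anomaly_flags(received_at: datetime, channel: str) -> list:
    """Return the reasons a payment request deserves extra verification."""
    flags = []
    is_weekend = received_at.weekday() >= 5  # Monday is 0, Saturday is 5
    in_hours = BUSINESS_START <= received_at.time() <= BUSINESS_END
    if is_weekend or not in_hours:
        flags.append("outside_business_hours")
    if channel not in USUAL_CHANNELS:
        flags.append("unusual_channel")
    return flags
```

A request that arrives on a Saturday night over a video call would return both flags, which is a reasonable trigger for the code word and callback steps described earlier.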

Employee training specifically on deepfake awareness is the foundational control that makes all the others more effective. The 25 percent of executives with little or no familiarity with deepfake technology are the most vulnerable targets. An executive who has never heard of real-time deepfake video calls will not think to ask a personal verification question when they receive one. Training does not need to be technical. It needs to convey one message clearly: video calls can no longer be trusted as verification, and any financial request requires out-of-band confirmation through a separate channel and a pre-arranged code.

The Uncomfortable Truth About Where This Is Going

Deepfake CEO fraud is not a problem that is going to be solved by better detection technology, at least not in any near-term timeframe that organizations can plan around. The attack quality and the detection quality are both improving, but the attack is improving faster and has the structural advantage that it only needs to succeed once while defenses need to succeed every time.

The organizations that have avoided losses are not the ones with the most sophisticated AI detection tools. They are the ones that implemented low-tech procedural controls: code words, callback verification, multi-person authorization. These controls work specifically because they do not depend on detecting whether something is a deepfake. They require out-of-band knowledge that no amount of voice cloning or face replication can provide. The attacker can sound exactly like the CEO, look exactly like the CEO, and respond naturally to every question. They still cannot provide the code word established last Tuesday in person.

Deloitte’s projection of $40 billion in AI-enabled fraud losses by 2027 implies the problem continues to scale over the next two years regardless of what the security industry does to counter it. That projection is based on current growth rates, not a worst-case scenario. The organizations that implement procedural verification controls now are insulating themselves from losses that competitors without those controls will absorb.

Fraud losses from generative AI are projected to grow from $12.3 billion in 2023 to $40 billion by 2027 at a 32 percent annual growth rate. The math on that trajectory is simple and the implication is direct: every year this problem is not addressed at the procedural level, the expected cost of not addressing it grows by roughly a third. The call your CFO receives next month may sound exactly like your CEO. It may look exactly like your CEO. The only question is whether your organization has a way to verify that is not fooled by a perfect imitation.

Does your organization have a pre-arranged code word system for financial authorization requests? Drop your current verification approach in the comments. The range of what organizations have and have not implemented is genuinely wide, and hearing what is actually in place is more useful than any vendor survey.

References (March 21, 2026):

World Economic Forum: “Detecting dangerous AI is essential in the deepfake era” ($200M Q1 2025, Arup $25.5M, Ferrari/Vigna near-miss, WPP/Mark Read): weforum.org

Keepnet Labs: “Deepfake Statistics and Trends 2026” (400 companies/day, 24.5% human detection, 1,740% NA growth, $200M Q1 2025): keepnetlabs.com

Brightside AI: “Deepfake CEO Fraud: $50M Voice Cloning Threat to CFOs” (Singapore $499K case, 3-second voice clone, finance team targeting): brside.com

Veriff: “Real-time deepfake fraud in 2025” (46% YoY adversary-in-middle increase, DeepFaceLive/Magicam/Amigo AI tools, real-time impersonation): veriff.com

Cyble: “Deepfake-as-a-Service Exploded in 2025” (30% of high-impact corporate impersonation attacks involve deepfakes, Singapore DaaS case): cyble.com

Programs.com: “The Latest Deepfake Facts and Statistics 2026” (25% executives unfamiliar, 317% Q2 2025 increase, 1,500% since 2023): programs.com

Eftsure: “Deepfake Statistics 2025: 25 New Facts for CFOs” ($680K average enterprise loss, 32% leaders no confidence in employee detection): eftsure.com

Deepstrike: “Deepfake Statistics 2025” (voice cloning 680% rise, 3,000% surge, 704% IDV bypass): deepstrike.io

Deloitte and Cyble (AI fraud $12.3B 2023 to $40B 2027 at 32% CAGR): cited across multiple security research publications

Gartner (30% of enterprises will not consider standalone IDV reliable by 2026): Gartner security research 2025

Your CEO’s voice can be cloned in three seconds. Their face in 45 minutes.

The only defense that reliably works does not involve detecting the fake. It involves verifying through a channel the fake cannot access.
