Sources: Nvidia Newsroom, CNBC, Tom’s Hardware, TechCrunch, VideoCardz as of March 15, 2026. GTC 2026 keynote is scheduled for March 16. This article covers confirmed Vera Rubin facts and what to watch for tomorrow.

Table of Contents

- The Biggest Nvidia Event of the Year Is Tomorrow

- What We Already Know: Vera Rubin Is Confirmed and In Production

- Six Chips, One Platform: What the Rubin Stack Actually Looks Like

- The Performance Numbers and What They Mean

- The Token Cost Story: Why 10x Cheaper Matters More Than 5x Faster

- Fanless, Cableless, Five-Minute Install: The Physical Design Changed Too

- Who Is Getting Vera Rubin First

- The “World-Surprising” Chip: What Jensen Huang Has Been Hinting At

- The Competition Is Closer Than It Was a Year Ago

- What to Actually Watch For in Tomorrow’s Keynote

The Biggest Nvidia Event of the Year Is Tomorrow

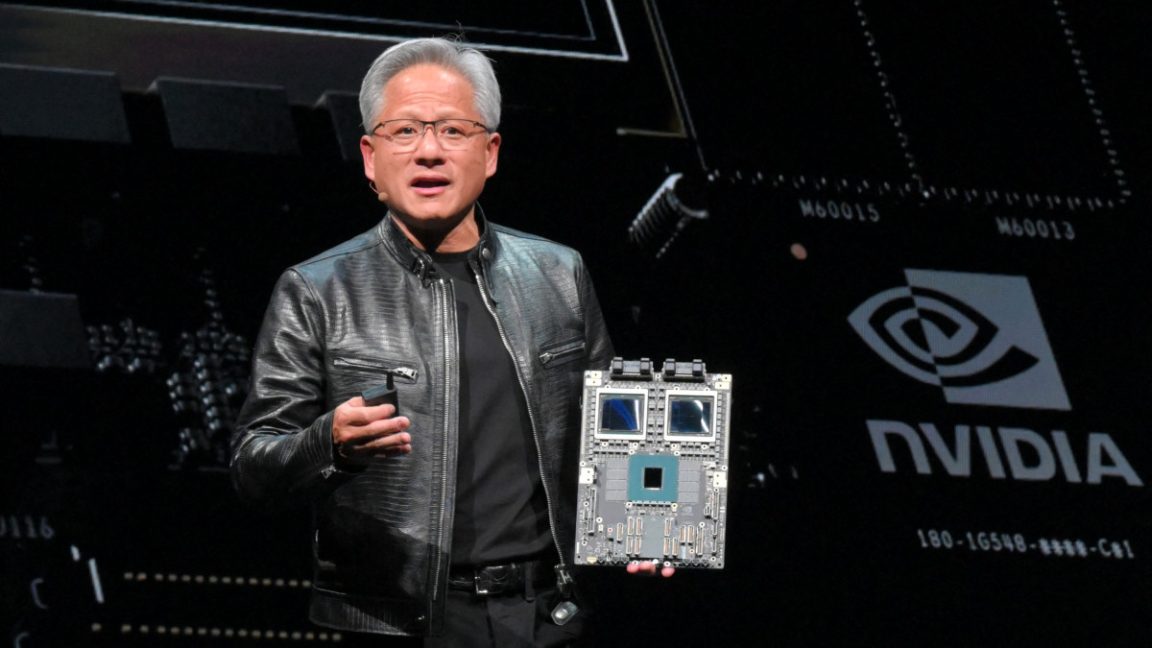

Nvidia’s GTC developer conference starts tomorrow, March 16, at the SAP Center in San Jose. Jensen Huang takes the keynote stage at 11am Pacific. If you have followed AI hardware for the past three years, you already know that GTC has quietly become one of the most consequential announcements in the technology industry, not just the chip industry. The 2023 GTC was where H100 scaling became visible to everyone. The 2025 GTC was where Vera Rubin was first unveiled. This year, Vera Rubin is in full production and Huang has been publicly teasing something he described as “a chip that will surprise the world.”

Whether that turns out to be what analysts think it is, we will know in roughly 24 hours. But there is already a significant amount of confirmed, sourced information about what is arriving, and it is worth understanding before the keynote rather than after. The announcements at these events move fast and the coverage afterward tends to either over-hype or miss the actually important details in favor of the marketing language.

This article covers what is already confirmed about the Vera Rubin platform from Nvidia’s own newsroom and independent technical reporting, what the performance numbers actually mean in practical terms, who is getting the hardware first and when, and what specifically to watch for in tomorrow’s keynote that the pre-conference coverage has mostly missed.

What We Already Know: Vera Rubin Is Confirmed and In Production

The Vera Rubin platform entered full production earlier this year. Jensen Huang confirmed this during his CES 2026 keynote in January in Las Vegas, and Nvidia’s own newsroom has published full technical details. This is not a product that is being teased for a future ship date. It is in manufacturing now, partners have received early samples, and the first commercial deployments are scheduled for the second half of 2026 at AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure, along with AI cloud providers CoreWeave, Lambda, Nebius, and Nscale.

The platform is named after Vera Florence Cooper Rubin, the American astronomer who discovered evidence for dark matter in the 1970s by observing that the outer stars of galaxies rotate at the same speed as inner ones, something that only makes sense if there is invisible mass distributed throughout the galaxy holding them in place. She spent years being largely ignored by the astronomy establishment before her work was accepted as one of the most important cosmological discoveries of the twentieth century. Nvidia has a pattern of naming platforms after scientists who made foundational contributions that were initially underappreciated. The Blackwell GPU was named after statistician David Blackwell. The Hopper GPU before it was named after computer science pioneer Grace Hopper.

The Rubin platform succeeds Blackwell, which itself represented a major leap over the H100-era Hopper architecture. To understand the scale of where Nvidia is now, it helps to know that Blackwell was already considered the fastest AI computing platform available. Vera Rubin is positioned as 5x faster on inference workloads and 3.5x faster on training compared to Blackwell. Those are the claimed improvements with roughly 1.6x the transistor count, which means the efficiency gains per transistor are substantial rather than just being a raw scaling of silicon.

Six Chips, One Platform: What the Rubin Stack Actually Looks Like

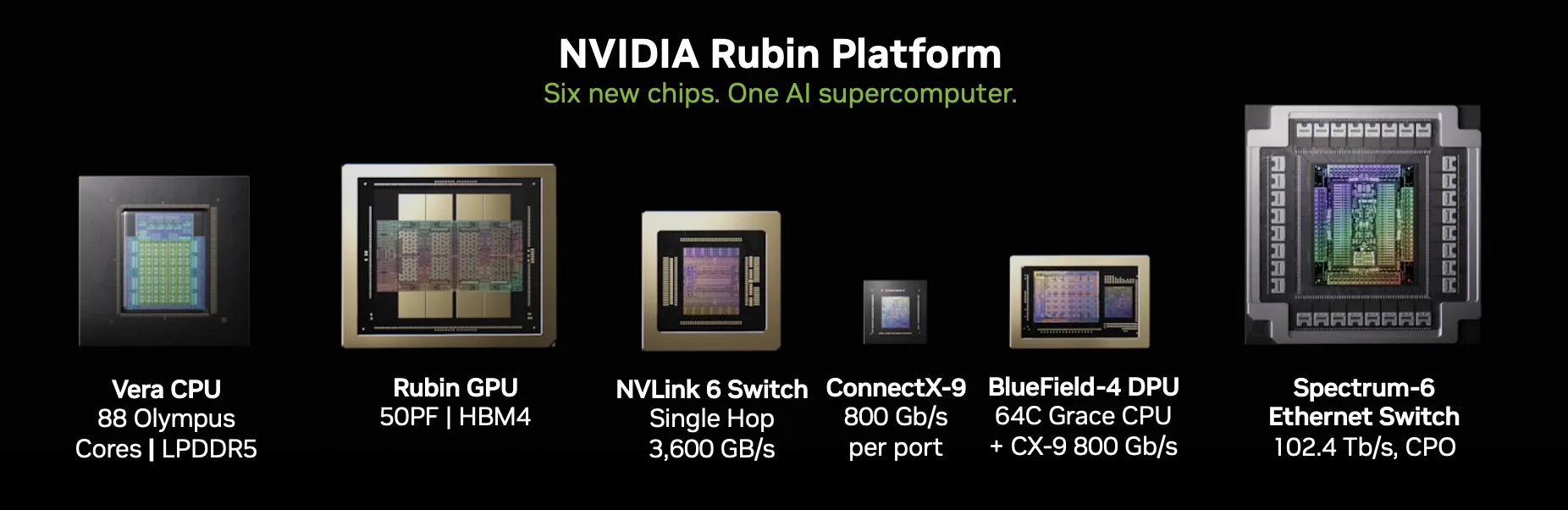

One of the things that has changed about how Nvidia describes its products is the shift from talking about individual GPUs to talking about platforms. The Vera Rubin platform is six chips designed together, not one chip sold alongside commoditized supporting hardware. Jensen Huang described this at CES as “extreme codesign,” meaning the chips were designed as an integrated system from the start rather than each team optimizing independently and then trying to make things work together afterward.

The six chips are the Vera CPU, the Rubin GPU, the NVLink 6 Switch, the ConnectX-9 SuperNIC, the BlueField-4 DPU, and the Spectrum-6 Ethernet Switch. Each of these handles a different part of the workload in a modern AI data center.

The Vera CPU is the successor to Nvidia’s Grace CPU and uses 88 Olympus cores based on Armv9 architecture. It comes with 128GB of GDDR7 memory and introduces what Nvidia calls Spatial Multi-Threading, where each thread has the full throughput of a single core rather than sharing it. Huang’s description of this at CES was that it gives the CPU the effective processing capacity of 176 cores despite having 88. The CPU story at GTC is expected to be significant because agentic AI systems, which orchestrate multiple AI models working together, are creating demand for CPU performance that the industry was not anticipating even eighteen months ago.

The Rubin GPU handles the actual neural network computation. It comes with 288GB of HBM4 memory, which is the latest generation of high-bandwidth memory, and posts 50 petaFLOPs of NVFP4 inference performance and 35 petaFLOPs of NVFP4 training performance. Those numbers are benchmarks using Nvidia’s own FP4 precision format, which is a compressed numerical representation that trades some accuracy for speed and memory efficiency.

The NVLink 6 Switch handles the interconnect between GPUs within a rack. This is the nervous system of the system, and the bandwidth it provides is what determines whether 72 GPUs in a rack can work together effectively or whether they spend most of their time waiting on data. NVLink 6 runs at 18TB/s of bandwidth between connected chips. To put that number in context: the data throughput that this switch handles per second is roughly equivalent to transmitting the entire text content of the English-language Wikipedia about 50,000 times over. The AI systems that Nvidia is building these platforms for are genuinely moving data at scales that are difficult to reason about intuitively.

The BlueField-4 DPU offloads storage, security, and networking tasks away from the CPU and GPU, freeing those chips to focus on AI computation rather than infrastructure overhead. The ConnectX-9 SuperNIC handles server networking with speeds up to 1.6 Terabits per second. The Spectrum-6 handles Ethernet switching across the data center. All of these work together as a designed system, which is the core of Nvidia’s argument that buying a full Rubin platform is different from assembling components from multiple vendors.

The Performance Numbers and What They Mean

The 5x inference improvement over Blackwell deserves some unpacking because inference is where most of the money is moving in AI right now. Training a model is a one-time or periodic expense. Inference is what happens every time someone sends a query to ChatGPT, generates an image, asks an AI assistant a question, or has a voice conversation with an AI system. As AI gets embedded into more products and more workflows, the volume of inference requests grows continuously. Microsoft, Google, Amazon, and Meta collectively serve hundreds of billions of inference requests per day, and that number grows every quarter as adoption increases.

A 5x improvement in inference performance means that the same query can be answered in one-fifth the time, or five times as many queries can be handled by the same hardware, or some combination of both. For the hyperscalers, this translates directly into either cost reduction on existing workloads or the ability to take on significantly more workload without proportionally more hardware. At the scale these companies operate, a 5x efficiency gain on inference is worth billions of dollars per year.

The 3.5x training improvement matters for a different reason. Training improvements enable researchers and companies to iterate on models faster, experiment with larger architectures, or train on more data in the same time window. With Blackwell already being used for the current generation of frontier models, Rubin’s training improvement means the next generation of models will be trained with substantially more compute available per dollar, which historically has been one of the most reliable predictors of capability improvement.

Quick fact: The Vera Rubin GPU delivers 5x inference performance over Blackwell while increasing transistor count by only 1.6x. That efficiency ratio is what Nvidia means when it talks about architectural improvements rather than just “more silicon.” Getting 5x the performance from 1.6x the transistors requires fundamental changes in how the chip processes data, not just making it bigger.

The Token Cost Story: Why 10x Cheaper Matters More Than 5x Faster

The most significant claim Jensen Huang made about Vera Rubin at CES 2026 was not the performance number. It was the cost number. He said Rubin would reduce the cost of generating AI tokens to roughly one-tenth of what it cost on the previous Blackwell generation. That claim, if it holds up in commercial deployment, is more consequential for the trajectory of the AI industry than any raw performance benchmark.

Token cost is the fundamental economic constraint on AI deployment right now. Every time you send a message to an AI assistant, every time a company uses an AI to process documents or analyze data, every automated workflow that calls a language model, these all incur token costs. Those costs are currently high enough that many useful AI applications are economically marginal or outright impractical at scale. A 10x reduction in token generation costs does not make AI incrementally more affordable. It crosses thresholds that change the economic calculation entirely for applications that are currently unviable.

The historical analogy is cloud computing economics in the early 2010s. When AWS dropped compute costs by large multiples through a combination of hardware improvements and scale, it did not just make existing applications cheaper. It made entirely new categories of applications feasible that nobody was building before because the infrastructure cost made the business math impossible. A 10x reduction in AI token costs is plausibly that kind of threshold change, where the applications that become viable are as important as the cost reduction itself.

There are meaningful caveats here. Jensen Huang’s cost claims at keynotes are typically for optimal workloads under controlled conditions. Real-world cost reductions depend on how the hardware is utilized, what precision format the model runs in, what the memory footprint of the workload is, and a range of operational factors that do not show up in benchmark numbers. The 10x claim should be understood as the potential ceiling under favorable conditions rather than a guaranteed multiplier across all deployments. But even a 3x to 5x real-world reduction in token costs, which is more conservative and probably closer to what organizations will see in production, would be a substantial shift in the economics of AI services.

Fanless, Cableless, Five-Minute Install: The Physical Design Changed Too

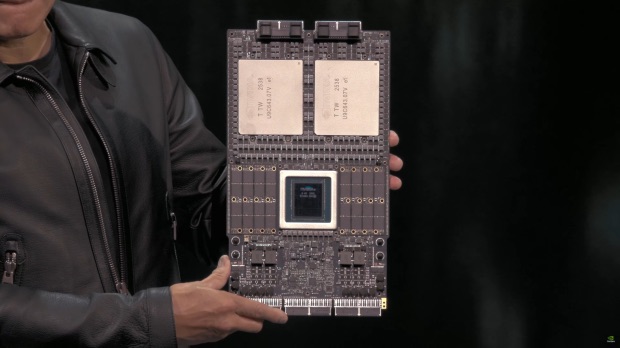

One of the less-covered details about Vera Rubin is that the physical design of the rack system changed significantly from previous generations. The Vera Rubin NVL72, which is the rack-scale system combining 72 Rubin GPUs in a single installation, is now fully liquid cooled with no fans, no tubes in the traditional sense, and no cables connecting components within the rack. The system is described as fanless, tubeless, and cableless, with a modular tray design that allows components to be swapped without the typical wiring complexity.

The practical consequence of this design is the installation time. Blackwell-based rack systems took approximately two hours to install in a data center. Vera Rubin NVL72 takes approximately five minutes. At the scale that hyperscalers are deploying these systems, counting thousands of racks across dozens of data centers, that difference in installation time is operationally significant. It is also relevant for maintenance and upgrades, where faster component swaps reduce downtime on expensive hardware.

The move to 100 percent liquid cooling is driven by heat density. As chips become more powerful they generate more heat per unit area. Air cooling becomes increasingly impractical when heat density exceeds certain thresholds, and Rubin’s performance levels would require impractically large and loud air cooling systems if that approach were maintained. Liquid cooling addresses this by directly removing heat from chip surfaces with far greater efficiency than moving air, but it requires different data center infrastructure than traditional air-cooled facilities. This is one of the reasons data center construction spending has been accelerating so dramatically alongside AI hardware investment. The physical infrastructure that supports these systems is being rebuilt from the ground up, not just upgraded.

Who Is Getting Vera Rubin First

Nvidia has confirmed that the first commercial Vera Rubin deployments will happen in the second half of 2026 across a specific list of cloud providers. On the hyperscaler side: AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure. On the AI-specialized cloud side: CoreWeave, Lambda, Nebius, and Nscale.

Microsoft’s deployment is notable because Nvidia specifically mentioned that Azure will deploy Vera Rubin NVL72 rack-scale systems at future Fairwater AI superfactory sites. Fairwater is Microsoft’s term for the largest-scale AI data center facilities in its expansion plan. The tightly optimized platform language from both companies suggests Microsoft and Nvidia have worked on the specific configuration that Azure will use, rather than Microsoft just ordering off a standard product sheet.

CoreWeave’s inclusion is strategically interesting. CoreWeave is the neocloud provider that has become one of the most important infrastructure players in AI by focusing specifically on GPU-dense computing for AI workloads. Nvidia also made a $2 billion investment in Nebius, one of the other launch partners, taking an 8.3 percent stake. Nvidia financing parts of the customer ecosystem that will deploy its hardware is a relatively new behavior that speaks to how seriously the company is treating the neocloud layer as a growth market.

For developers and organizations that want to use Vera Rubin without running their own data center infrastructure, the cloud provider list gives a rough picture of where access will be available. The specific instance types, pricing, and availability windows for each provider have not been announced yet and are likely to be part of what gets discussed at GTC 2026 over the next four days.

The “World-Surprising” Chip: What Jensen Huang Has Been Hinting At

Huang made a comment in an interview with South Korea’s Korea Economic Daily before GTC that he would be revealing “a chip that will surprise the world” at the event. He added that Nvidia has “a few new chips the world has never seen before.” Nvidia has not officially identified what these are, and any claim about specific unannounced products before the keynote would be speculation.

What is publicly known: analysts and industry reporters have focused on two possibilities. The first is a new inference-focused accelerator chip, possibly related to Nvidia’s reported $20 billion technology licensing deal with Groq. Groq built a chip architecture specifically optimized for ultra-low-latency inference, which is different from Nvidia’s strength in high-throughput training and batch inference. If the Groq technology is being integrated into a new Nvidia product, GTC is the logical venue to reveal it.

The second possibility that analysts have discussed is an early reveal of the Feynman architecture, the platform generation after Rubin that multiple industry sources have described as designed specifically for the reasoning and long-term memory requirements of agentic AI systems. Whether Feynman is at a stage where it would be revealed publicly at GTC 2026 is unknown. Nvidia revealed Vera Rubin at GTC 2025 when it was still a year-plus away from production, so a similar reveal of Feynman timing is consistent with how they have managed their roadmap communications.

What I will say is that “world-surprising” is a high bar for a company that has already shipped 5x performance improvements and 10x token cost reductions. Either Huang is engaging in his characteristic showmanship and the actual announcement is impressive but not literally surprising, or there is something genuinely different coming that goes beyond the expected Rubin-and-roadmap narrative. The answer is roughly 18 hours away at this point.

The Competition Is Closer Than It Was a Year Ago

Any honest assessment of where Nvidia stands going into GTC 2026 has to acknowledge that the competitive landscape has shifted. Nvidia still commands an estimated 70 to 80 percent share of the AI accelerator market and its technology lead remains real. But the distance between Nvidia and the alternatives has closed relative to where it was during the H100 era.

AMD’s CDNA 4-based MI400 series is genuinely competitive on certain training workloads in a way that AMD’s previous attempts were not. Google’s TPU v6 continues to improve and Google has been talking to other cloud providers including Meta about licensing access to its custom silicon. Amazon’s Trainium 2 is deployed at scale within AWS and is being used for training workloads that previously would have defaulted to Nvidia GPUs. Anthropic, which is backed by both Google and Amazon, has confirmed that its Claude models run on Google TPUs and Amazon Trainium, not exclusively on Nvidia hardware.

None of this means Nvidia is about to lose its dominant position. The software ecosystem around CUDA, which is Nvidia’s programming model for GPU computing, represents a decade-plus of accumulated developer tools, libraries, optimized kernels, and trained engineers that competitors cannot simply replicate by building competitive hardware. Most AI researchers and developers know CUDA and have workflows built around it. Switching to AMD’s ROCm platform or Google’s JAX-based TPU stack requires real effort and not all organizations have the engineering bandwidth to do it.

But the era of Nvidia having essentially no credible competition on any dimension is over. At GTC 2026, Huang is presenting to an audience that now has real alternatives and knows it. The announcements tomorrow will be evaluated not just against what Nvidia did before but against what AMD, Google, Amazon, and others are deploying right now. That competitive pressure has historically been good for the industry and it is probably part of why the GTC 2026 announcements are expected to be more aggressive than a typical roadmap update.

What to Actually Watch For in Tomorrow’s Keynote

The keynote starts at 11am Pacific on March 16 and can be watched live on Nvidia’s GTC website. It is typically two hours. Here is what is worth paying specific attention to beyond the obvious Vera Rubin product details that will be covered heavily everywhere else.

Watch how Huang frames the agentic AI story. He mentioned “agentic AI” more than a dozen times on Nvidia’s most recent earnings call and said the generation of tokens for agentic workflows is growing exponentially. The architecture decisions in Vera Rubin, particularly around the Vera CPU’s role in orchestrating agent workflows and the NVLink interconnect that lets GPUs communicate at 18TB/s, are designed with this in mind. The keynote framing of why these architectural choices matter for the specific kind of AI workloads coming next is probably more useful for understanding Nvidia’s strategic thinking than the benchmark numbers themselves.

Watch for anything about software. Nvidia’s competitive moat is not primarily its hardware anymore. It is CUDA, NIM microservices, the Omniverse platform, and the accumulation of optimized AI frameworks that make its hardware more accessible than alternatives. Any announcements about software tools, particularly ones that make it easier to run agentic AI workflows on Nvidia hardware, are worth taking seriously as competitive signals.

Watch the inference chip situation. If Huang reveals something related to the Groq technology deal or a new inference-specific chip, the framing will matter. Nvidia’s current strength is in high-throughput batch inference. If the new product addresses low-latency single-request inference, it fills a genuine gap in Nvidia’s product line that competitors have been trying to occupy.

And pay attention to what does not get mentioned. The absence of a clear Feynman reveal, or a conspicuous lack of detail on a specific product category, often tells you as much about where a company’s roadmap is actually running as the things that do get announced.

I will be following the keynote live tomorrow and will update CyberDevHub with a post-keynote breakdown once we know what the “world-surprising” chip actually is. If you have thoughts on what you think Huang is going to announce, drop them in the comments before the keynote. It is always interesting to see what the community is expecting versus what actually ships.

References (March 15, 2026, pre-keynote):

Nvidia Vera Rubin platform official announcement: nvidianews.nvidia.com

Nvidia CES 2026 keynote blog (Jensen Huang, full Rubin details): blogs.nvidia.com

Vera Rubin NVL72 specs and production confirmation: Tom’s Hardware, January 6, 2026

GTC 2026 keynote details and CPU story: CNBC, March 13, 2026: cnbc.com

“World-surprising” chip tease: VideoCardz, reporting on Korea Economic Daily interview

How to watch the GTC 2026 keynote (March 16, 11am PT): TechCrunch

Nvidia Nebius investment ($2B, 8.3% stake): Bloomberg, March 2026

Vera Rubin named after astronomer Vera Florence Cooper Rubin: Nvidia Newsroom

Jensen Huang has a pattern of delivering on the hype.

In roughly 18 hours we find out what “world-surprising” actually means this time.

Leave a Reply