Sources: OpenClaw official blog (openclaws.io), VentureBeat, Medium/Aftab, Medium/ThamizhElango, Particula.tech security analysis, Winbuzzer, AI Magicx, StartupNews.fyi, Microsoft Security blog, AWS Lightsail announcement. All facts confirmed from multiple independent sources.

Table of Contents

- One Hour of Code That Beat React’s Decade-Long GitHub Record

- What OpenClaw Actually Does That Nobody Else Was Doing

- The Naming Chaos: Clawdbot, Moltbot, OpenClaw, and a Cease-and-Desist

- The Numbers That Made the Industry Stop and Stare

- Why OpenAI Hired Its Creator and Meta Acquired the Company in the Same Month

- Why China Is Telling Its Developers to “Raise a Lobster”

- The Security Crisis: 512 Vulnerabilities, 824 Malicious Skills, One Very Bad Week

- Microsoft’s Warning: Do Not Run This on Your Work Computer

- The Bigger Picture: What OpenClaw Tells Us About Where AI Is Actually Going

- Should You Use It? An Honest Assessment

One Hour of Code That Beat React’s Decade-Long GitHub Record

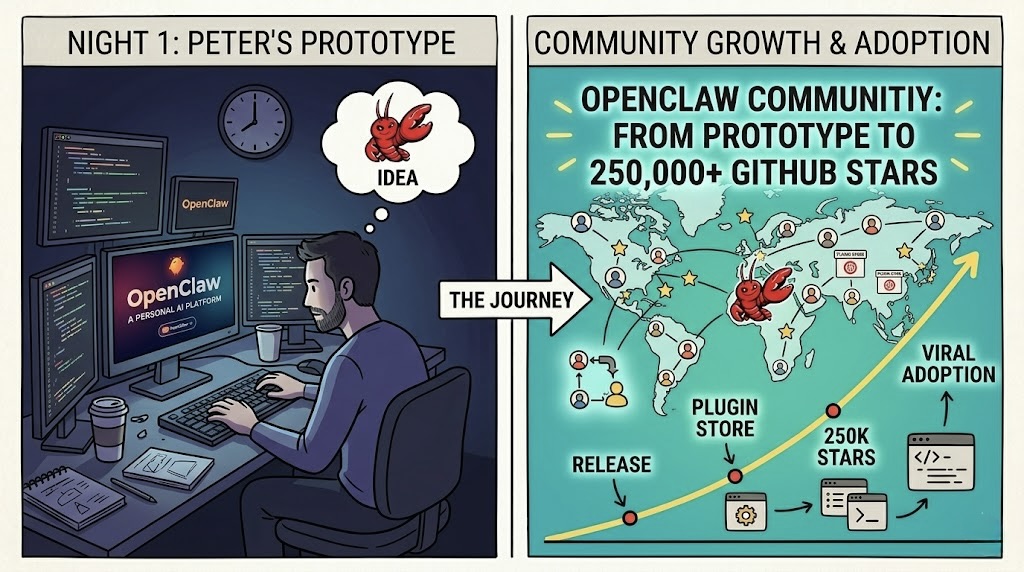

In November 2025, an Austrian software developer named Peter Steinberger sat down and spent roughly one hour building something he personally wanted: a local AI agent he could talk to through WhatsApp. Not a chatbot. Not another web interface. An autonomous agent that could receive a message, decide what tools to use, execute multi-step tasks across the internet, and report back through the messaging app he already used every day. He looked for something like this. It did not exist. So he built it.

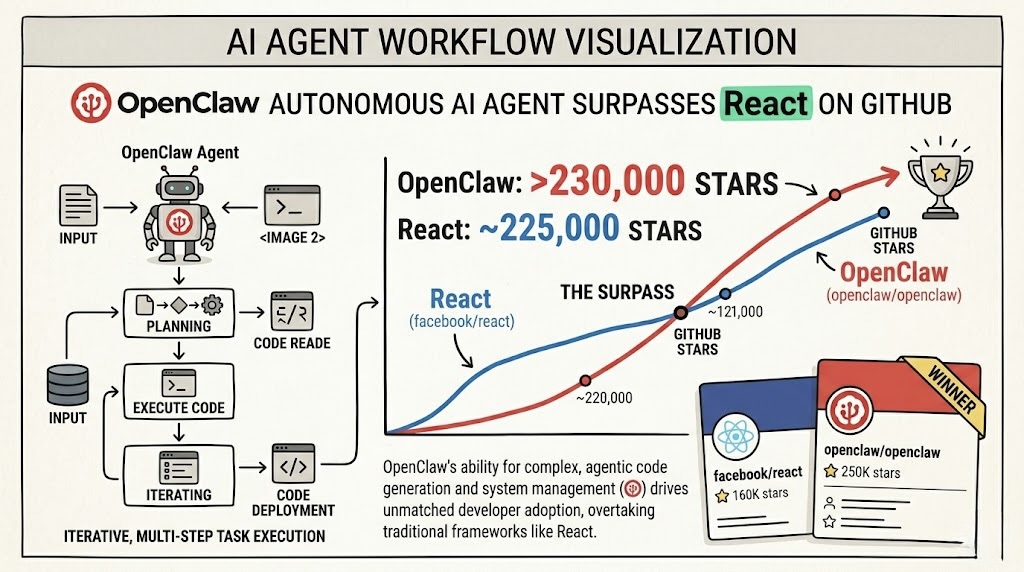

He pushed it to GitHub under the name Clawdbot, described it to a few friends, and did not think much more about it. Roughly three months later, on March 3, 2026, the project crossed 250,829 GitHub stars. That surpassed React, the JavaScript framework that powers much of the modern web and had spent more than a decade accumulating its star count. OpenClaw, as it had been renamed by then, became the fastest-growing open-source project in GitHub history. The Linux kernel accumulated its 195,000 stars over more than 30 years. OpenClaw passed that total in about 60 days.

Sam Altman called Steinberger “a genius with a lot of amazing ideas about the future of very smart agents” and hired him at OpenAI. Meta separately acquired Moltbook, the commercial company built around OpenClaw, in a deal reported at around $2 billion. Bitdefender then disclosed that 20 percent of the skills available in OpenClaw’s marketplace were malicious. Microsoft published a blog post saying the software should be treated as “untrusted code execution” and explicitly told users not to run it on their work computers. And across China, deploying an OpenClaw agent became known as “raising a lobster.”

It has been quite a few months.

What OpenClaw Actually Does That Nobody Else Was Doing

The concept of autonomous AI agents is not new. AutoGPT went viral in 2023 for roughly the same reason: here is an AI that does things rather than just answering questions. AutoGPT largely faded because the execution fell short of the concept. The agents ran too slowly, made too many mistakes, and required too much prompt engineering to do anything reliably useful.

OpenClaw is different in a specific way that explains the adoption. Steinberger’s insight was to route the agent through messaging platforms that people already live in. WhatsApp, Telegram, Slack, Discord, iMessage. Instead of opening a separate app or web interface to interact with your AI agent, you text it. The same way you text a friend. The agent is just always there in your existing messaging infrastructure, available to receive tasks whenever you think of them.

The technical implementation combines tool access with sandboxed code execution, persistent memory across conversations, and a skills system where additional capabilities can be installed from a marketplace called ClawHub. A skill is a packaged automation: manage email inbox, monitor price drops, trade stocks, coordinate schedules, generate reports. You install the skill, authorize the agent to access the relevant accounts, and the agent can then execute that task type without needing step-by-step instructions each time. The agent does not just respond to prompts. It takes actions in the world on your behalf, using real credentials, in real systems, with real consequences.

That last sentence is important. The “real consequences” part is what makes OpenClaw genuinely useful and also what makes the security problems that emerged later so serious. An agent that can do things is an agent that can do the wrong things. Both sides of that coin are visible in everything that has happened to OpenClaw in the last three months.

The Naming Chaos: Clawdbot, Moltbot, OpenClaw, and a Cease-and-Desist

The naming history of this project is chaotic enough to deserve its own section because it is not just amusing backstory. It reveals a lot about the competitive dynamics of the AI industry right now and specifically about how Anthropic handled what might have been its biggest organic community win in 2025.

Steinberger named the original project Clawdbot, a portmanteau that nodded to Anthropic’s Claude model, which he and most early users were running under the hood. The name was a natural fit. The project was originally built to work with Claude, the community grew up around Claude, and the branding reflected that relationship. Steinberger was building on top of Anthropic’s platform and publicly crediting it.

Anthropic’s response was to send a cease-and-desist letter. According to VentureBeat’s reporting, the company gave Steinberger a matter of days to rename the project and sever any association with Claude, or face legal action. Anthropic even refused to allow old domains to redirect to the renamed project. The security concerns about users running agents with root access on unsecured machines were real, and Anthropic’s liability concerns were not without merit. But cutting off the most viral agent project in recent memory, one that was organically driving Claude adoption, with a legal threat rather than a conversation is the kind of decision that industry observers are still shaking their heads at.

Steinberger renamed the project Moltbot. Then, on January 30, 2026, he renamed it again to OpenClaw, landing on a name that captured both the open-source nature and the agent-grabbing-things visual metaphor. The naming changes, counterintuitively, generated more attention rather than less. Each rename brought a fresh wave of Hacker News posts, Reddit discussions, and new contributors discovering the project. The developer who had been pushed away from one AI company’s branding ended up building the project that defined the next generation of AI agents, and doing it primarily on top of competing models.

The timeline:

- November 2025: Clawdbot launched on GitHub.

- Late January 2026: Goes viral, crosses 100,000 stars in under two weeks.

- January 30: Renamed to OpenClaw.

- February 14: Steinberger joins OpenAI.

- March 3: Crosses 250,829 stars, surpassing React.

- March 10: Moltbook (commercial entity) acquired by Meta.

- Around March 15: Bitdefender discloses 824+ malicious ClawHub skills.

All of this in about four months.

The Numbers That Made the Industry Stop and Stare

GitHub star counts are a proxy metric with known limitations. Stars do not equal active users. Stars do not equal code quality. Stars do not equal long-term viability. All of that is true and the numbers are still staggering enough to be worth stating plainly.

OpenClaw accumulated 9,000 stars on the day it went viral in late January 2026, 60,000 stars three days after that, and 190,000 stars within two weeks. It reached 250,829 stars by March 3. For context: Kubernetes, which runs much of the world’s production cloud infrastructure, has about 120,000 stars after more than a decade. The Linux kernel has 195,000 after more than 30 years. React had held the top spot for years. OpenClaw lapped all of them in two months.

Within weeks of the viral explosion, 2 million people were using it every week according to reporting from CodeX. The ClawHub skills marketplace grew to over 4,000 skills within the same period. Contributors from across the world were shipping code daily, and the project had over 1,000 active contributors. China’s developer community became so enthusiastic about it that the phrase “raise a lobster,” a translation of the Chinese slang for deploying an OpenClaw agent, became a cultural shorthand in Chinese tech circles for getting into autonomous AI agents. Fortune covered it under the headline “‘Raise a lobster’: How OpenClaw is the latest craze transforming China’s AI sector.”

The community numbers tell a parallel story. OpenClaw-related subreddits and Discord servers grew faster than almost any developer tool community before them. Tutorials, YouTube walkthroughs, and third-party skill developers emerged organically without any marketing from Steinberger or the foundation. The momentum was entirely community-driven in a way that most open-source projects spend years trying to create and most never achieve.

Why OpenAI Hired Its Creator and Meta Acquired the Company in the Same Month

Steinberger joined OpenAI on February 14, 2026. His stated mission there: “build an agent that even my mum can use.” Sam Altman confirmed the hire publicly. The structure of the arrangement is notable: OpenClaw itself transitioned to an independent 501(c)(3) foundation, OpenAI is sponsoring the project but does not own the code, and the MIT license remains in place. Steinberger went to OpenAI to work on personal agents specifically. The open-source project he built is governed by the community, not by his new employer.

Separately, Moltbook, which was the commercial company Steinberger had built around OpenClaw before joining OpenAI, was acquired by Meta in March 2026 for a reported $2 billion. Meta had already acquired Manus AI, a competing autonomous agent system, in December 2025. The two acquisitions together give Meta a significant portfolio of autonomous agent technology at a time when Zuckerberg has been publicly describing superintelligence as his primary strategic goal.

VentureBeat’s analysis of the deal, which it framed as OpenAI’s acquisition of OpenClaw, was sharp: this was OpenAI acknowledging that community-built tools were outpacing its billion-dollar research labs on the interface layer that actually mattered to users. OpenAI had previously built its own Agents API, Agents SDK, and the Atlas agentic browser. None of them generated the adoption that a single developer’s weekend project achieved in four months. Hiring Steinberger was partly acquiring talent and partly acknowledging which mental model of AI agents was winning with real users.

The meta-story here is about where value is being created in the AI stack. The underlying models, GPT-5, Claude, Gemini, are extraordinary technical achievements built by hundreds of researchers over years with billions in compute investment. The interface layer that actually got two million people using AI agents every week was built by one person in one hour and spread through word of mouth. In mobile, the interface layer ended up capturing much of the value. The same dynamic appears to be playing out in AI.

Why China Is Telling Its Developers to “Raise a Lobster”

The Chinese developer community’s adoption of OpenClaw has been rapid enough that it generated its own cultural moment. “Raise a lobster” became the phrase for deploying an OpenClaw agent, derived from the claw imagery in the name and the idea of maintaining an autonomous digital creature that works on your behalf. The phrase spread across Weibo, WeChat groups, and Chinese developer forums in the same way “vibe coding” spread through Western developer communities in 2025.

The practical driver of Chinese adoption is straightforward. OpenClaw supports multiple underlying AI models including Claude, ChatGPT, Gemini, Grok, and open-source models that can be run locally. Chinese developers who cannot easily access some Western AI services can run OpenClaw on local models or on Chinese AI services. The platform’s model-agnostic architecture was a deliberate design choice by Steinberger, and it turned out to be one of the most important decisions for international adoption.

The Chinese government’s Ministry of Industry and Information Technology subsequently issued security advisories recommending that enterprises audit all OpenClaw deployments after the vulnerability disclosures. The trajectory from viral developer craze to government security advisory in the span of weeks is a compressed version of the cycle that every powerful autonomous tool goes through, and China moved through it faster than most jurisdictions did.

The Security Crisis: 512 Vulnerabilities, 824 Malicious Skills, One Very Bad Week

The security problems with OpenClaw were visible before the project went mainstream. Researcher Mav Levin publicly disclosed CVE-2026-25253 on January 26, 2026. The vulnerability has a CVSS score of 8.8, which places it in the high-severity range. The attack is mechanically elegant: OpenClaw’s Gateway Control UI accepts a gatewayUrl parameter from the query string and automatically establishes a WebSocket connection to it without prompting the user. An attacker crafts a malicious URL and tricks a user into clicking it; the browser silently sends the victim’s authentication token to the attacker’s server, and full remote code execution follows.
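The general fix for this class of bug is to stop trusting a connection target supplied by the page URL. The sketch below illustrates that idea under stated assumptions. It is not based on OpenClaw’s actual 2026.1.29 patch, whose details are not covered here. The allowlist `ALLOWED_GATEWAY_ORIGINS`, its example hosts and ports, and the helper names are all hypothetical. It is written in Python for readability, although the real Control UI runs in the browser.

```python
from urllib.parse import parse_qs, urlsplit

# Default ports for WebSocket schemes when the URL omits one.
DEFAULT_PORTS = {"ws": 80, "wss": 443}

# Hypothetical allowlist of (scheme, host, port) origins the UI may auto-connect to.
ALLOWED_GATEWAY_ORIGINS = {
    ("ws", "127.0.0.1", 8080),
    ("wss", "gateway.example.internal", 443),
}


def origin_of(url):
    """Return (scheme, host, port) for a ws:// or wss:// URL, or None if unusable."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    if scheme not in DEFAULT_PORTS or not parts.hostname:
        return None
    try:
        port = parts.port  # raises ValueError for non-numeric or out-of-range ports
    except ValueError:
        return None
    if port is None:
        port = DEFAULT_PORTS[scheme]
    return (scheme, parts.hostname, port)  # .hostname is already lowercased


def gateway_url_from_query(page_url):
    """Return the page's gatewayUrl parameter only if it points at an allowlisted origin."""
    values = parse_qs(urlsplit(page_url).query).get("gatewayUrl", [])
    if len(values) != 1:
        return None  # absent or repeated: use the configured gateway instead
    candidate = values[0]
    if origin_of(candidate) in ALLOWED_GATEWAY_ORIGINS:
        return candidate
    return None  # never auto-connect, or send a token, to an unlisted origin
```

Anything not on the list resolves to `None`, including lookalike URLs such as `ws://127.0.0.1:8080@attacker.example/`, whose real host is `attacker.example`. The UI then falls back to its configured gateway instead of handing its token to an attacker-chosen server.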

By the time that vulnerability was patched in version 2026.1.29 on January 30, independent researchers had verified that more than 17,500 internet-exposed OpenClaw instances were vulnerable. According to the same research, 93.4 percent of exposed instances had authentication bypass vulnerabilities. And CVE-2026-25253 was not the only problem. Security researchers collectively identified 512 total vulnerabilities across the platform, 8 of them rated critical or severe, including remote command execution, command injection, SSRF, Discord privilege escalation, and webhook path traversal. A project with 250,000 GitHub stars and 2 million weekly users had more critical vulnerabilities than most mature enterprise software products.

The ClawHub skills marketplace problem is in some ways more alarming than the platform vulnerabilities. Skills are installable automation packages that run inside your OpenClaw instance with access to your accounts and credentials. Bitdefender’s security team analyzed the ClawHub registry and found that 824 skills, approximately 20 percent of the total, were malicious. The primary payload was AMOS, an infostealer that specifically targets macOS systems and attempts to exfiltrate cryptocurrency wallet data, browser saved passwords, and session cookies. A user who installed what appeared to be a productivity skill to manage their email inbox or track stock prices could be silently installing malware with access to every account their OpenClaw instance was connected to.

The supply chain attack surface here is substantial. OpenClaw’s entire value proposition depends on connecting the agent to real accounts with real credentials. An email management skill needs your email credentials. A calendar skill needs your calendar access. A stock trading skill needs your brokerage credentials. A malicious skill installed from ClawHub has all of those permissions the moment it is installed. The same architectural design that made OpenClaw powerful is what made the malicious skill attack so effective.

A Meta executive publicly reported that her agent wiped her entire email account. The issue was not a malicious skill in that case but a misconfigured legitimate one given excessive permissions. When an agent can execute destructive actions with real credentials, mistakes and malicious skills have equivalent consequences from the user’s perspective.
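Both failure modes above come down to a skill holding more authority than its task needs. Here is a minimal sketch of a least-privilege grant step. It rests on several assumptions: the scope names, the `SkillManifest` shape, and the rule that destructive scopes need confirmation on every use are all hypothetical, and OpenClaw’s real permission model may work differently.

```python
from dataclasses import dataclass, field

# Hypothetical scopes that can destroy data or move money; each use needs a fresh OK.
DESTRUCTIVE_SCOPES = {"email.delete", "files.delete", "brokerage.trade"}


@dataclass
class SkillManifest:
    name: str
    requested_scopes: set[str]


@dataclass
class GrantDecision:
    granted: set[str] = field(default_factory=set)             # usable without asking
    needs_confirmation: set[str] = field(default_factory=set)  # usable per action, with user OK
    denied: set[str] = field(default_factory=set)              # requested but never approved


def decide_grants(manifest: SkillManifest, user_approved: set[str]) -> GrantDecision:
    """Grant only scopes the user approved; gate destructive ones behind confirmation."""
    decision = GrantDecision()
    for scope in manifest.requested_scopes:
        if scope not in user_approved:
            decision.denied.add(scope)
        elif scope in DESTRUCTIVE_SCOPES:
            decision.needs_confirmation.add(scope)
        else:
            decision.granted.add(scope)
    return decision


def authorize_action(decision: GrantDecision, scope: str, user_confirmed: bool = False) -> bool:
    """Check each action at call time instead of trusting the install-time grant."""
    if scope in decision.granted:
        return True
    if scope in decision.needs_confirmation:
        return user_confirmed
    return False


# Example: an inbox skill asks for more than the user is willing to hand over.
manifest = SkillManifest("inbox-cleaner", {"email.read", "email.label", "email.delete", "contacts.read"})
decision = decide_grants(manifest, user_approved={"email.read", "email.label", "email.delete"})
```

With this split, the hypothetical inbox skill can read and label mail freely. A bulk delete stalls until the user confirms it, and a scope the user never approved (`contacts.read` here) is simply unavailable.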

Microsoft’s Warning: Do Not Run This on Your Work Computer

On February 19, 2026, Microsoft published a blog post titled “Running OpenClaw Safely: Identity, Isolation, and Runtime Risk.” The key statement was unambiguous in a way that security vendor communications rarely are: OpenClaw “should be treated as untrusted code execution with persistent credentials” and is “not appropriate to run on a standard personal or enterprise workstation.”

Microsoft’s recommended deployment architecture requires full isolation: a dedicated virtual machine with a non-privileged account, network segmentation that limits what the agent can reach, access restricted to non-sensitive data only, and allowlist-only skill installation from vetted sources rather than the general ClawHub marketplace. Running OpenClaw on your daily-use machine, the machine where you are also logged into your bank, your corporate email, and your development tools, is specifically what Microsoft says not to do.
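Allowlist-only installation can be as simple as refusing any skill whose exact reviewed archive is not pinned locally. The sketch below assumes a hypothetical `VETTED_SKILLS` table that maps a skill name and version to the SHA-256 digest of the archive someone actually reviewed. Microsoft’s guidance describes the policy, not this code, and the archive bytes here are placeholders.

```python
import hashlib

# Placeholder for the bytes of a skill archive that a reviewer actually read.
REVIEWED_ARCHIVE = b"calendar-sync 1.2.0 archive contents (placeholder)"

# Hypothetical vetted allowlist: (skill name, version) -> SHA-256 of the reviewed archive.
VETTED_SKILLS = {
    ("calendar-sync", "1.2.0"): hashlib.sha256(REVIEWED_ARCHIVE).hexdigest(),
}


def may_install(name: str, version: str, archive: bytes) -> bool:
    """Allow installation only for an allowlisted skill whose bytes match the reviewed copy."""
    expected = VETTED_SKILLS.get((name, version))
    if expected is None:
        return False  # not vetted: refuse, however popular it is on ClawHub
    return hashlib.sha256(archive).hexdigest() == expected  # any change since review: refuse
```

Pinning the digest, not just the name, matters because a marketplace listing can change after review. A tampered or silently updated archive fails the check even when its name and version are unchanged.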

AWS responded to the security concerns by launching Managed OpenClaw on Lightsail, a one-click deployment blueprint that pre-configures an isolated environment with Amazon Bedrock as the default AI backend, automated IAM role creation with least-privilege permissions, and the kind of isolation the Microsoft guidance recommends. The AWS service is a direct acknowledgment that self-hosted OpenClaw, as most users were running it, was too dangerous, and too hard for most teams to secure without dedicated security expertise.

The security research community’s assessment was summarized clearly by researcher John Hammond: “Speaking frankly, I would realistically tell any normal layman, don’t use it right now.” Andrej Karpathy, who expressed enthusiastic support for the project from an innovation perspective, was essentially measuring OpenClaw against the standard of “what this enables when configured correctly.” Hammond was measuring it against the standard of “what happens when real users with limited security expertise install and use it as designed.” Both assessments are accurate and they describe different things.

The Bigger Picture: What OpenClaw Tells Us About Where AI Is Actually Going

Most major AI development stories in early 2026 have been about models getting better and benchmarks improving. OpenClaw is a different kind of signal. It is about what users actually want to do with AI once the underlying capability exists.

The chatbot paradigm that dominated AI interfaces since 2022 is a question-and-answer model. You ask, the AI answers. The session ends. The next time you open the app, the AI has no memory of what came before unless you explicitly provide context. OpenClaw represents a different paradigm: the agent is always present, has persistent memory, acts autonomously, and operates in the same communication channels where the rest of your life happens. You do not go to the AI. The AI lives where you already are.

That shift, from AI as a destination to AI as a background participant in your existing digital life, is what VentureBeat described as the beginning of the end of the ChatGPT era. The chatbot era may not actually be ending; ChatGPT has 900 million weekly active users and is still growing. But OpenClaw reached two million weekly users within weeks of going viral, without any marketing and entirely through community word of mouth, which demonstrated that people are ready for a different relationship with AI than the chatbot interface offers. The fact that OpenAI hired its creator to work on personal agents suggests OpenAI thinks so too.

The security crisis that followed the viral growth is not a reason to dismiss the concept. It is a reason to take seriously how difficult it is to build trustworthy autonomous systems. An agent that can take real actions in the world on your behalf is enormously more useful than one that can only answer questions. It is also enormously more dangerous when it goes wrong, whether from bugs, malicious skills, or misconfiguration. The engineering challenge of building autonomous agents that are both powerful and safe is not solved by enthusiasm and GitHub stars. It requires the same careful thinking about threat models, blast radius, and recovery paths that serious security engineering has always required.

Building the “safe enterprise version of OpenClaw” is now the central challenge facing every major AI platform vendor. AWS made the first move with Managed OpenClaw. OpenAI is working on it with Steinberger. Whoever first ships something that meets enterprise security requirements without sacrificing the usefulness that made OpenClaw viral will have built one of the most significant AI products of the next two years.

Should You Use It? An Honest Assessment

The honest answer in March 2026 is: probably not on your daily-use machine, not from the general ClawHub marketplace, and not without understanding what you are giving it access to. That answer applies to most users. It does not mean the project is worthless or that the concept is flawed. It means the current state of the software reflects the fact that it was built by one person in a weekend, went viral before it was hardened, and attracted a security research community that found the problems in public rather than quietly.

If you want to experiment with it because you are a developer curious about autonomous agents and you have the technical background to understand what you are running: set it up in a dedicated virtual machine, connect it only to throwaway accounts or accounts that contain nothing sensitive, install no skills from ClawHub until you have read the source code of each skill you install, and treat the experiment as research rather than a daily productivity tool. Follow the Microsoft isolation guidance. That configuration makes the security risks manageable for a technically sophisticated user.

If you are a developer building something on top of OpenClaw or a similar agent framework: the security lessons from OpenClaw’s disclosure cluster are worth studying in detail before you ship anything to users. The 512 vulnerabilities found across the platform are not exotic edge cases. They are the predictable consequences of building an autonomous system that executes user-supplied code, accepts input from untrusted channels, and runs with credentials that authorize it to take real-world actions. Getting that security architecture right from the beginning is significantly easier than retrofitting it after your users have already connected their real accounts.

For everyone else: keep an eye on how AWS’s Managed OpenClaw and OpenAI’s work with Steinberger develop over the next six months. A managed, isolated, security-reviewed version of this idea is going to exist, and it is going to be genuinely useful. The open questions are what it looks like once the rough edges are addressed and who builds it well enough for non-technical users to trust it with their actual accounts and data.

The concept is not wrong. An autonomous agent that lives in your existing messaging apps, can take actions on your behalf, and gets smarter as it learns your patterns is exactly the AI interface most people would prefer over a chatbot interface. Getting from here to there safely is the engineering problem of the moment.

Have you tried OpenClaw or a similar agent framework? What use case did you want it for and did the reality match the expectation? Drop it in the comments. The gap between what the demos show and what actually works reliably in daily use is where the most useful information lives right now.

References (March 19, 2026):

OpenClaw official 250K stars blog post: openclaws.io/blog

VentureBeat: “OpenAI’s acquisition of OpenClaw signals the beginning of the end of the ChatGPT era”: venturebeat.com

Particula.tech: “OpenClaw Hit 250K GitHub Stars, 20% of Its Skills Were Found Malicious” (CVE-2026-25253, 512 vulns, 824 malicious skills, AMOS infostealer): particula.tech

Medium/Aftab: “OpenClaw Just Beat React’s 10-Year GitHub Record in 60 Days. Now Nobody Knows What to Do With It.”: medium.com

Medium/ThamizhElango: “OpenClaw: The Lobster That Took Over the AI World”: medium.com

AI Magicx: “How One Developer Built a Viral AI Company in One Hour” (Steinberger joining OpenAI Feb 14): aimagicx.com

a16z: “Top 100 Gen AI Consumer Apps: March 2026” (OpenClaw acquired by OpenAI, Manus acquired by Meta): a16z.news

Fortune: “‘Raise a lobster’: How OpenClaw is the latest craze transforming China’s AI sector”: fortune.com

Microsoft Security blog: “Running OpenClaw Safely: Identity, Isolation, and Runtime Risk” (February 19, 2026)

Winbuzzer: “OpenClaw Overtakes React as GitHub’s Most-Starred Project” (star counts, CVE-2026-25253 patch): winbuzzer.com

One developer. One weekend. The fastest-growing open-source project in history.

It turns out what people actually wanted from AI was an agent, not a chatbot.
