Table of Contents

- Your Network Has Been Lying to You

- The Castle Model and Why It Stopped Working

- What Zero Trust Actually Means, Without the Marketing Language

- The Five Things Zero Trust Actually Checks

- Why 65% of Organizations Are Replacing Their VPNs Right Now

- The Lateral Movement Problem: What Happens After the Attacker Gets In

- Micro-Segmentation: The Technique That Stops Attackers from Spreading

- SASE: When Zero Trust Meets the Cloud

- The Real Numbers: What Zero Trust Actually Delivers

- The Parts Nobody Warns You About

- Where to Start If Your Organization Is Still Running on Implicit Trust

Your Network Has Been Lying to You

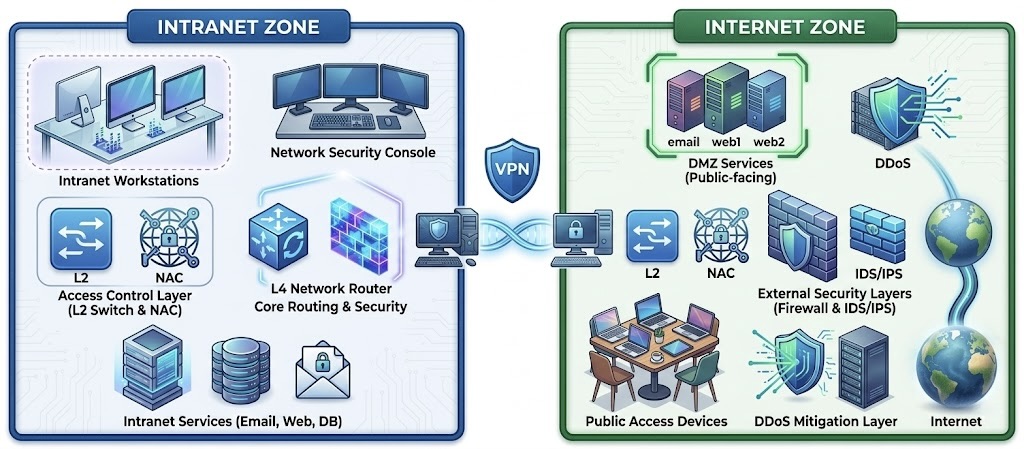

For most of the history of enterprise networking, security worked on a simple mental model: outside is dangerous, inside is safe. You built a perimeter, put a firewall on the edge, made sure only the right people could get through the gate, and then assumed that anything already inside the network could be trusted. VPNs extended that model to remote workers. You connect to the VPN, you are effectively inside the building, and the network treats you like you belong.

That model made sense when everyone worked in an office, software ran on servers in a room down the hall, and the number of devices on the corporate network was small and manageable. It does not make sense in 2026, and it has not made sense for a while. The problem is that an enormous amount of security infrastructure was built on the assumption that it is still true, and attackers figured out years ago that it is not.

Zero Trust is the architecture that replaces the inside/outside model with something more honest about how networks actually work. The name captures the core principle: trust nobody by default. Not the device that connected through the VPN. Not the employee account that authenticated this morning. Not the server that has been running in the same rack for three years. Every access request gets verified, every time, regardless of where it comes from. That sounds extreme until you understand why the alternative has been failing so consistently.

The Castle Model and Why It Stopped Working

Zero Trust was formalized around 2010 by John Kindervag, then an analyst at Forrester Research, and the model it set out to replace is usually described as “castle and moat.” The castle is your corporate network. The moat is your perimeter firewall. The drawbridge is your VPN. The model assumes that anyone who makes it across the moat is authorized to be in the castle and can move around freely once inside.

The first problem with this model is the perimeter itself. In 2026 the corporate perimeter is not a physical location. Employees access corporate applications from home networks, coffee shops, airports, and hotel rooms. Applications run in AWS, Azure, and Google Cloud rather than on servers in the office. SaaS tools like Salesforce, Slack, and Microsoft 365 hold enormous amounts of sensitive corporate data on third-party infrastructure. The concept of “inside the network” as a coherent security boundary has largely dissolved. The perimeter is everywhere and nowhere simultaneously, which makes defending it in the traditional sense effectively impossible.

The second problem is what happens when the model fails. When an attacker gets past the perimeter, through a phishing attack, a compromised credential, a VPN vulnerability, or any of the other methods that work reliably against perimeter defenses, they are immediately inside a network that was designed to trust everything it contains. The attacker can move from system to system, escalate privileges, access databases, exfiltrate data, and install backdoors, all while the security tools are watching the perimeter and seeing nothing unusual on the inside. This is called lateral movement, and it has been a central stage of most major enterprise breaches of the last decade.

The breach statistics are consistent and uncomfortable. In a 2025 survey by Zscaler of more than 600 IT and security professionals, 56 percent reported experiencing VPN-exploited breaches in the previous year. VPN vulnerabilities tracked by security researchers grew 82.5 percent over the same period, with roughly 60 percent of those vulnerabilities rated high or critical severity. The castle model’s drawbridge is actively being attacked at scale, and the vendors maintaining it cannot patch vulnerabilities fast enough to keep up.

What Zero Trust Actually Means, Without the Marketing Language

Zero Trust has been marketed so aggressively by so many vendors over the last several years that the term has accumulated a thick layer of buzzword residue. Practically every security product sold today claims to be a Zero Trust solution. Most of them are not, or are only partially so, and the gap between the marketing claim and the actual architecture matters for understanding whether a given product actually solves the problem.

Stripped of marketing language, Zero Trust is a security model built on three core assumptions. First, assume the network is already compromised. Do not wait for evidence of a breach to start verifying identities and restricting access. Design the system as if attackers are already inside, because statistically they often are. Second, verify explicitly. Every access request, from every user, from every device, to every resource, should be authenticated and authorized based on all available data at the time of the request: identity, device health, location, time of access, and what is being accessed. Third, use least privilege access. Grant access only to the specific resources a user needs for their specific task, for the minimum time required. Not access to a whole network segment. Not broad database permissions. The exact permission needed for the exact request being made, and nothing more.

Those three principles are the substance of Zero Trust. The specific products, protocols, and architectures that implement them are many and varied. But any security approach that does not embody all three principles is not genuinely Zero Trust regardless of what the vendor calls it. An organization that adds multi-factor authentication to its VPN and calls the result Zero Trust has improved its security posture somewhat and changed its marketing language considerably. It has not changed its fundamental architecture.

The Five Things Zero Trust Actually Checks

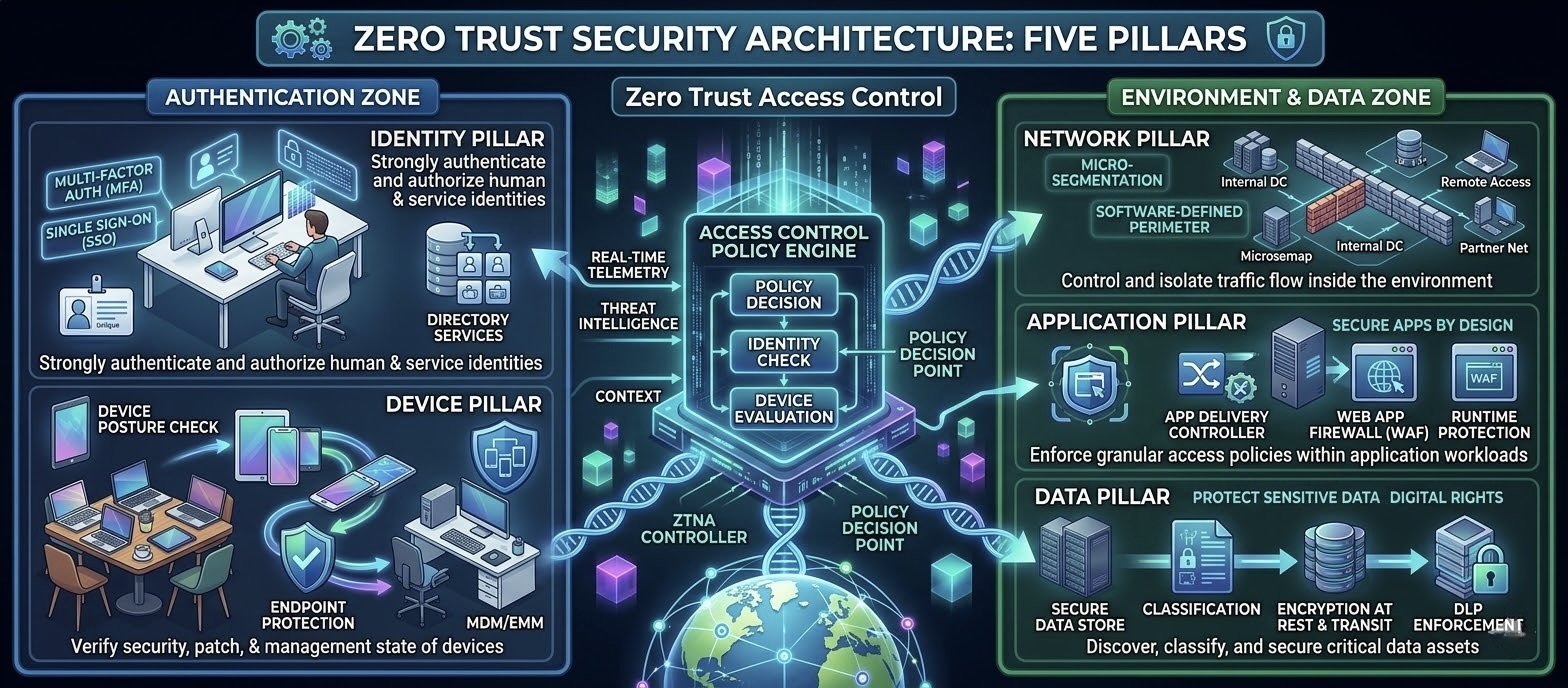

NIST, the US National Institute of Standards and Technology, published the foundational technical reference for Zero Trust Architecture, Special Publication 800-207, in 2020. Building on it, the US Cybersecurity and Infrastructure Security Agency (CISA) organizes Zero Trust implementations around five interdependent pillars in its Zero Trust Maturity Model: identity, devices, networks, applications and workloads, and data. Understanding these pillars is more useful than understanding vendor product categories, because the vendor landscape shifts constantly while the underlying components stay stable.

Identity is the foundation. Zero Trust starts with knowing who or what is making an access request. This means strong authentication, typically multi-factor authentication as the minimum, for every user. In 2026, multi-factor authentication accounts for over 87 percent of authentication deployments in Zero Trust architectures according to Grand View Research. But identity in Zero Trust goes beyond passwords and authenticator apps. It includes continuous authentication, where the system periodically re-verifies that the authenticated session is still being used by the same person through behavioral signals, rather than trusting that a session that was valid at 9am is still valid at 3pm.
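A minimal sketch of the re-verification idea, in Python. The four-hour maximum session age, the `anomaly_score` field, and the 0.7 threshold are hypothetical stand-ins for whatever behavioral signals a real identity provider exposes; the point is only that a session's validity is re-evaluated on each request rather than decided once at login.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical policy value: any session older than this must re-authenticate.
MAX_SESSION_AGE = timedelta(hours=4)

@dataclass
class Session:
    user: str
    authenticated_at: datetime
    anomaly_score: float  # hypothetical 0.0-1.0 behavioral risk signal

def needs_reverification(session: Session, now: datetime, anomaly_threshold: float = 0.7) -> bool:
    """Return True if the session must re-authenticate before the next request."""
    too_old = now - session.authenticated_at > MAX_SESSION_AGE
    behaving_oddly = session.anomaly_score >= anomaly_threshold
    return too_old or behaving_oddly

morning = Session("alice", datetime(2026, 3, 2, 9, 0), anomaly_score=0.1)
print(needs_reverification(morning, datetime(2026, 3, 2, 10, 0)))  # False
print(needs_reverification(morning, datetime(2026, 3, 2, 15, 0)))  # True: six hours old
```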

Device health is the second check. A valid user credential on a compromised device is not a trustworthy access request. Zero Trust policies typically check device compliance status before granting access: is the operating system patched? Is the endpoint security software running and up to date? Has the device been registered and managed by the organization? An employee whose laptop has been infected with malware should not be able to access corporate systems just because their credentials are valid. Device health verification catches this.
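Device health checks reduce to a list of conditions evaluated before access is granted. The sketch below assumes a hypothetical posture record and made-up thresholds (`MIN_OS_BUILD`, `MAX_SIGNATURE_AGE_DAYS`); in practice these fields come from device management and endpoint security tooling, and the exact checks vary by product.

```python
from dataclasses import dataclass

# Hypothetical compliance baseline; real values come from your MDM/EDR tooling.
MIN_OS_BUILD = (14, 3)
MAX_SIGNATURE_AGE_DAYS = 3

@dataclass
class DevicePosture:
    managed: bool               # enrolled in the organization's device management
    os_build: tuple[int, int]   # (major, minor) version reported by the device
    edr_running: bool           # endpoint security agent is active
    signature_age_days: int     # days since the agent's definitions were updated

def compliance_failures(d: DevicePosture) -> list[str]:
    """List every reason the device fails policy; an empty list means compliant."""
    failures = []
    if not d.managed:
        failures.append("device not managed")
    if d.os_build < MIN_OS_BUILD:
        failures.append("operating system below minimum patch level")
    if not d.edr_running:
        failures.append("endpoint security agent not running")
    elif d.signature_age_days > MAX_SIGNATURE_AGE_DAYS:
        failures.append("endpoint security definitions out of date")
    return failures

laptop = DevicePosture(managed=True, os_build=(14, 1), edr_running=True, signature_age_days=1)
print(compliance_failures(laptop))  # ['operating system below minimum patch level']
```

Returning every failure rather than a bare yes/no lets the access denial tell the employee what to fix.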

Network context provides additional signal for access decisions. The same user requesting access from their registered work laptop on a known corporate network is a different risk profile from the same user requesting access from an unrecognized IP address in a country they have never worked from before. Zero Trust systems use this context to adjust access decisions dynamically, not to block legitimate travel but to require additional verification when the context suggests higher risk.
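Context is typically folded into a risk score that raises the verification requirement rather than blocking outright. This is a sketch with assumed weights and a hypothetical `RequestContext` record, not any vendor's scoring model:

```python
from dataclasses import dataclass

@dataclass
class RequestContext:
    registered_device: bool
    known_network: bool
    country: str
    usual_countries: frozenset[str]

def access_decision(ctx: RequestContext) -> str:
    """Map context signals to 'allow' or 'step_up' (require extra verification).

    Unusual context raises the bar instead of blocking, so a legitimate
    traveller can still get in after stronger verification. Weights are hypothetical.
    """
    risk = 0
    if not ctx.registered_device:
        risk += 2
    if not ctx.known_network:
        risk += 1
    if ctx.country not in ctx.usual_countries:
        risk += 2
    return "allow" if risk <= 1 else "step_up"

home = frozenset({"GB"})
print(access_decision(RequestContext(True, True, "GB", home)))   # allow: office laptop, office network
print(access_decision(RequestContext(True, False, "JP", home)))  # step_up: hotel network abroad
```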

Application authorization is where least privilege becomes concrete. Rather than granting access to a network segment where many applications live, Zero Trust grants access to a specific application or resource for a specific session. A marketing employee who needs to update a website does not get access to the database server that lives in the same network segment as the content management system. The access policy is defined at the application level, not the network level.
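At its simplest, application-level authorization is a deny-by-default lookup keyed on the application rather than on a network address. The roles, application names, and `ENTITLEMENTS` table below are hypothetical:

```python
# Hypothetical role-to-application entitlements. Anything not listed is denied.
ENTITLEMENTS: dict[str, frozenset[str]] = {
    "marketing": frozenset({"cms", "analytics-dashboard"}),
    "payroll": frozenset({"payroll-app"}),
}

def authorize(role: str, application: str) -> bool:
    """Grant access to one named application, never to the segment it sits in."""
    return application in ENTITLEMENTS.get(role, frozenset())

print(authorize("marketing", "cms"))           # True
print(authorize("marketing", "cms-database"))  # False: same segment, but not entitled
print(authorize("contractor", "cms"))          # False: unknown roles get nothing
```

Because the CMS database never appears as an entitlement, sharing a network segment with the CMS grants the marketing role nothing.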

Data classification completes the picture by adding the sensitivity of what is being accessed as a factor in access decisions. Access to a public marketing document is a different risk level than access to the employee payroll database. Zero Trust architectures classify data and apply more stringent verification requirements for more sensitive assets. Accessing source code repositories, customer databases, or financial records may require stronger authentication, device compliance checks, and session recording that routine file access does not.
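One way to express sensitivity-based requirements is a table mapping each classification tier to the controls a session must already satisfy. The three tiers and the control names in this sketch are assumptions chosen for illustration:

```python
from enum import IntEnum

class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    RESTRICTED = 2  # e.g. source code, customer databases, financial records

# Hypothetical mapping from tier to the controls required before access.
REQUIRED_CONTROLS = {
    Sensitivity.PUBLIC: frozenset(),
    Sensitivity.INTERNAL: frozenset({"mfa"}),
    Sensitivity.RESTRICTED: frozenset({"mfa", "compliant_device", "session_recording"}),
}

def missing_controls(classification: Sensitivity, satisfied: set[str]) -> set[str]:
    """Return the controls still needed before data of this tier may be accessed."""
    return set(REQUIRED_CONTROLS[classification] - satisfied)

session_controls = {"mfa", "compliant_device"}
print(missing_controls(Sensitivity.PUBLIC, session_controls))      # set()
print(missing_controls(Sensitivity.RESTRICTED, session_controls))  # {'session_recording'}
```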

Why 65% of Organizations Are Replacing Their VPNs Right Now

In the Zscaler ThreatLabz 2025 survey, 65 percent of organizations stated plans to replace VPN services within the year, a 23 percent increase from the previous year’s survey. That acceleration is worth paying attention to because it reflects a shift from “VPNs have some problems we are aware of” to “VPNs are a liability we need to eliminate.”

The security argument against VPNs in a Zero Trust context is structural rather than about any specific vulnerability. When a VPN grants network access, it grants broad network access. The employee who connects through VPN to access the project management tool also has, at the network layer, a path to the database servers, the internal HR systems, the code repositories, and anything else connected to the same network. The only thing preventing them from accessing all of it is application-level access controls, which vary in quality and completeness across different systems. An attacker who compromises a VPN user’s credentials inherits all of that implicit network access simultaneously.

Zero Trust Network Access, usually called ZTNA, is the architectural replacement for VPN. Instead of creating a network tunnel that puts the device inside the corporate network, ZTNA creates an application-specific connection that gives access only to the specific application the user needs at that moment. The device never touches the corporate network directly. It connects to a proxy service that mediates access to the specific application, verifying identity and device health at each connection, and routes only the traffic for that application through the authorized path. An attacker who compromises credentials in a ZTNA architecture gets access to the specific applications those credentials are authorized for. They do not get implicit access to the rest of the network.
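The difference in what a stolen credential unlocks can be modelled in a few lines. This sketch uses Python's standard `ipaddress` module with hypothetical host names and addresses; it models reachability only and is not an implementation of a ZTNA proxy:

```python
import ipaddress

# Hypothetical internal hosts, all on the same corporate network.
HOSTS = {
    "project-tool": ipaddress.ip_address("10.20.1.10"),
    "hr-system": ipaddress.ip_address("10.20.2.15"),
    "customer-db": ipaddress.ip_address("10.20.5.20"),
}

def reachable_via_vpn(routed_network: str) -> set[str]:
    """A VPN tunnel routes a whole network: every host inside it is reachable."""
    net = ipaddress.ip_network(routed_network)
    return {name for name, ip in HOSTS.items() if ip in net}

def reachable_via_ztna(entitled_apps: set[str]) -> set[str]:
    """A ZTNA broker only mediates connections to apps the user is entitled to."""
    return {name for name in entitled_apps if name in HOSTS}

print(sorted(reachable_via_vpn("10.20.0.0/16")))  # all three hosts
print(sorted(reachable_via_ztna({"project-tool"})))  # ['project-tool']
```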

The performance argument is also real. VPNs were designed to route all traffic back through a central concentration point, typically a VPN gateway at headquarters or in a data center. When employees are accessing SaaS applications that are hosted in cloud regions geographically distant from that gateway, their traffic takes an unnecessarily long route. A user in London accessing a Salesforce instance hosted in a European AWS region should not have their traffic routed through a VPN gateway in New York. ZTNA implementations can connect users directly to cloud-hosted applications from the nearest point of presence, reducing latency significantly. In the Tailscale survey, 37 percent of organizations reported daily or weekly employee complaints about remote access and network security, a measure of the frustration that has built up over years of cloud adoption without corresponding updates to VPN architecture.
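To put rough numbers on the London example, the sketch below computes a propagation-only lower bound on round-trip time. It assumes great-circle paths, light in fiber covering about 200 km per millisecond, and an application region in Frankfurt (an assumption; the text only says a European region). Real round trips are higher because of routing and equipment delays, but the ratio between the two paths is the point:

```python
import math

# Approximate city coordinates (latitude, longitude). Frankfurt is an assumed region.
LONDON = (51.5074, -0.1278)
NEW_YORK = (40.7128, -74.0060)
FRANKFURT = (50.1109, 8.6821)

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # light in optical fiber covers roughly 200 km per millisecond

def great_circle_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Haversine distance between two (lat, lon) points in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

def min_rtt_ms(*path: tuple[float, float]) -> float:
    """Propagation-only round trip along a path of waypoints (a lower bound)."""
    one_way = sum(great_circle_km(p, q) for p, q in zip(path, path[1:]))
    return 2 * one_way / FIBER_KM_PER_MS

direct = min_rtt_ms(LONDON, FRANKFURT)
hairpin = min_rtt_ms(LONDON, NEW_YORK, FRANKFURT)
print(f"direct ~{direct:.0f} ms, via New York gateway ~{hairpin:.0f} ms")
```

Even before any VPN processing overhead, the hairpin through New York adds on the order of a hundred milliseconds to every round trip.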

The Lateral Movement Problem: What Happens After the Attacker Gets In

Lateral movement is the phase of an attack that happens after initial access and before the actual damage. An attacker who has compromised a single account or endpoint through phishing, a software vulnerability, or a stolen credential typically does not have access to the specific system they want to target. They have a foothold somewhere in the network. They need to move from that foothold to the assets that are actually valuable: the customer database, the financial records, the backup systems, the code repositories.

In a traditional perimeter-based network, lateral movement is relatively easy once initial access is achieved. Flat networks, where all systems can communicate with each other by default, are common in legacy enterprise environments because they were designed for convenience rather than security. An attacker who compromises an employee laptop can probe other systems on the same network segment, find systems with known vulnerabilities, exploit them, and progressively gain access to more sensitive resources. This process typically takes hours to days in a flat network. It has happened this way in most of the major enterprise breaches of the last decade.

Zero Trust addresses lateral movement through a combination of the least privilege access model and micro-segmentation. If the compromised laptop has no network-level connectivity to the database servers, an attacker who has taken over that laptop cannot reach the databases regardless of what vulnerabilities exist in those systems. The access policy prevents the connection before any vulnerability can be exploited. Gartner estimates that Zero Trust implementations mitigate up to 25 percent of overall enterprise risk, and reduced opportunity for lateral movement is a large part of that reduction.

The breach cost difference: according to published Zero Trust security statistics, organizations with Zero Trust architectures reduce the average cost of a data breach by approximately $1 million compared to organizations without it. IBM’s Cost of a Data Breach Report has consistently put the average enterprise breach at over $4 million, so a $1 million reduction is an improvement of up to roughly 25 percent on one of the most expensive security outcomes a company can face.

Micro-Segmentation: The Technique That Stops Attackers from Spreading

Micro-segmentation is the network architecture technique that operationalizes the Zero Trust principle of least privilege at the infrastructure level. Instead of one large flat network or a small number of coarsely defined network zones, micro-segmentation creates very fine-grained network boundaries where each workload, application, or service communicates only with the specific other workloads it needs to communicate with to function.

In a micro-segmented network, the payroll application talks to the payroll database and nothing else. The web server talks to the application server and the load balancer and nothing else. The employee laptop talks to the specific SaaS applications and internal services the employee’s role requires and nothing else. Every connection that is not explicitly permitted in policy is blocked by default. This is the network equivalent of the least privilege access principle applied at the traffic flow level rather than the user permission level.
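A default-deny flow policy can be sketched as an explicit allowlist of (source, destination, port) tuples. The workload names and ports here are hypothetical, and real enforcement happens in security groups, network policies, or host firewalls rather than in application code:

```python
from typing import NamedTuple

class Flow(NamedTuple):
    src: str
    dst: str
    port: int

# Hypothetical policy: every permitted flow is listed explicitly.
ALLOWED_FLOWS = frozenset({
    Flow("load-balancer", "web-server", 443),
    Flow("web-server", "app-server", 8443),
    Flow("payroll-app", "payroll-db", 5432),
})

def is_permitted(src: str, dst: str, port: int) -> bool:
    """Default deny: a connection passes only if it exactly matches a listed flow."""
    return Flow(src, dst, port) in ALLOWED_FLOWS

print(is_permitted("payroll-app", "payroll-db", 5432))  # True
print(is_permitted("web-server", "payroll-db", 5432))   # False: never declared
```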

The implementation of micro-segmentation has traditionally been painful. Mapping all the legitimate traffic flows in a complex enterprise environment, translating them into firewall rules, and keeping those rules current as applications change is operationally intensive. This is one reason micro-segmentation was widely recommended for years before being widely deployed. Software-defined networking and cloud-native tools have made it significantly more tractable in the last few years. AWS Security Groups, Azure Network Security Groups, and Kubernetes Network Policies (enforced by a network plugin that supports them) all provide micro-segmentation natively for cloud workloads. For on-premises environments, segmentation and software-defined perimeter products from vendors such as Zscaler, Palo Alto Networks, and Illumio handle much of the policy management complexity that made manual firewall rule management impractical at scale.

The result in production is not just better security. It is better incident response. When a breach does occur in a micro-segmented environment, the blast radius is confined to the segment where the initial compromise happened. Security teams can identify exactly which workloads were reachable from the compromised system and scope the investigation accordingly. The difference between a breach that touches three systems and a breach that touches three hundred systems is not just the immediate damage. It is weeks of forensic investigation time, regulatory notification requirements, and customer notification obligations that scale with the scope of the exposure.
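Scoping an investigation comes down to a reachability question: starting from the compromised workload, which others could it connect to, directly or by pivoting? A breadth-first search over the permitted connections answers it. The environment below is hypothetical and treats every reachable workload as potentially compromised, a deliberately worst-case assumption:

```python
from collections import deque

def blast_radius(edges: dict[str, set[str]], compromised: str) -> set[str]:
    """All workloads reachable from the compromised one via permitted connections."""
    seen = {compromised}
    queue = deque([compromised])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, set()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {compromised}

# Hypothetical environment. In a flat network everything can reach everything.
workloads = ["laptop", "web-server", "app-server", "payroll-app", "payroll-db", "backups"]
flat = {w: set(workloads) - {w} for w in workloads}

# Micro-segmented: only the flows the applications need.
segmented = {
    "laptop": {"web-server"},
    "web-server": {"app-server"},
    "payroll-app": {"payroll-db"},
}

print(sorted(blast_radius(flat, "laptop")))       # all five other workloads
print(sorted(blast_radius(segmented, "laptop")))  # ['app-server', 'web-server']
```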

SASE: When Zero Trust Meets the Cloud

Secure Access Service Edge, pronounced “sassy,” is the architecture that emerges when you take Zero Trust principles and apply them to the reality of a workforce that is distributed across homes, offices, and mobile locations, accessing applications that are distributed across cloud providers, SaaS platforms, and on-premises data centers.

Gartner coined the term in 2019 and defined it as the convergence of wide area networking and network security functions into a single cloud-delivered service. In practical terms, SASE combines several capabilities that were previously separate products: software-defined WAN for optimizing connectivity, Cloud Access Security Broker for visibility into SaaS usage, Secure Web Gateway for filtering web traffic, Zero Trust Network Access for replacing VPN, and Firewall-as-a-Service for policy enforcement. Instead of routing traffic through a central hardware appliance at headquarters, SASE delivers all of these functions from cloud points of presence that are geographically close to users and the applications they access.

The reason SASE gained traction so quickly after 2020 is that the pandemic-driven shift to remote work exposed the fundamental mismatch between hub-and-spoke VPN architectures and a workforce where the hub was suddenly less relevant than the spokes. Organizations that had been planning gradual migrations to cloud-native security architectures were forced to accelerate when their VPN infrastructure became the primary connectivity mechanism for 100 percent of their workforce simultaneously. Many of those VPN systems were not designed for that load and performed poorly under it, which made the performance argument for SASE immediate and concrete rather than theoretical.

The SASE market has grown quickly enough that it is now a core consideration in enterprise networking decisions rather than a forward-looking architecture experiment. Cisco, Palo Alto Networks, Zscaler, and a range of other vendors have built comprehensive SASE platforms that enterprises are deploying at scale. Managing multiple point products in the traditional enterprise network stack (a separate VPN, web proxy, DLP tool, and CASB) is a real operational burden, and a unified SASE platform reduces it.

The Real Numbers: What Zero Trust Actually Delivers

The market size numbers for Zero Trust tell the adoption story. The global Zero Trust architecture market was valued at $34.5 billion in 2024 and is projected to reach $84 billion by 2030, growing at 16.5 percent annually according to Grand View Research. A separate estimate from Expert Insights puts the 2025 market at $38.37 billion growing to $86.57 billion by 2030 at 17.7 percent CAGR. The spread between these estimates reflects different market definition boundaries, but the direction and magnitude are consistent across all major research firms: this is one of the fastest-growing segments in enterprise technology.
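The growth rates can be checked against the endpoints with the standard compound annual growth rate formula, (end / start)^(1 / years) - 1. A short sketch:

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by start and end values over `years` years."""
    return (end / start) ** (1 / years) - 1

# Expert Insights: $38.37B (2025) -> $86.57B (2030)
print(f"{cagr(38.37, 86.57, 5):.1%}")  # 17.7%
# Grand View Research: $34.5B (2024) -> $84.08B (2030)
print(f"{cagr(34.5, 84.08, 6):.1%}")   # 16.0%
```

The Expert Insights endpoints reproduce their stated 17.7 percent. The Grand View Research endpoints work out to about 16 percent a year over 2024 to 2030, slightly below the reported 16.5 percent; published rates are often computed from a different base year, so small mismatches like this are common and do not change the overall picture.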

The adoption data is similarly consistent. Gartner found 63 percent of organizations worldwide had implemented Zero Trust either partially or fully as of their most recent survey. A survey of over 2,200 IT and business leaders found 46 percent in the process of moving to a Zero Trust model and 43 percent already operating on Zero Trust principles, leaving only 11 percent with no current Zero Trust implementation. The 96 percent favorability rate from the Zscaler survey, where virtually every organization that had evaluated Zero Trust said they favored the approach, reflects an unusually high level of consensus for an enterprise security architecture.

The outcome data is what makes the adoption trend self-reinforcing. In Zscaler’s survey, 76 percent of organizations that completed Zero Trust migrations cited improved security and compliance as the primary advantage. The breach cost reduction of approximately $1 million per incident creates a financial case that is straightforward to make to CFOs who are accustomed to evaluating security investments on risk-adjusted return. Gartner’s estimate that Zero Trust adoption typically covers about half of an organization’s environment and mitigates up to a quarter of overall enterprise risk may sound modest, but it still represents a substantial reduction in risk from a single architectural change.

| Model | Trust assumption | Access model | Failure mode | Where it works |
|---|---|---|---|---|
| Perimeter (Castle and Moat) | Inside = trusted, outside = untrusted | Broad network access once inside | Perimeter breach = attacker has full lateral movement | Small office, fully on-premises, pre-cloud era |
| VPN Extended Perimeter | VPN session = trusted, same as inside | Full network tunnel into corporate segment | Compromised VPN credential = full network access | Hybrid workforce before cloud adoption scaled |
| Zero Trust (ZTNA) | Nobody trusted by default, verify everything | Per-application, per-session, least privilege | Compromised credential = access only to permitted apps | Cloud-first, distributed workforce, hybrid environments |
| SASE | Zero Trust + unified cloud delivery | All security functions cloud-delivered near user and app | Service provider outage, policy misconfiguration | Fully distributed organizations with heavy SaaS use |

The Parts Nobody Warns You About

Zero Trust architectures are not fast to implement or cheap to operate, and most coverage of the topic understates both. The most consistently cited implementation challenge, reported by 49 percent of organizations in StrongDM’s cloud security survey, is maintaining consistent security policies across diverse and heterogeneous environments. An organization that has on-premises systems, multiple cloud providers, dozens of SaaS applications, and a mix of managed and unmanaged devices needs to define and enforce Zero Trust policies across all of them using tools that were often designed independently and do not integrate natively.

The identity foundation is where many implementations run into unexpected depth. Moving to Zero Trust requires knowing, with confidence, who every user is, what every device is, and what every application is at a level of completeness that most organizations discover they do not actually have. Shadow IT, the SaaS applications purchased by business units without IT involvement, creates identity and access blind spots that are invisible until you try to map them. Unmanaged devices, contractors using personal equipment, shared service accounts with unclear ownership: these are all real inventories that organizations discover are larger and messier than expected when they start building the asset map that Zero Trust policy enforcement requires.

Organizational change is the hardest part, and the one that appears least in technical documentation. Zero Trust requires networking and security teams to think and operate differently. It requires application teams to understand and cooperate with policy definitions that affect their applications. It requires end users to accept additional authentication steps that add more friction than the VPN they already complained about. The survey data showing that 22 percent of organizations faced internal resistance during Zero Trust adoption reflects a real deployment dynamic. The security argument is clear. The process change that implementing it requires is not always welcome.

The people who have done these migrations successfully share a consistent approach: they start with the highest-risk assets, not with the entire environment. A new critical application going into production is a natural starting point for Zero Trust policies, much easier than retrofitting a twenty-year-old on-premises system. Legacy applications that do not support modern authentication protocols require additional infrastructure, typically an identity proxy that sits in front of the application and handles the modern authentication on its behalf. That adds complexity and is sometimes not feasible for particularly old systems. Organizations that tried to boil the ocean and migrate everything simultaneously are the ones that ended up with long, painful projects. Organizations that started with a defined scope and expanded methodically are the ones that report successful deployments without major delays.

Where to Start If Your Organization Is Still Running on Implicit Trust

If your organization is still running primarily on VPN-based remote access with broad network trust, you are in a large and rapidly shrinking minority. Sixty-three percent of organizations are already partially or fully on Zero Trust. The question is not whether to move but how to prioritize the migration given real constraints of time, budget, and operational risk.

The highest-return first step is enforcing multi-factor authentication everywhere, without exceptions. It is the single most effective control against credential-based attacks, it is foundational to every Zero Trust architecture, and it can be deployed incrementally without changing the underlying network architecture. Organizations that have not yet enforced MFA across all administrative access, remote access, and cloud applications are leaving the most accessible security improvement on the table regardless of where they are in a broader Zero Trust journey.
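For readers wondering what the most common second factor actually computes: authenticator apps typically implement time-based one-time passwords (TOTP, RFC 6238), an HMAC over the current 30-second time step. The sketch below uses only the Python standard library; the `totp` and `verify` names are illustrative, and phishing-resistant factors such as FIDO2/WebAuthn security keys are the stronger choice where they are supported. It reproduces the RFC 6238 SHA-1 test vector:

```python
import hashlib
import hmac
import struct
import time
from typing import Optional

STEP_SECONDS = 30  # RFC 6238 default time step

def totp(secret: bytes, at: Optional[float] = None, digits: int = 6) -> str:
    """Time-based one-time password per RFC 6238 using HMAC-SHA1."""
    now = time.time() if at is None else at
    counter = int(now // STEP_SECONDS)
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation, as defined in RFC 4226
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def verify(secret: bytes, submitted: str, at: Optional[float] = None, window: int = 1) -> bool:
    """Accept a code from the current step or up to `window` steps either side (clock drift)."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(secret, now + i * STEP_SECONDS, len(submitted)), submitted)
        for i in range(-window, window + 1)
    )

secret = b"12345678901234567890"  # RFC 6238 test secret, not a real credential
print(totp(secret, at=59, digits=8))  # 94287082
```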

The second highest-impact action for most organizations is a Zero Trust Network Access evaluation for VPN replacement, starting with the remote access use case that is most painful today. Most organizations have at least one VPN scenario that is either performing poorly, generating regular support tickets, or has been involved in a security incident. That scenario is the right place to pilot ZTNA because the baseline for comparison is something that is already causing problems, which makes the improvement visible and measurable.

For developers and engineering teams specifically, the most immediate Zero Trust practice to adopt is treating your development environment as if it were production from an access control perspective. Developer machines are extremely high-value targets for attackers because developers often have broad access to production systems, source code, and infrastructure credentials. Applying least privilege access controls to developer tooling, rotating credentials regularly, and using short-lived tokens rather than long-lived API keys are Zero Trust practices that individual developers and small teams can implement without organization-wide programs. GlassWorm, the active supply chain attack described in CyberDevHub’s article from this week, targeted developer credentials precisely because compromising a developer environment often provides a path into everything else.
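The short-lived token idea can be sketched with the standard library: a token names one scope, carries an expiry, and is rejected once that time passes. `issue_token`, `check_token`, the claim names, and the 15-minute default lifetime are all hypothetical; in production you would use an established format through a vetted library (JWTs, for example) or your cloud provider's temporary credentials rather than this simplified scheme:

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical signing key; in practice it lives in a secrets manager and is rotated.
SIGNING_KEY = b"replace-with-a-managed-secret"

def _sign(body: bytes) -> bytes:
    return base64.urlsafe_b64encode(hmac.new(SIGNING_KEY, body, hashlib.sha256).digest())

def issue_token(subject: str, scope: str, ttl_seconds: int = 900, now: Optional[float] = None) -> str:
    """Mint a token limited to one scope that expires after ttl_seconds."""
    issued = time.time() if now is None else now
    claims = {"sub": subject, "scope": scope, "exp": int(issued) + ttl_seconds}
    body = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    return (body + b"." + _sign(body)).decode()

def check_token(token: str, required_scope: str, now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if signature, expiry, and scope all check out; otherwise None."""
    body, _, sig = token.encode().partition(b".")
    if not hmac.compare_digest(sig, _sign(body)):
        return None  # forged or tampered
    claims = json.loads(base64.urlsafe_b64decode(body))
    current = time.time() if now is None else now
    if current >= claims["exp"] or claims["scope"] != required_scope:
        return None  # expired, or valid for a different task
    return claims

t = issue_token("ci-deploy", "deploy:staging", ttl_seconds=900, now=1_000_000)
print(check_token(t, "deploy:staging", now=1_000_100) is not None)  # True: still valid
print(check_token(t, "deploy:staging", now=1_000_900))              # None: expired
print(check_token(t, "deploy:production", now=1_000_100))           # None: wrong scope
```

A leaked token of this kind is useful to an attacker for minutes and for one task, whereas a leaked long-lived API key stays useful until someone notices and revokes it.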

The Zero Trust market at $38 billion and growing at 17 percent annually is not driven by vendor hype. It is driven by the consistent experience of organizations that tried to defend a perimeter that no longer exists and found that it did not work. The architecture that replaced it is not simpler to operate. It is more honest about the threat model and more effective at limiting the damage when the inevitable breach occurs.

What is your organization’s current state on this: fully on perimeter-based trust, somewhere in the middle of a Zero Trust migration, or operating a mature Zero Trust environment? The specific challenge people are hitting in the middle of migrations is the most useful thing to discuss in the comments, because that is where the practical knowledge that does not appear in vendor documentation actually lives.

References (March 2026):

Zscaler ThreatLabz 2025 VPN Risk Report (600+ IT professionals, VPN breach statistics, ZTNA adoption): cio.com

NIST Special Publication 800-207: Zero Trust Architecture (2020): nvlpubs.nist.gov

Grand View Research: Zero Trust Architecture Market, $34.5B (2024) to $84.08B (2030): grandviewresearch.com

Expert Insights: Zero Trust Adoption Statistics 2025 (Gartner 63% adoption figure): expertinsights.com

Tailscale State of Zero Trust Report 2025 (1,000 IT/security/engineering professionals): tailscale.com

StrongDM: State of Zero Trust Security in the Cloud (600 cybersecurity professionals): strongdm.com

INE Top 5 Networking Trends 2026 (ZTA as foundational): ine.com

Sixty-three percent of organizations have already moved away from trusting their own networks.

The remaining thirty-seven percent are not more secure. They are just slower.
